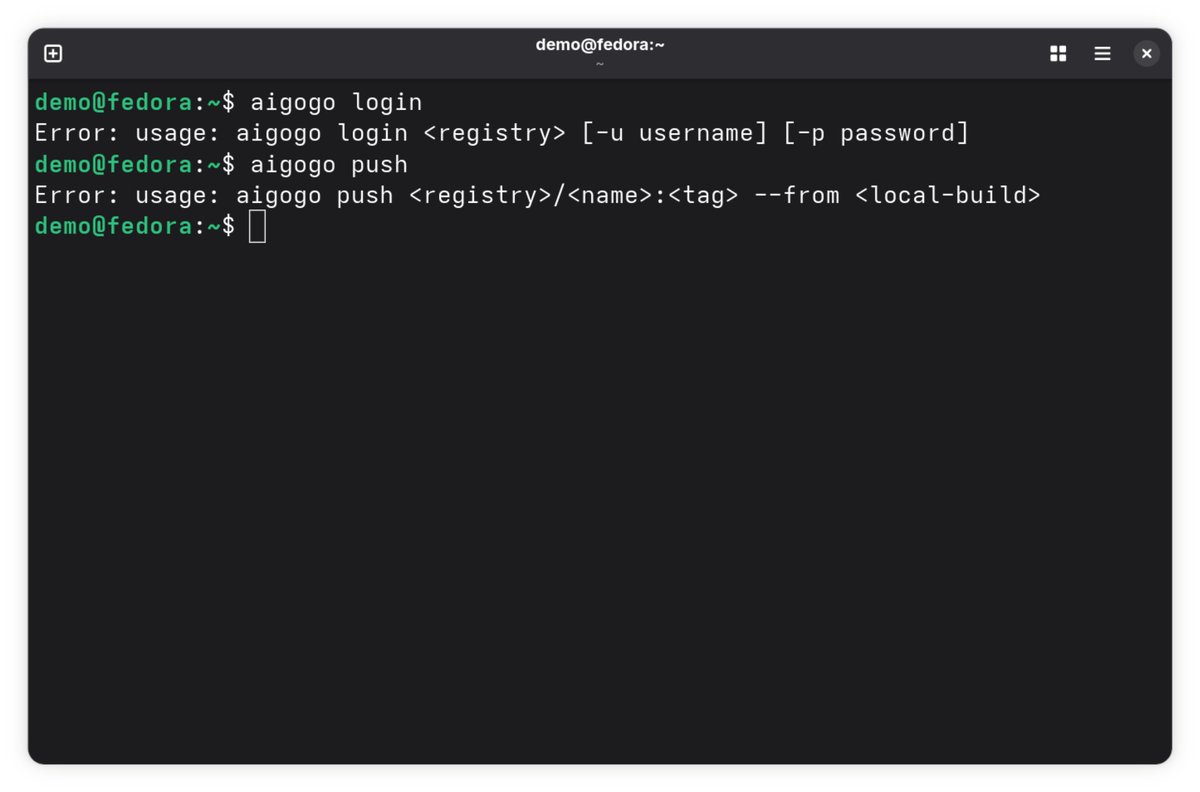

Gave a talk at MLAI Melbourne last Tuesday on aigogo. I was fed up with there being no good way to reuse AI agents between projects, so I built a package manager for them. Turns out other people have the same problem. Good crowd and a cool meetup. github.com/aupeachmo/aigo…
