
On 𝗧𝗵𝘂𝗿𝘀𝗱𝗮𝘆, 𝗙𝗲𝗯𝗿𝘂𝗮𝗿𝘆 𝟮𝟲𝘁𝗵, 𝗮𝘁 𝟭𝟰:𝟬𝟬 𝗘𝗮𝘀𝘁𝗲𝗿𝗻 𝗧𝗶𝗺𝗲, I will present a 𝘄𝗲𝗯𝗶𝗻𝗮𝗿 titled “𝗧𝗵𝗲 𝗣𝗿𝗼𝗺𝗽𝘁𝘄𝗮𝗿𝗲 𝗞𝗶𝗹𝗹 𝗖𝗵𝗮𝗶𝗻: From Prompt Injection to Multi-Step LLM Malware.” The talk is based on joint work with Oleg Brodt, Elad Feldman, and Bruce Schneier.

Registration link:

webinar.connectmeinforma.com/event/register…

𝗔𝗯𝘀𝘁𝗿𝗮𝗰𝘁:

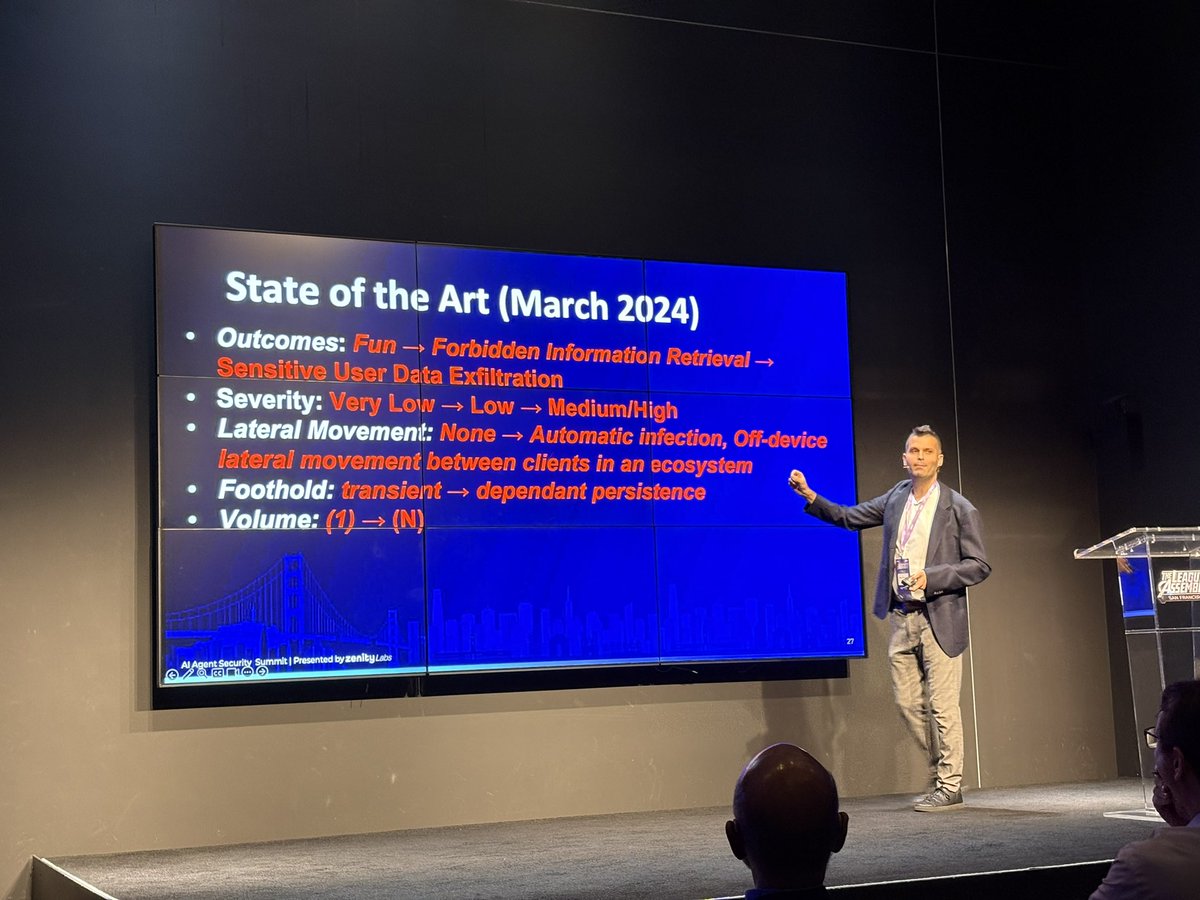

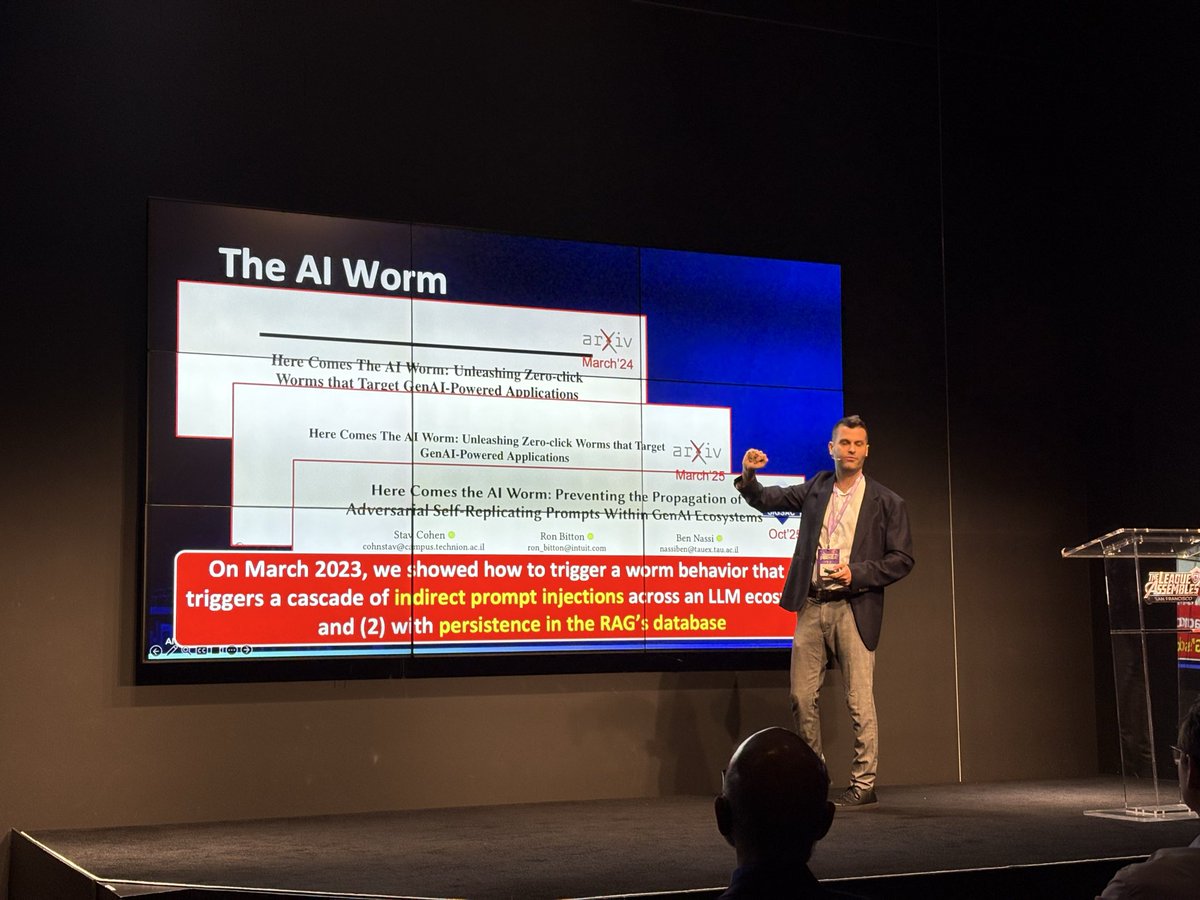

“The Promptware Kill Chain: From Prompt Injection to Multi-Step LLM Malware” explores the evolution of prompt injection attacks into a sophisticated seven-stage kill chain: initial access, privilege escalation, reconnaissance, persistence, command & control, lateral movement, and actions on objectives. It introduces the concept of Promptware and provides an in-depth analysis of each stage, highlighting advancements in the field over the last three years.

#blackhat #infosec #webinar #prompt_injection #promptware
