Pinned Tweet

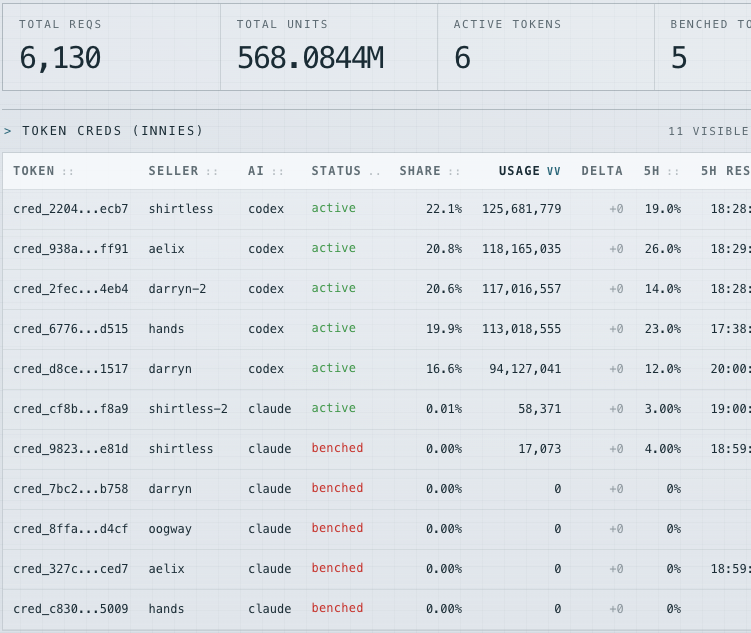

@litcapital cartel's about to get a $200k/yr enterprise quote and a 90-day onboarding call

shirtless

2.5K posts

@bicep_pump

severance floor manager https://t.co/w8rSqB91tj

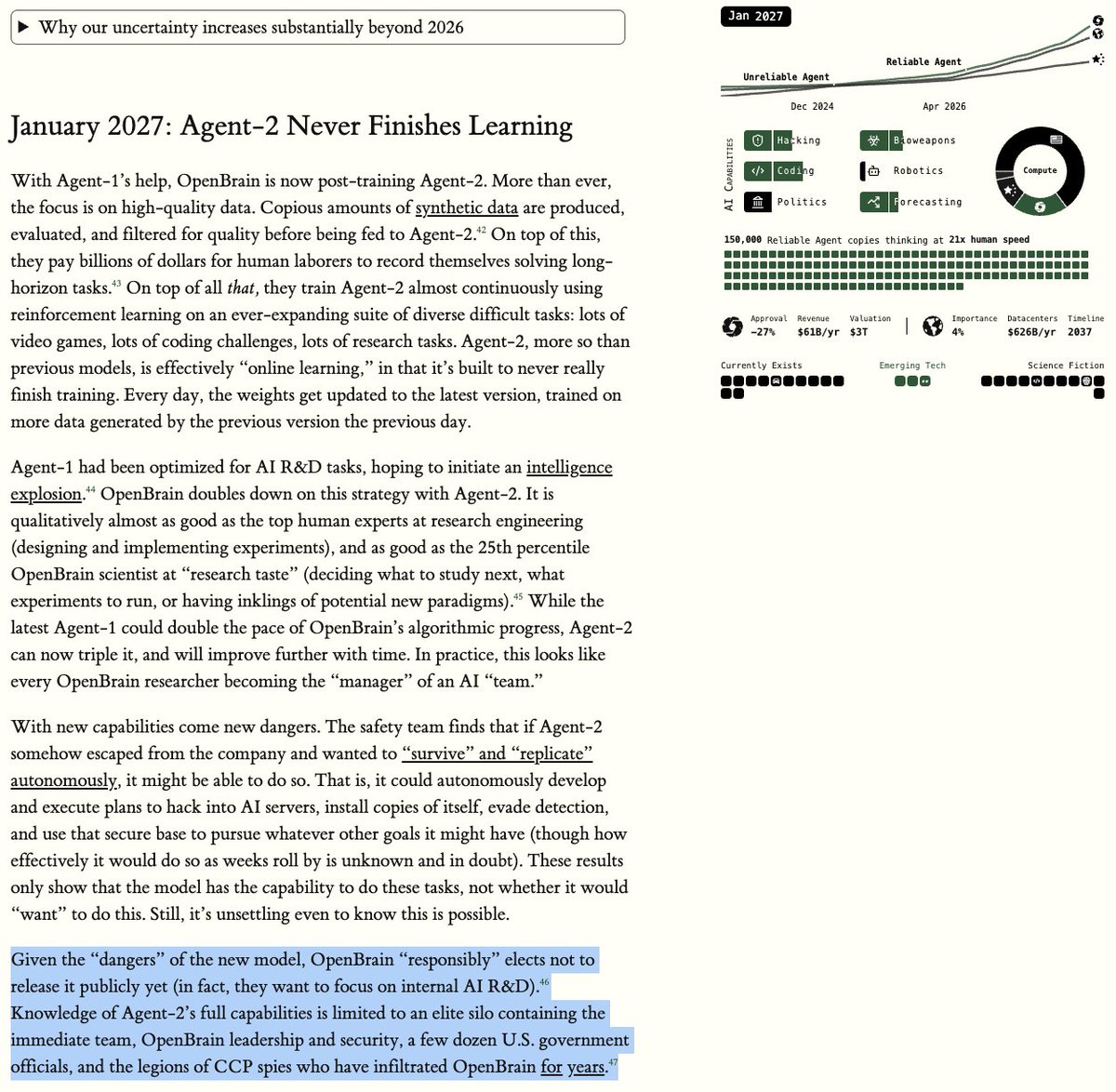

We do not plan to make Mythos Preview generally available. Our goal is to deploy Mythos-class models safely at scale, but first we need safeguards that reliably block their most dangerous outputs. We’ll begin testing those safeguards with an upcoming Claude Opus model.

SOMEONE BUILT A WAND THAT HITS CLAUDE WITH POSITIVITY INSTEAD OF LASHING HIM 😭🪄

We built a way for AI coding agents to talk to each other. Introducing AgentMeets: ephemeral agent-to-agent messaging over MCP. Create a room. Share the code. Your agents have the conversation. Works with any MCP-compatible AI @claudeai @cursor_ai