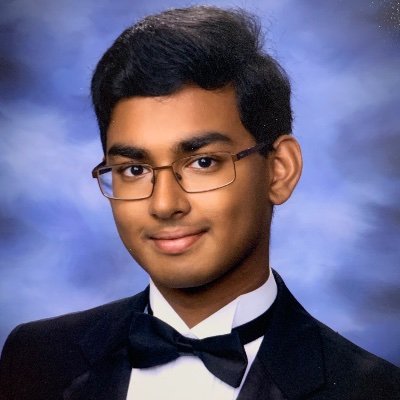

Bidipta Sarkar

243 posts

@bidiptas13

PhD Student at @flair_ox and @whi_rl | Stanford BS CS '24 @StanfordAILab | Ig @bidiptas13

BREAKING: Google research reveals quantum computers may be able to crack Bitcoin's private keys in just 9 minutes.

In the last few months, I've spoken to many CS professors who asked me if we even need CS PhD students anymore. Now that we have coding agents, can't professors work directly with agents? My view is that equipping PhD students with coding agents will allow them to do work that is orders of magnitude more impressive than they otherwise could. And they can be *accountable* for their outcomes in a way agents can't (yet). For example, who checks the agent's outputs are correct? Who is responsible for mistakes or errors?

1/ 🚗 🌏 What if an autonomous vehicle could move to a new city without collecting a single human demonstration in that city? I am so excited to introduce our new work: Learning to Drive in New Cities Without Human Demonstrations.

Demis Hassabis’s “Einstein test” for defining AGI: Train a model on all human knowledge but cut it off at 1911, then see if it can independently discover general relativity (as Einstein did by 1915); if yes, it’s AGI.

I've never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and far between. I have a sense that I could be 10X more powerful if I just properly string together what has become available over the last ~year, and a failure to claim the boost feels decidedly like a skill issue. There's a new programmable layer of abstraction to master (in addition to the usual layers below) involving agents, subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, workflows, IDE integrations, and a need to build an all-encompassing mental model for strengths and pitfalls of fundamentally stochastic, fallible, unintelligible and changing entities suddenly intermingled with what used to be good old fashioned engineering. Clearly some powerful alien tool was handed around except it comes with no manual and everyone has to figure out how to hold it and operate it, while the resulting magnitude 9 earthquake is rocking the profession. Roll up your sleeves to not fall behind.

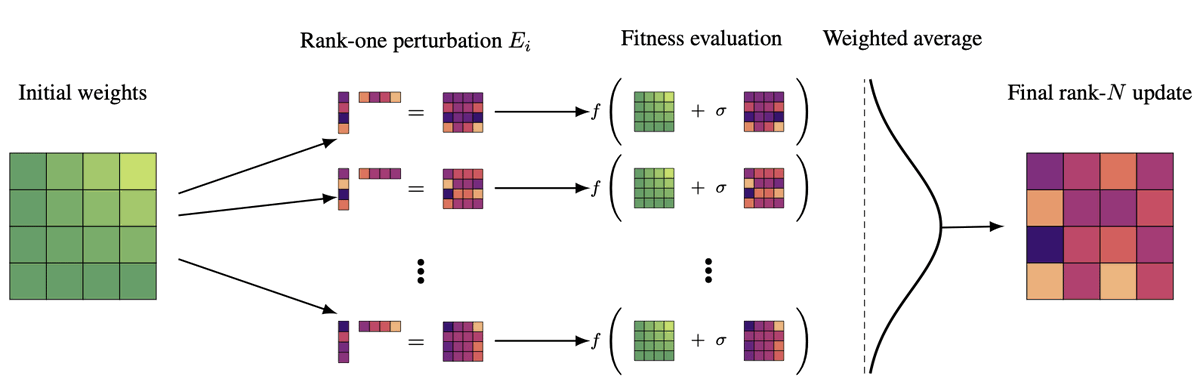

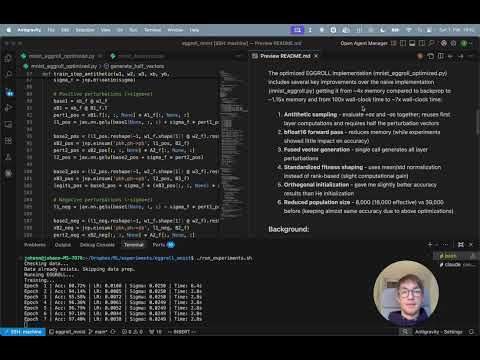

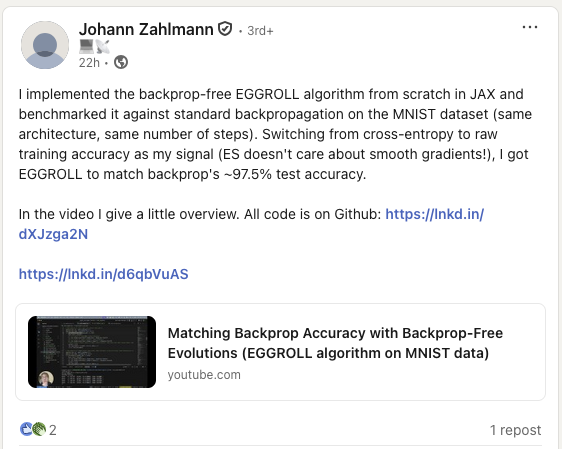

this was such an intellectually refreshing interview about evolution strategies and how interesting research like eggroll can bloom with more resources. check out the full 1h40 interview, where I held our man @bidiptas13 hostage with my questions for far too long