Yanping Huang


How many languages can we support with Machine Translation? We train a translation model on 1000+ languages, using it to launch 24 new languages on Google Translate without any parallel data for these languages. arxiv.org/abs/2205.03983 Technical 🧵 below: 1/18

Read all about Task-level Mixture-of-Experts (TaskMoE), a promising step towards efficiently training and deploying large models, with no loss in quality and with significantly reduced inference latency ↓ goo.gle/3I5ulXj
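
Why task-level routing can cut serving cost: if the router's choice depends only on the task (for example, the language pair), every token of that task reaches the same experts, so the unused experts can be dropped before deployment. This is a minimal sketch under that assumption only. The toy scalar experts, the fixed `TASK_TO_EXPERTS` table, and all function names are hypothetical; in the actual model the experts are neural layers and the router is trained rather than hand-written.

```python
from typing import Callable, Dict, List

Expert = Callable[[float], float]

# Hypothetical toy experts: real experts are feed-forward layers.
EXPERTS: List[Expert] = [
    lambda x: x + 1.0,  # expert 0
    lambda x: x * 2.0,  # expert 1
    lambda x: x - 3.0,  # expert 2
    lambda x: x * x,    # expert 3
]

# Hypothetical task-level routing table: the choice depends only on the
# task ID, so every token of a task is sent to the same two experts.
TASK_TO_EXPERTS: Dict[str, List[int]] = {
    "en-fr": [0, 1],
    "en-de": [2, 3],
}


def apply_experts(tokens: List[float], experts: List[Expert]) -> List[float]:
    """Average the chosen experts' outputs per token (equal weights for simplicity)."""
    return [sum(e(t) for e in experts) / len(experts) for t in tokens]


def moe_layer(tokens: List[float], task: str) -> List[float]:
    """Full model: look up the task's experts among all of them."""
    return apply_experts(tokens, [EXPERTS[i] for i in TASK_TO_EXPERTS[task]])


def extract_task_subnetwork(task: str) -> List[Expert]:
    """Serving: keep only the experts this task can ever be routed to."""
    return [EXPERTS[i] for i in TASK_TO_EXPERTS[task]]


tokens = [1.0, 2.0]
sub = extract_task_subnetwork("en-fr")
print(moe_layer(tokens, "en-fr"))  # [2.0, 3.5]
print(apply_experts(tokens, sub))  # [2.0, 3.5], using 2 of 4 experts
```

With token-level routing, different tokens of the same task may hit any expert, so the whole layer must stay deployed; in this sketch the extracted subnetwork gives identical outputs with half the experts.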

Not gonna amplify that other tweet but I’ll instead point to this one. Speaking as a relatively newish member, I’ll say that I’ve found @uwcse to be a supportive place for women, w/ a strong commitment to diversity and inclusion from our leadership.

Today is the start of UW’s Winter Quarter, and #UWAllen is excited to welcome our students back. Without the benefit of a long break given our quarter system, instructors spent the holiday weekend preparing for the challenge of conducting the 1st week remotely due to Omicron. 1/5

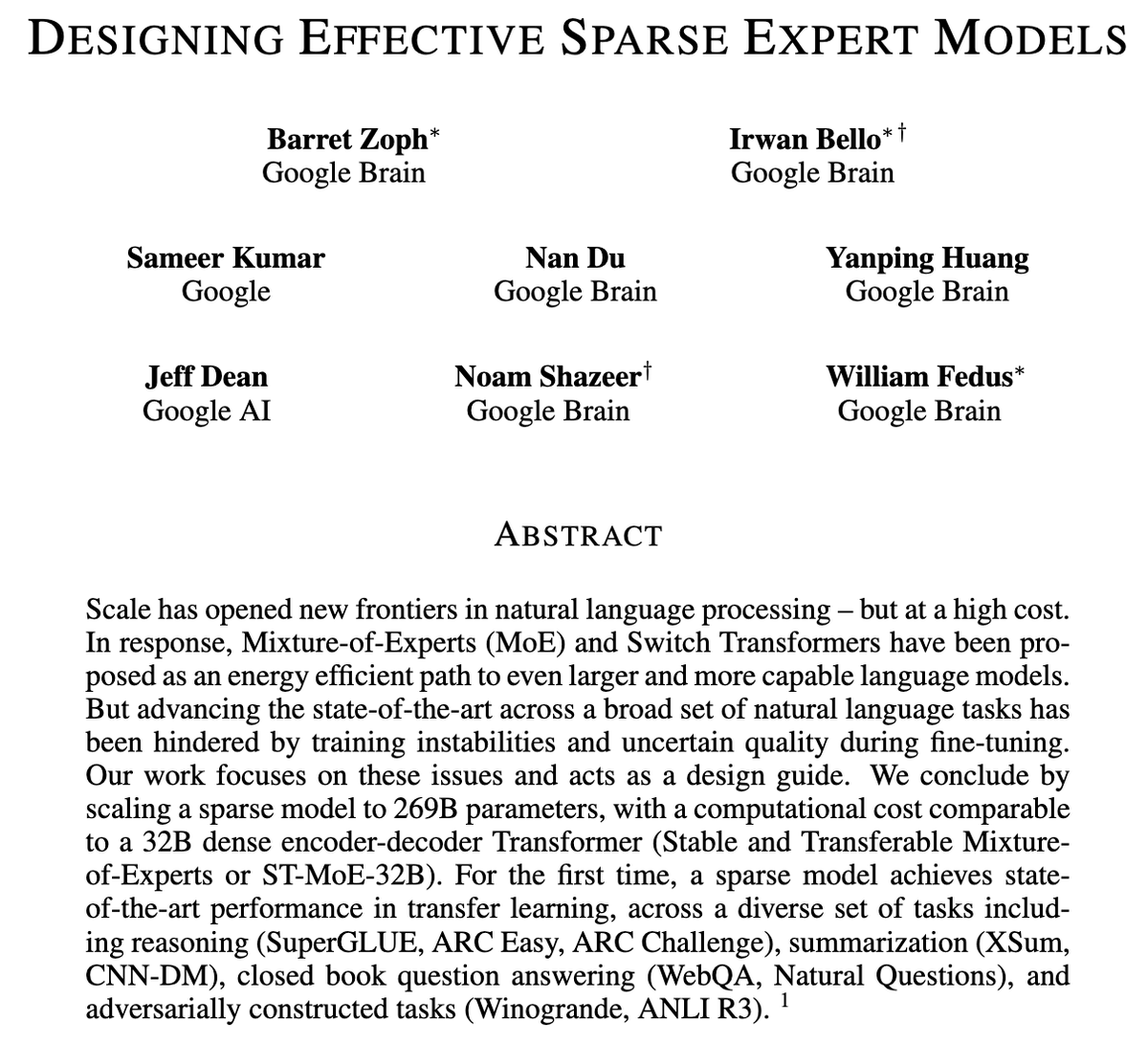

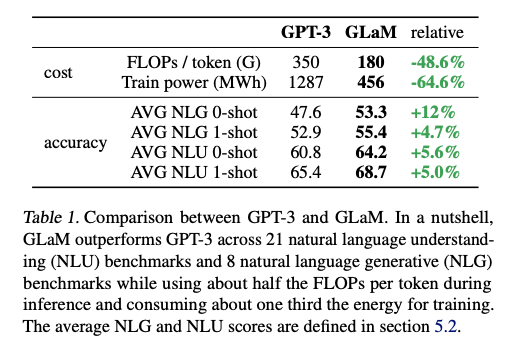

GLaM: Efficient Scaling of Language Models with Mixture-of-Experts. A 1.2T-parameter model that has better average zero- and one-shot results than GPT-3 while using only ⅓ of the energy for training. arXiv: arxiv.org/abs/2112.06905 Blog: goo.gle/3dBNLWQ

We wrote a blog post to unveil Google's model parallelism engine, which enables efficient training of large-scale models like GShard-M4, LaMDA, and BigSSL.

Today we introduce the Generalist Language Model (GLaM), a sparsely activated model that achieves better overall performance than GPT-3 on 29 few-shot #NaturalLanguageProcessing benchmark tasks with just a third of GPT-3's training energy cost. Learn more on the blog ↓ goo.gle/3dBNLWQ
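
"Sparsely activated" means each token is processed by only a few of the layer's experts, so compute per token stays small even as the total parameter count grows. GLaM routes each token to two experts; the following is a minimal sketch of top-2 gating, assuming toy scalar experts and hand-picked router logits. The expert functions, logits, and names are hypothetical; real experts are feed-forward layers and the router is learned.

```python
import math
from typing import Callable, List, Tuple

Expert = Callable[[float], float]


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top2_moe(x: float, router_logits: List[float],
             experts: List[Expert]) -> Tuple[float, List[int]]:
    """Route x to its two highest-probability experts; the others never run."""
    probs = softmax(router_logits)
    top2 = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:2]
    norm = sum(probs[i] for i in top2)  # renormalize gate weights over the chosen pair
    out = sum(probs[i] / norm * experts[i](x) for i in top2)
    return out, top2


# Hypothetical toy layer: 8 experts, expert k multiplies its input by k + 1.
experts: List[Expert] = [lambda x, k=k: x * (k + 1) for k in range(8)]
logits = [0.1, -1.0, 0.3, 2.0, 0.0, 2.0, -0.5, 0.2]

out, used = top2_moe(2.0, logits, experts)
print(used, out)  # [3, 5] 10.0
```

Only 2 of the 8 expert functions are evaluated for this token, which is the source of the compute savings relative to a dense layer with the same number of parameters.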

Today we present an open-source system to scale neural networks — often critical for improving model performance — by automatically parallelizing the model across devices, which enables researchers to more efficiently build and train large-scale models. goo.gle/3EEwloj
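
One common way to parallelize a model across devices is to shard a layer's weight matrix. This is a minimal sketch of a single such pattern, assuming "devices" simulated as plain Python lists: each device holds a column slice of `w`, computes its slice of `x @ w` locally, and the slices are concatenated, reproducing the unsharded result. It does not use the open-source system's API or show how that system chooses shardings; all names here are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain (unsharded) matrix product a @ b."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def shard_columns(w: Matrix, num_devices: int) -> List[Matrix]:
    """Split w column-wise into equal slices, one per simulated device."""
    cols = len(w[0])
    if cols % num_devices:
        raise ValueError("number of columns must divide evenly across devices")
    step = cols // num_devices
    return [[row[d * step:(d + 1) * step] for row in w] for d in range(num_devices)]


def sharded_matmul(x: Matrix, w_shards: List[Matrix]) -> Matrix:
    """Each device multiplies x by its own slice; output slices are then concatenated."""
    partials = [matmul(x, shard) for shard in w_shards]  # one product per "device"
    return [[v for p in partials for v in p[i]] for i in range(len(x))]


x = [[1.0, 2.0]]
w = [[1.0, 2.0, 3.0, 4.0],
     [5.0, 6.0, 7.0, 8.0]]
print(sharded_matmul(x, shard_columns(w, 2)))  # [[11.0, 14.0, 17.0, 20.0]]
print(matmul(x, w) == sharded_matmul(x, shard_columns(w, 4)))  # True
```

On real hardware the final concatenation corresponds to a cross-device collective (such as an all-gather), and each device only ever stores its own slice of `w`, which is what lets models larger than one device's memory be trained.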