Blaine Dillingham

Accelerationist AI policy is losing ground, and the current strategy does not give moderates a pro-AI case for 2028. Without it, they'll get pulled apart by anti-AI sentiment. Today, I argue accelerationism needs to change, or its defeat will make AI policy worse for everyone.

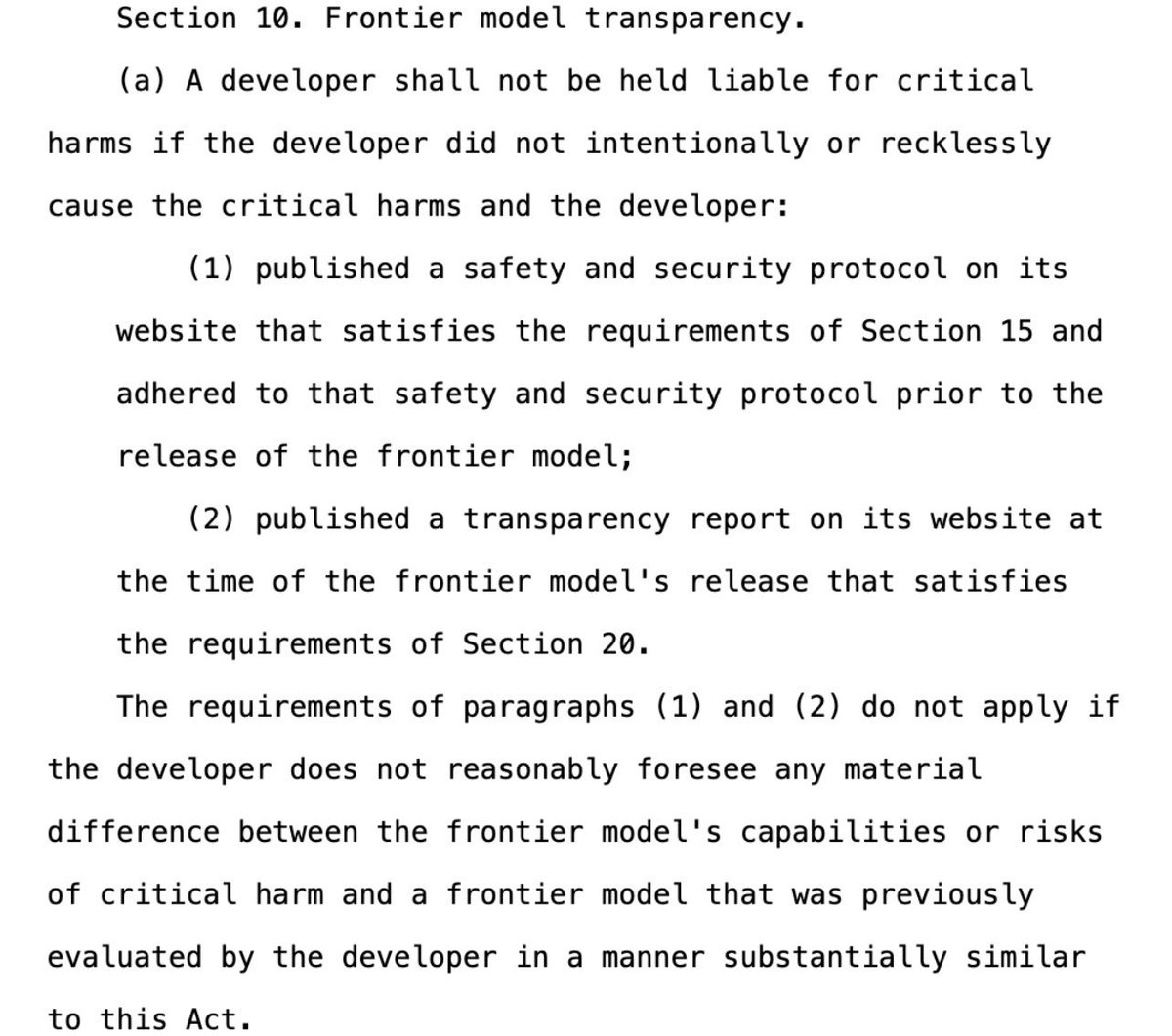

@blainedilli @MarshaBlackburn On model evaluation, the Act proposes creating a new program at DOE for predeployment testing. Beyond being duplicative of CAISI's eval capacity, requiring companies to transfer proprietary data and model weights to a gov't agency introduces serious security risks.

Book review 📚 Artificial-intelligence models will supposedly take over the world, but AI innovator Luc Julia tells Nature that they’re little more than glorified pocket calculators. go.nature.com/4lPpuPd