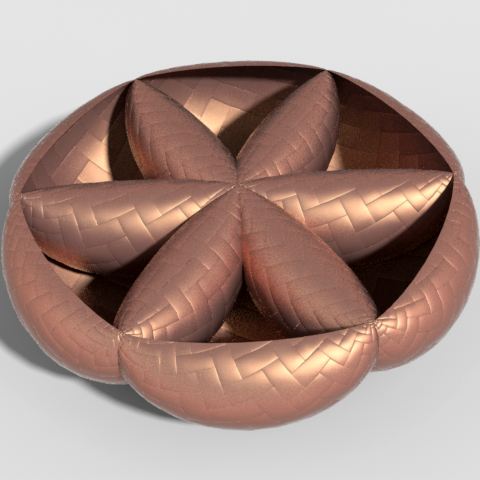

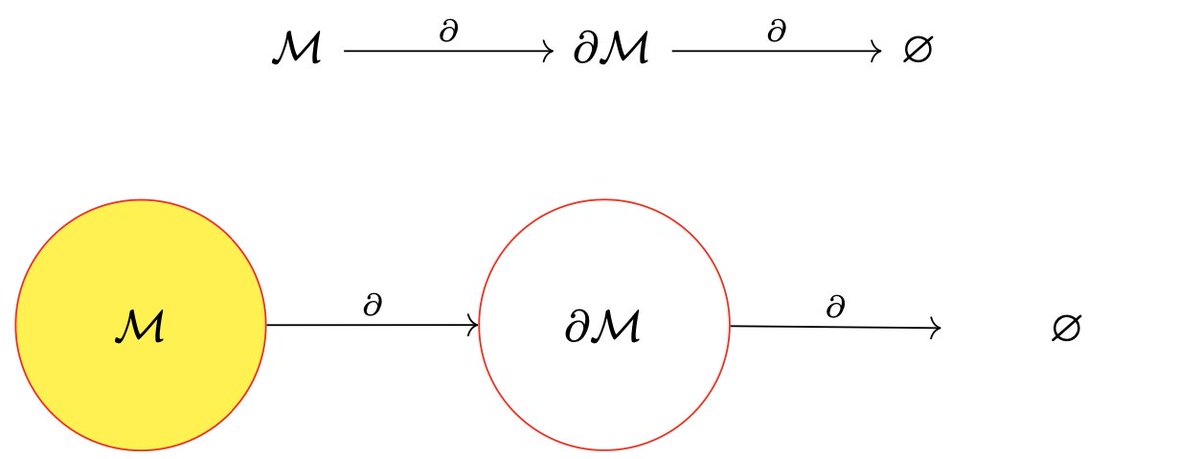

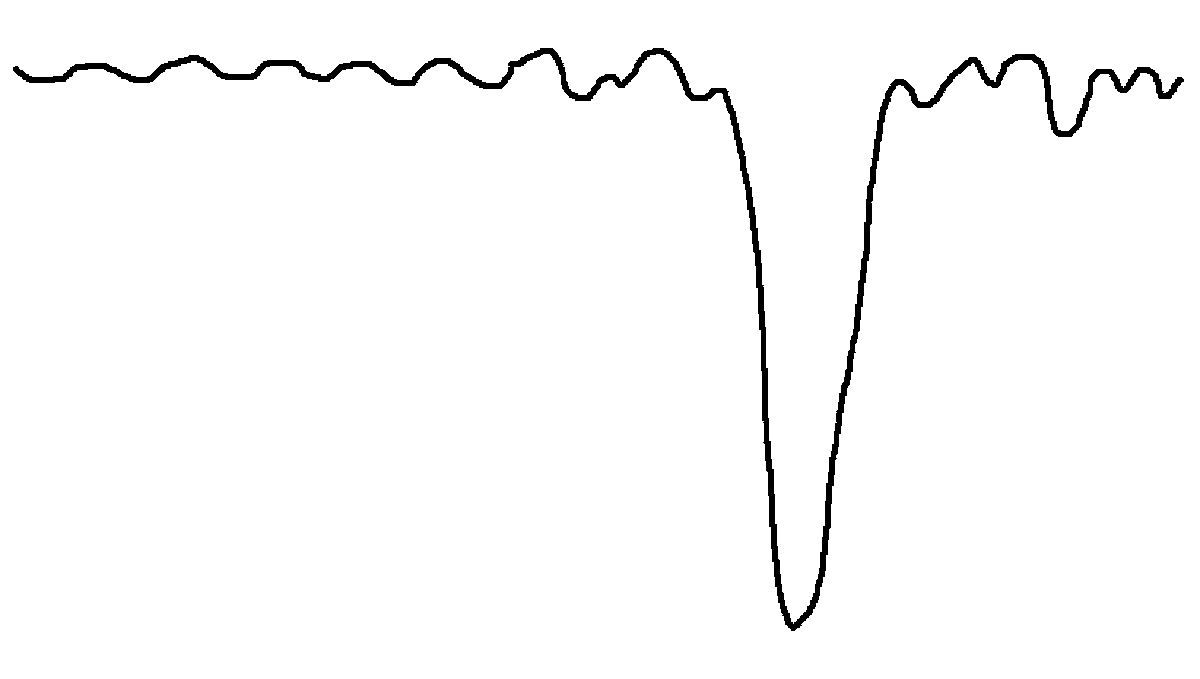

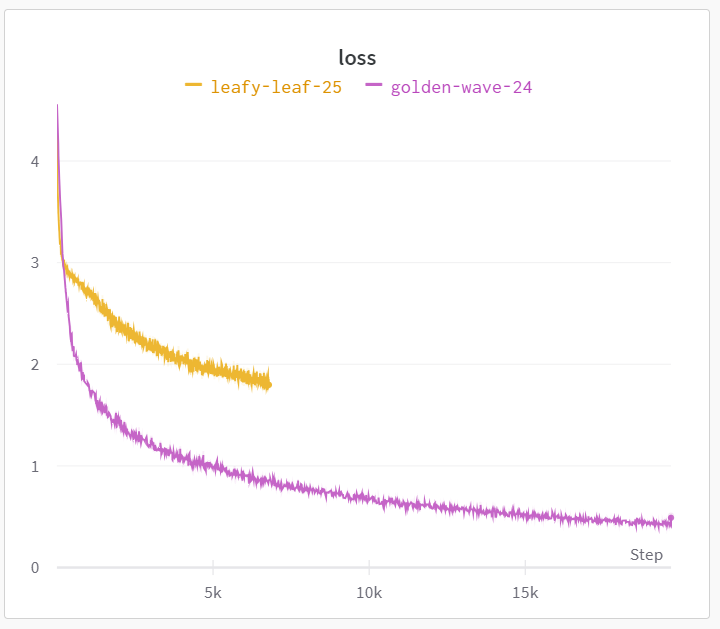

Eka means unity: “one” in Sanskrit and “first” in Finnish. We’re building intelligence for the physical world in its native language: forces.

Until now, robotics faced a tradeoff between generality and speed, but the real world requires both. Robotics also faced a data problem. Our Vision–Force–Action (VFA) model, the first of its kind, breaks the generality-speed tradeoff and the data barrier. It’s a new foundation uniting performance, generality, and safety to put capable robots in everyone’s hands.

Today, I am excited to share our journey of pushing robots beyond human limits. Today, dexterity becomes scalable. Today, I welcome you to the Era of Eka.

Co-founded with @haarnoja, and so thrilled and grateful to be working with a dream team at @EkaRobotics.

Learn more: ekarobotics.com