Join the team behind Gemini 2.5 as they dive into the model’s thinking and coding advancements. 🎙️ Space starts at 12:20pm PT. Drop your questions below. x.com/i/spaces/1MYxN…

Sebastian Borgeaud

36 posts

@borgeaud_s

Research Engineer @GoogleDeepMind. Lead for Gemini pre-training.

Gemini 2.5 Pro #1 across ALL categories, tied #1 with Grok-3/GPT-4.5 for Hard Prompts and Coding, and edged out across all others to take the lead 🏇🏆

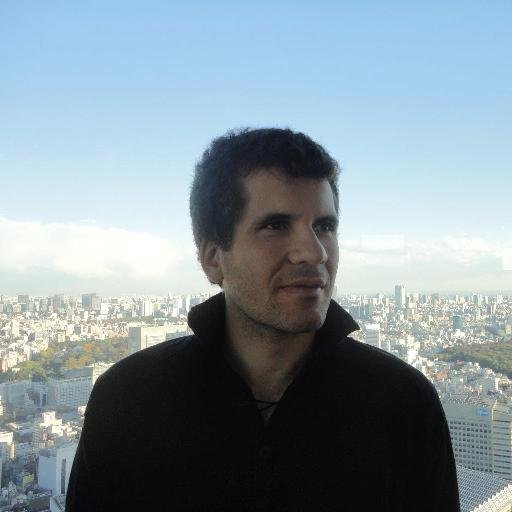

2.0 Pro Experimental is our best model yet for coding and complex prompts, refined with your feedback. 🤝 It has a better understanding of world-knowledge and comes with our largest context window yet of 2 million tokens - meaning it can analyze large amounts of information.

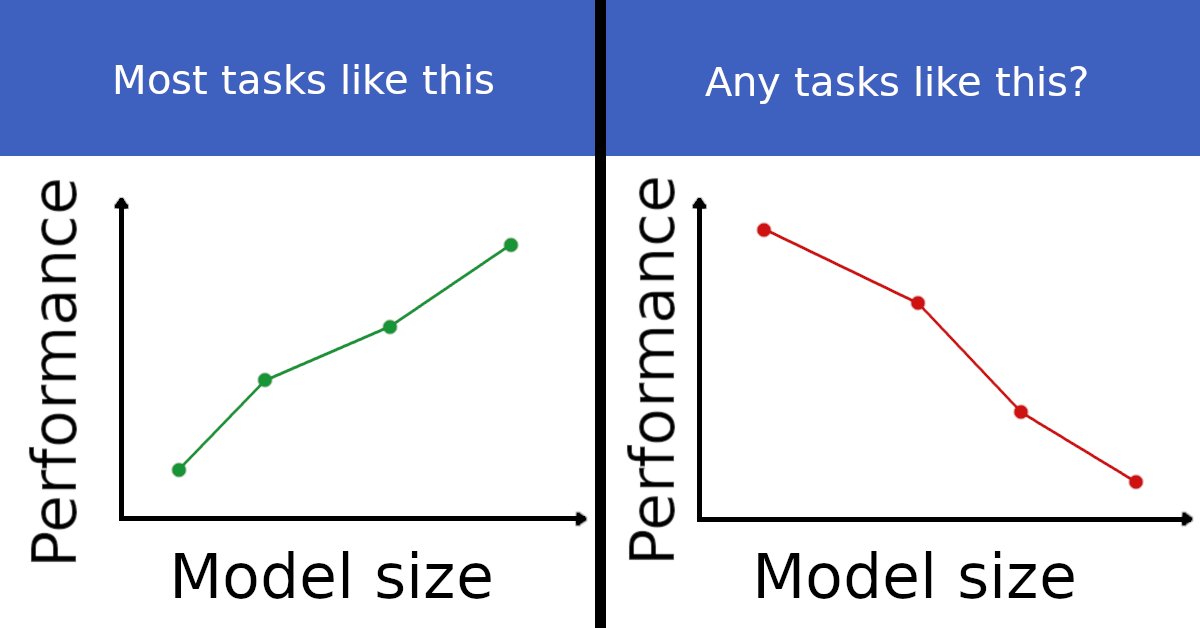

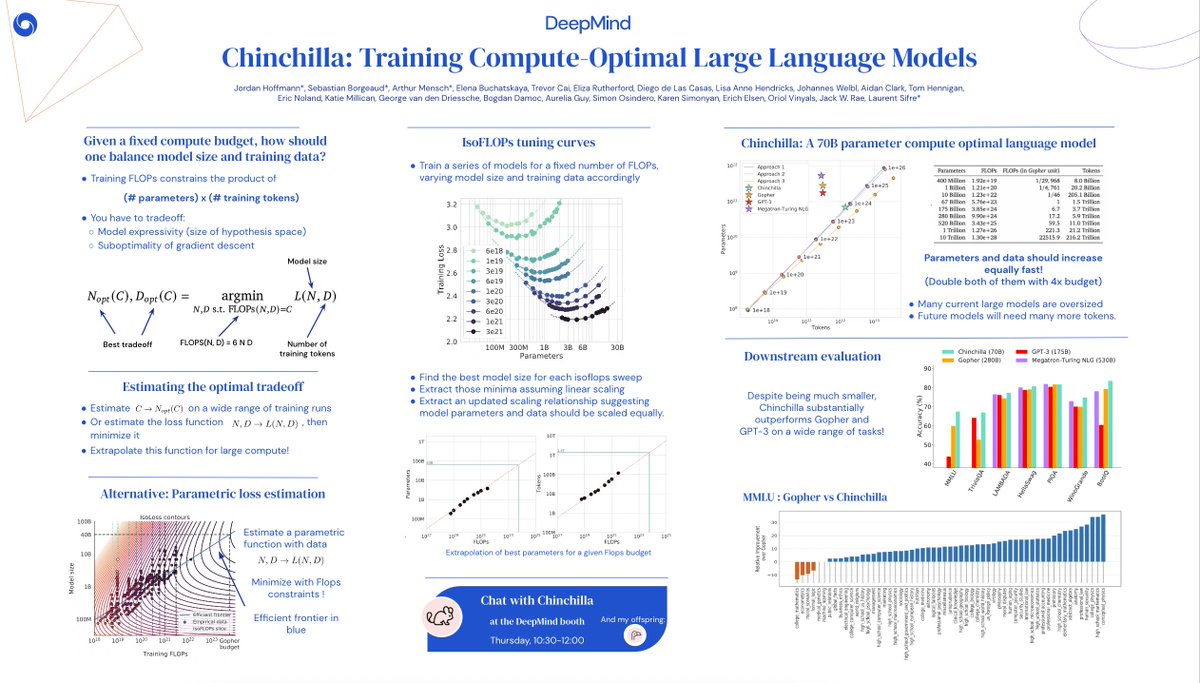

The Chinchilla scaling paper by Hoffmann et al. has been highly influential in the language modeling community. We tried to replicate a key part of their work and discovered discrepancies. Here's what we found. (1/9)

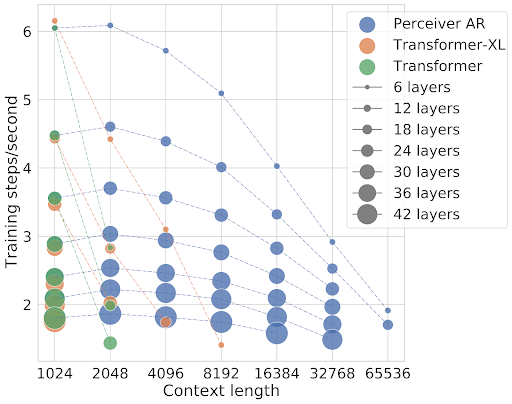

In December we began the Gemini Era, and we’ve continued to make relentless progress since. Today we’re thrilled to introduce the next generation: Gemini 1.5 - hugely enhanced performance, highly efficient architecture & long-context length breakthrough blog.google/technology/ai/…

And now we’re about to present our poster! Stand 304, right by the entrance! Can’t miss us, any and all questions welcome :)