Bowei Chen

28 posts

@bowei_chen_19

Ph.D. student at UW CSE @UwRealityLab, M.S. at CMU.

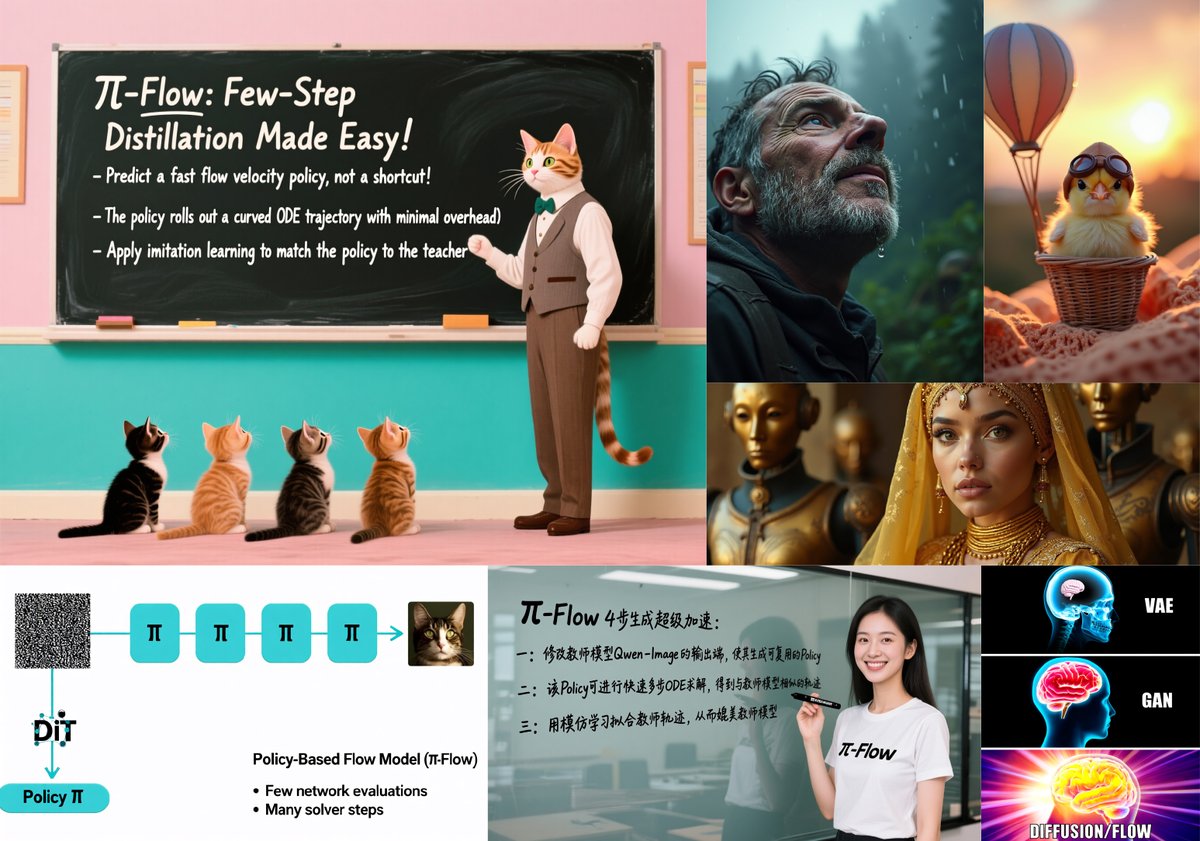

Too many REPA / RAE / representation alignment papers lately? I was lost too, so I wrote a blog post that organizes the space into phases and zooms in on what actually matters for general/molecular ML. Curious what folks think, link below! 🔗 Blog: kdidi.netlify.app/blog/ml/2025-1…

We found that visual foundation encoders can be aligned to serve as tokenizers for latent diffusion models in image generation! Our new paper introduces a tokenizer training paradigm that produces a semantically rich latent space, improving diffusion model performance🚀🚀.

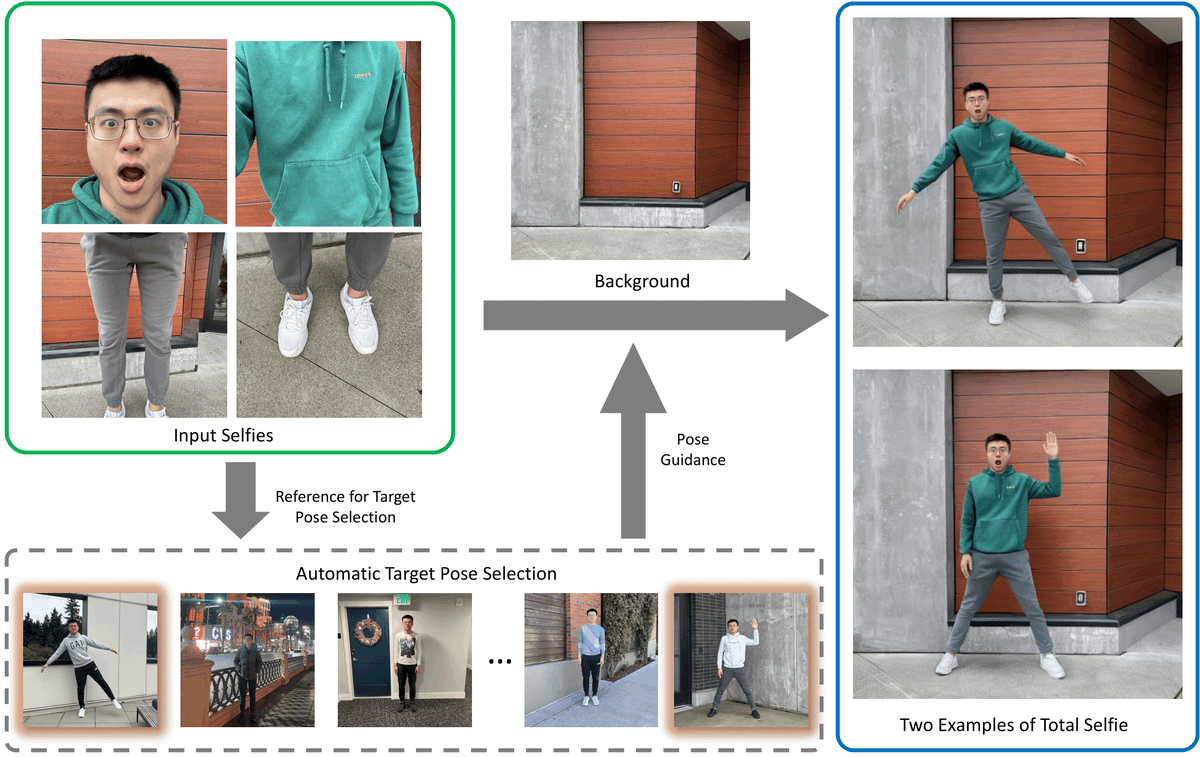

#CVPR2024 Selfies captured at arm's length only show part of your body. What if, instead, you could get the full-body photo that someone else would take of you in the scene? We present Total Selfie, which generates full-body selfies from photographs originally taken at arm's length. 1/n