breandan

MCP sucks, honestly. It eats too much context window, you have to toggle it on and off, and the auth sucks. I got sick of Claude in Chrome via MCP and vibe coded a CLI wrapper for Playwright tonight in 30 minutes, only for my team to tell me Vercel already did it, lmao. But it worked 100x better and was like 100 LOC as a CLI.

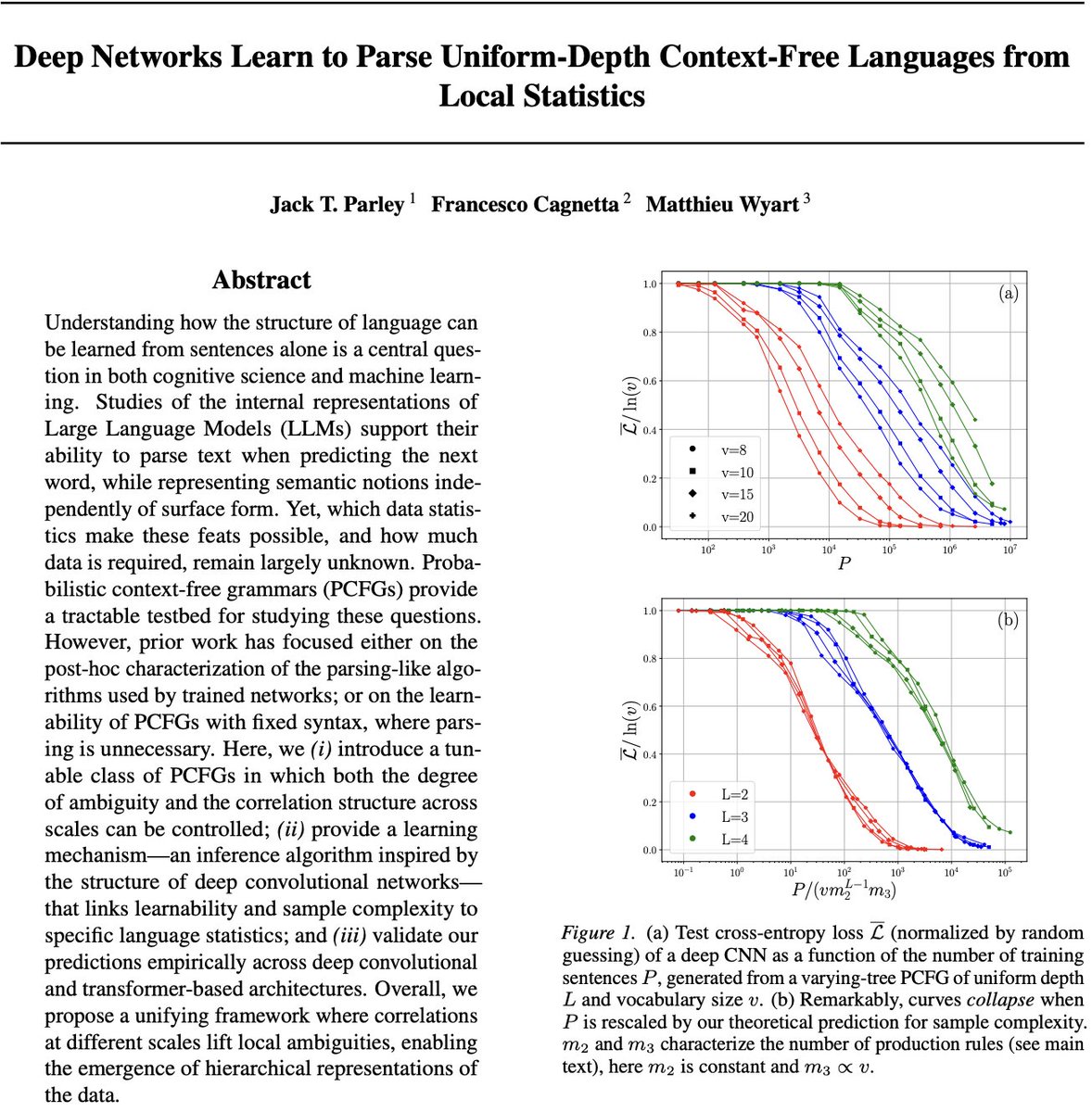

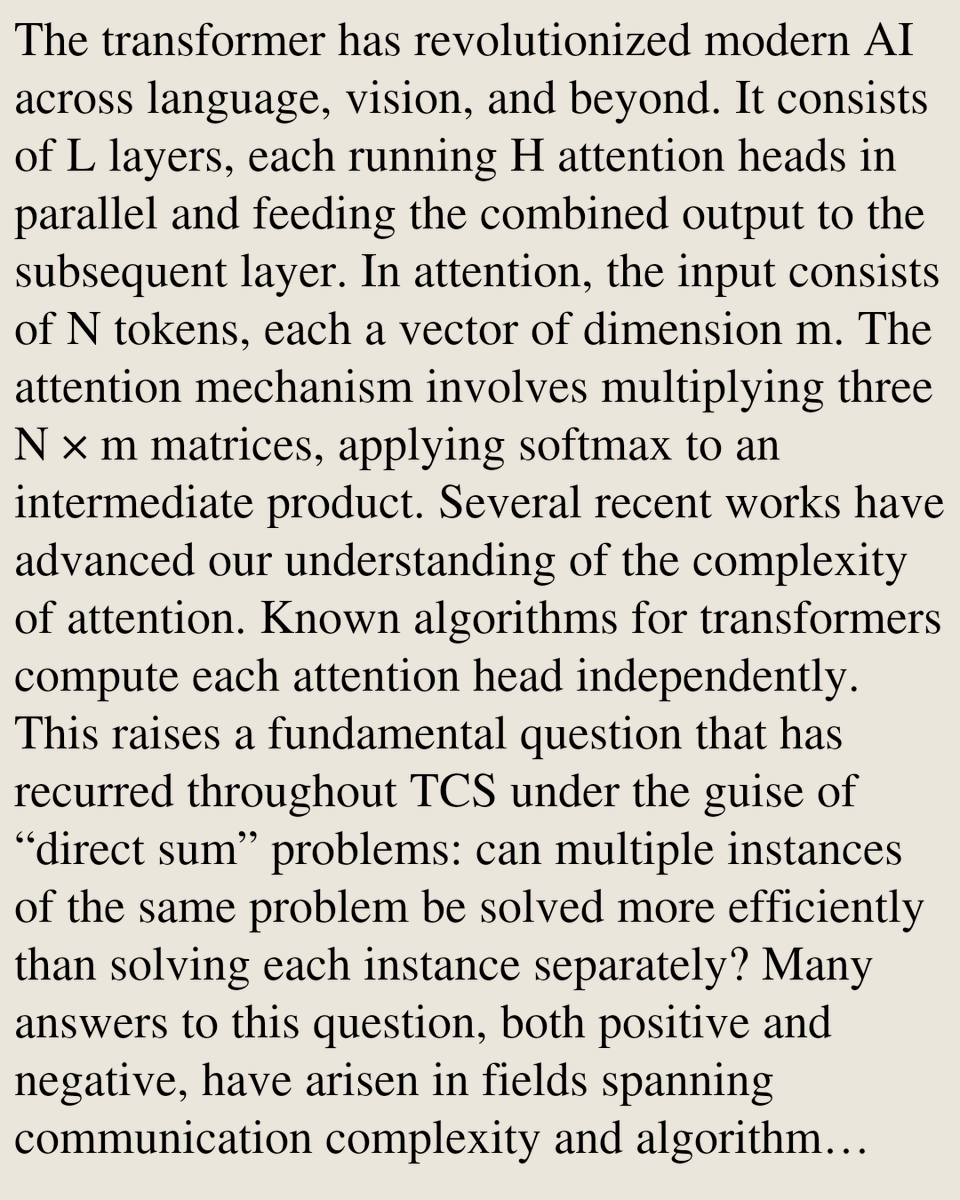

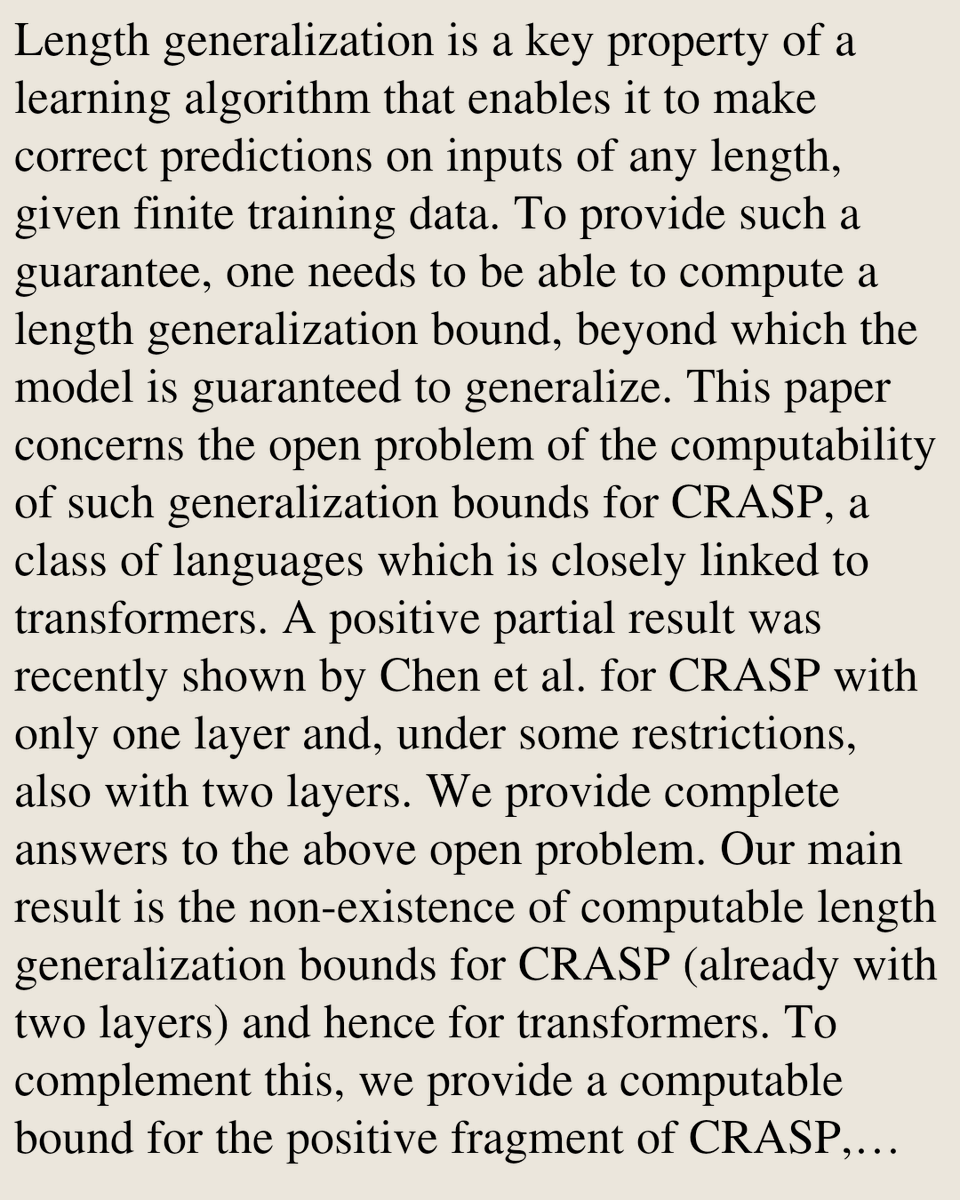

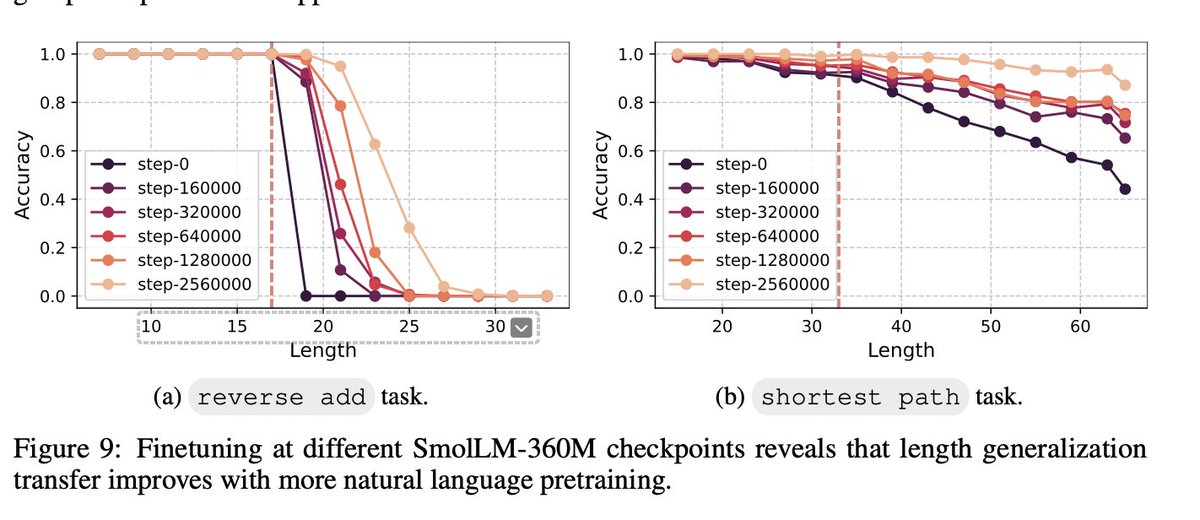

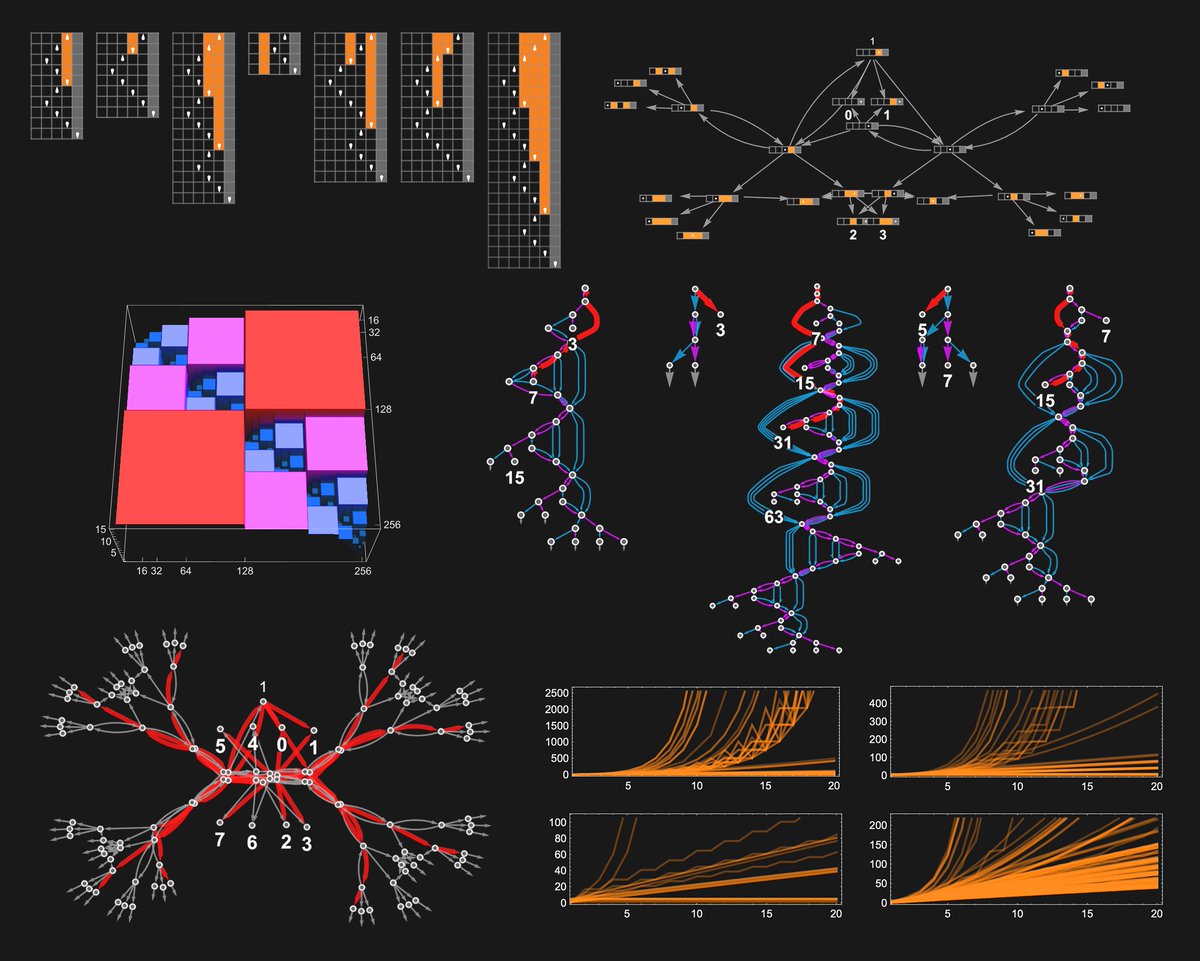

Announcing the first Workshop on Formal Languages and Neural Networks (FLaNN) 🍮! We invite the submission of abstracts for posters that discuss the formal expressivity, computational properties, and learning behavior of neural network models, including large language models.

Humanism sans theism is inconsistent. A humanism which puts its faith in man and his deeds alone is inconsistent, for left to our own devices, mankind tends towards self-destruction. True humanitarianism is to seek first the Creator who so loved the world he became man.