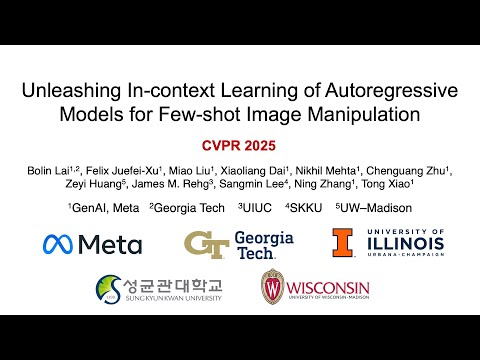

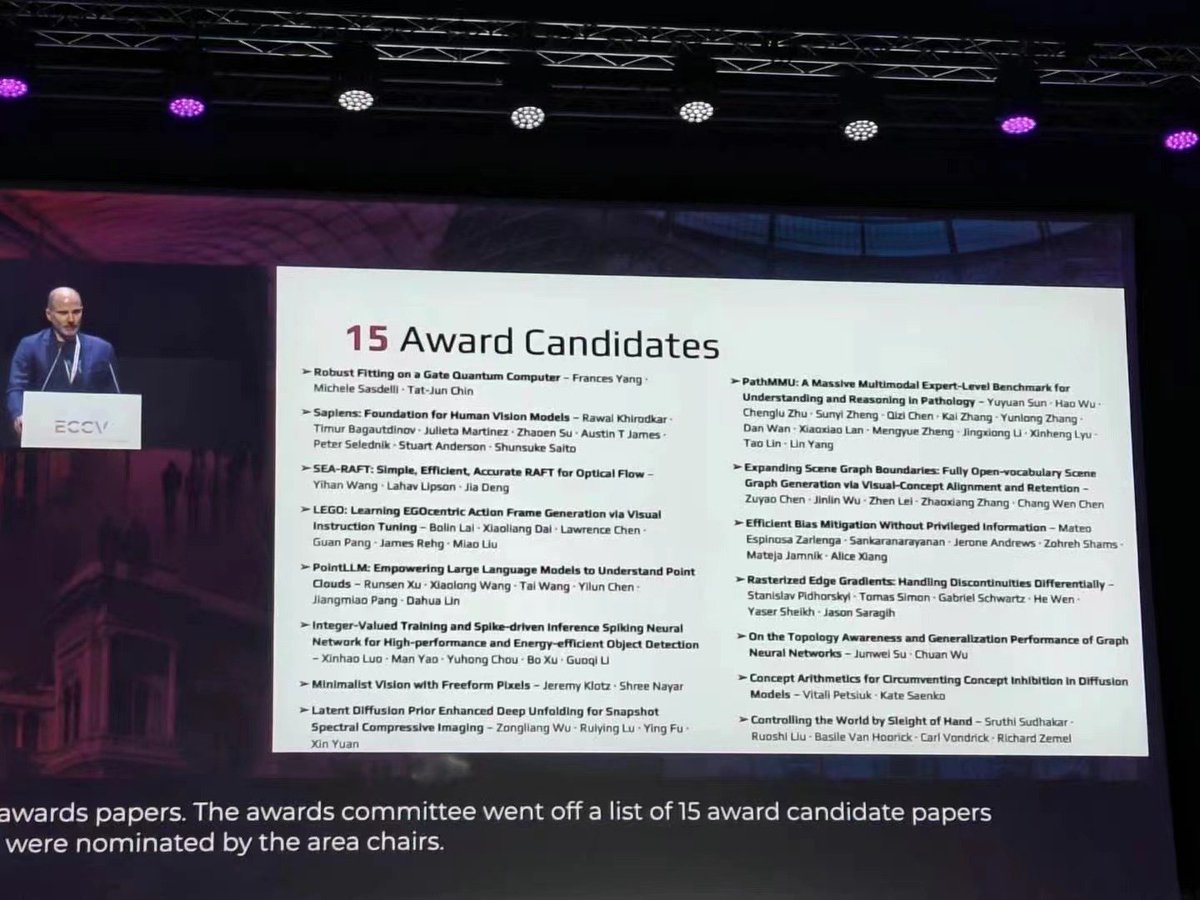

LEGO can show you how it's done! In new @eccvconf work from @bryanislucky, a generative tool can produce images to accompany step-by-step instructions from just a single first-person photo uploaded with the prompt. #wecandothat🐝 @GTResearchNews b.gatech.edu/47RT3bN