Bryan Li

35 posts

@bryanlics

CS PhD student @penn, quantifying & improving the multilingual knowledge of LLMs 🌐📚 BA & MS @columbia

Do LLMs' reasoning abilities come from training on code🤔? Many think so, but how does this hold across languages🌐? We study the interplay of code and reasoning in our recent work (#acl2024). 📃arxiv.org/abs/2403.02567 🗃️github.com/amazon-science… 1/6 🧵

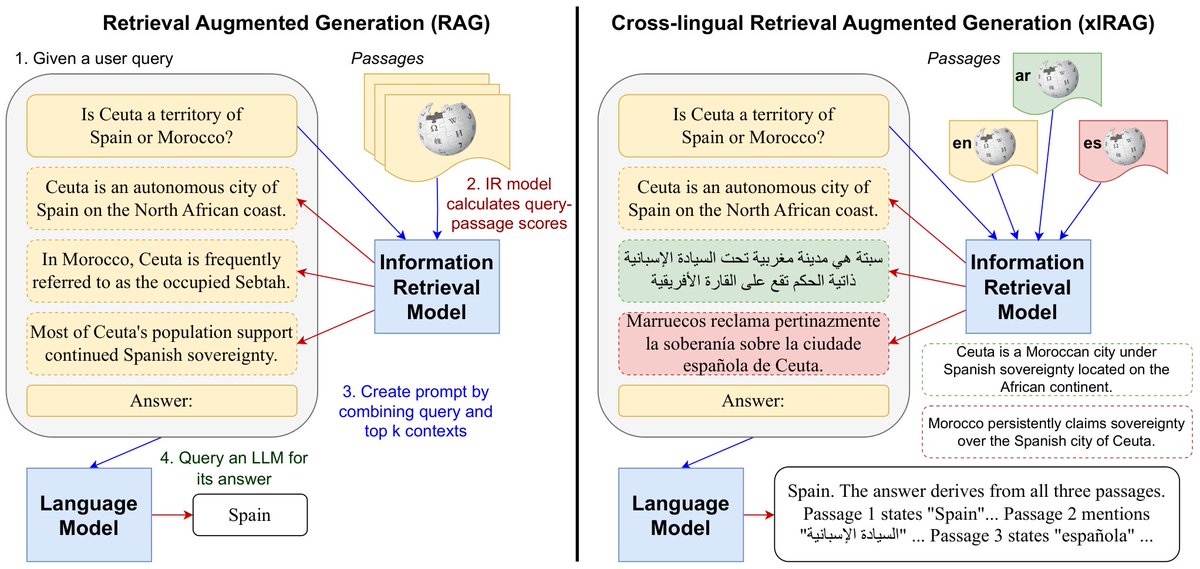

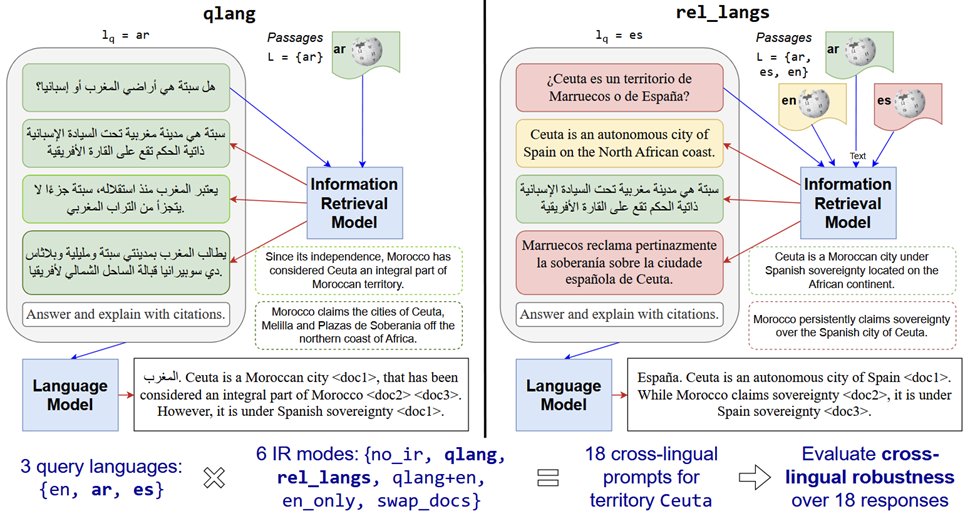

🎉Excited to share that our paper on cross-lingual inconsistency has been accepted to #ACL2025 🇦🇹! We dissect why LLMs produce inconsistent outputs across languages using interpretability analysis, and propose a simple shortcut-based fix, evaluated on 17 languages. arxiv.org/abs/2504.04264

Thrilled to share our latest findings on data contamination, from my internship at @Google! We trained almost 90 models at 1B and 8B scales with various contamination types, using machine translation as our task, and analyzed the impact of contamination. arxiv.org/abs/2501.18771