Ben Snodin

618 posts

@bsnodin

Making sense of AI | Formerly @AISecurityInst, @RethinkPriors | 5 years quant finance | nanotech PhD

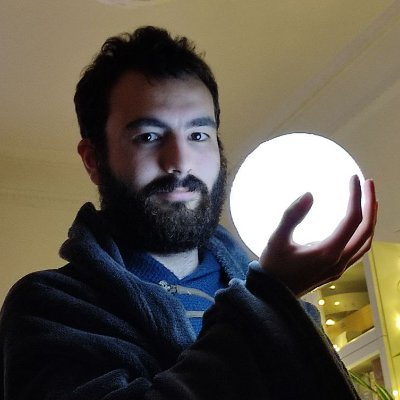

We estimate that Claude Opus 4.1 has a 50%-time-horizon of around 1 hr 45 min (95% confidence interval of 50 to 195 minutes) on our agentic multi-step software engineering tasks. This estimate is lower than the current highest time-horizon point estimate of around 2 hr 15 min.

Our new papers examine the research wave surrounding safety frameworks. One explores emerging practices, and the other introduces safety cases for implementation.📖🔐

Three Observations: blog.samaltman.com/three-observat…

Today, we are publishing the first-ever International AI Safety Report, backed by 30 countries and the OECD, UN, and EU. It summarises the state of the science on AI capabilities and risks, and how to mitigate those risks. 🧵 Link to full Report: assets.publishing.service.gov.uk/media/679a0c48… 1/16

My entire feed today is basically: “DeepSeek R1 is a huge moment that validates everything I previously thought about AI and all my existing policy and technical positions” 🙃