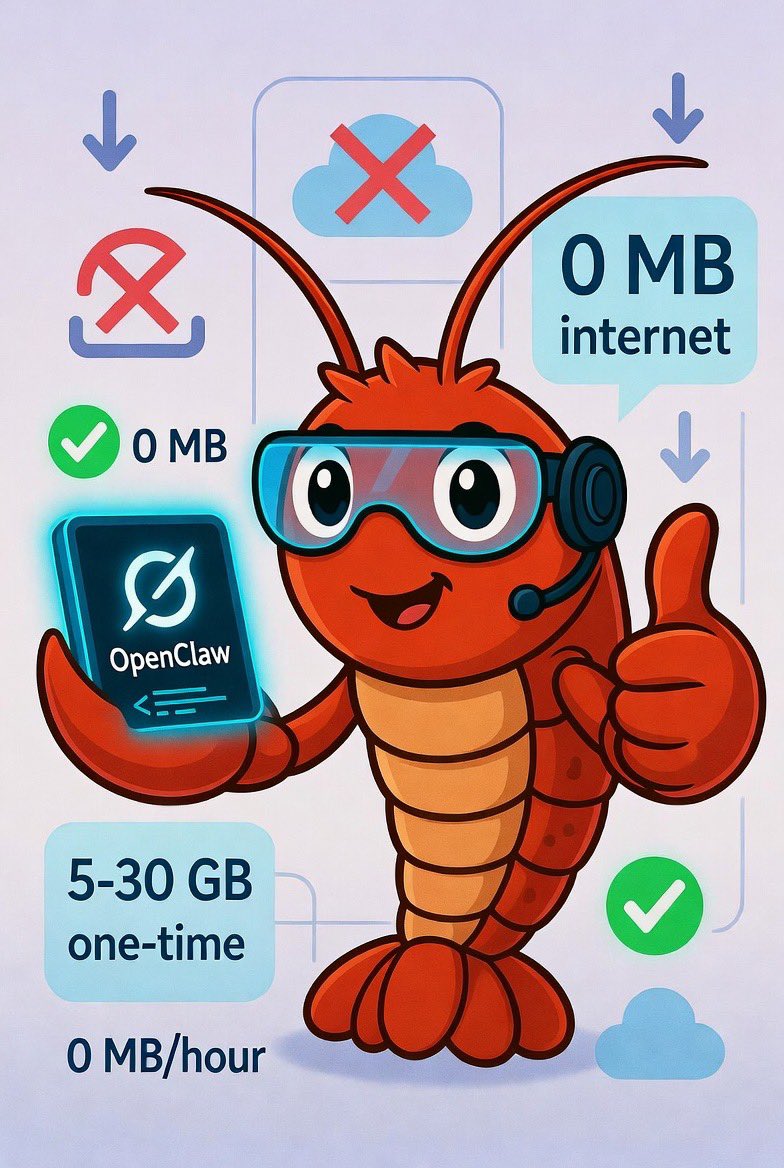

@HappyGezim @JohnnyNel_ @majorgeeks spot on. privacy is just one side of the coin. the real win is the latency and piping local logs directly into the context window without waiting for a cloud api to decide if it's 'safe'. i've been running orchestration on a local rack for months, never going back
