Pinned Tweet

Cartyisme

1.2K posts

@cartyisme

artist, idiot, shillosopher · @OpenSea employee · @ART101NFT founder · @w0wn3r0 progenitor · all my views are my own

California, USA · Joined November 2017

1.4K Following · 12.3K Followers

0.205 ETH refunded to the wallets affected by failed trait swaps during and after mint. Swapper hardened throughout the past 2 days, ~95% of issues resolved. Running smoothly.

Verification: etherscan.io/address/0x41bf…

@phantoneth have you tried: x.com/frankythefrog/…

a hard refresh and a wallet update also tend to fix it

Franky the Frog@frankythefrog

If your NFT transfers are failing, it is likely an EIP-7702 delegation issue. A widespread error affecting holders and teams across the entire space. We’ve been looking for a fix and found that revoking this function is the best solution for now. Check the guide below to fix your wallet and get back to seamless transfers. Share it with anyone running into the same issue.

@NFT_Arts_ETH nah, swappin' and fittin' em how u want is the alpha. onchain art is the alpha.

@phantoneth @cartyisme Omg😞 I thought I’m the only one having the same issues, but someone advise me to contact them at {metamaskwallet.fix@gmail.com} they’ll assist you too

@paschamo @cartyisme Was happy to mint and will be happy to hold my beauties!

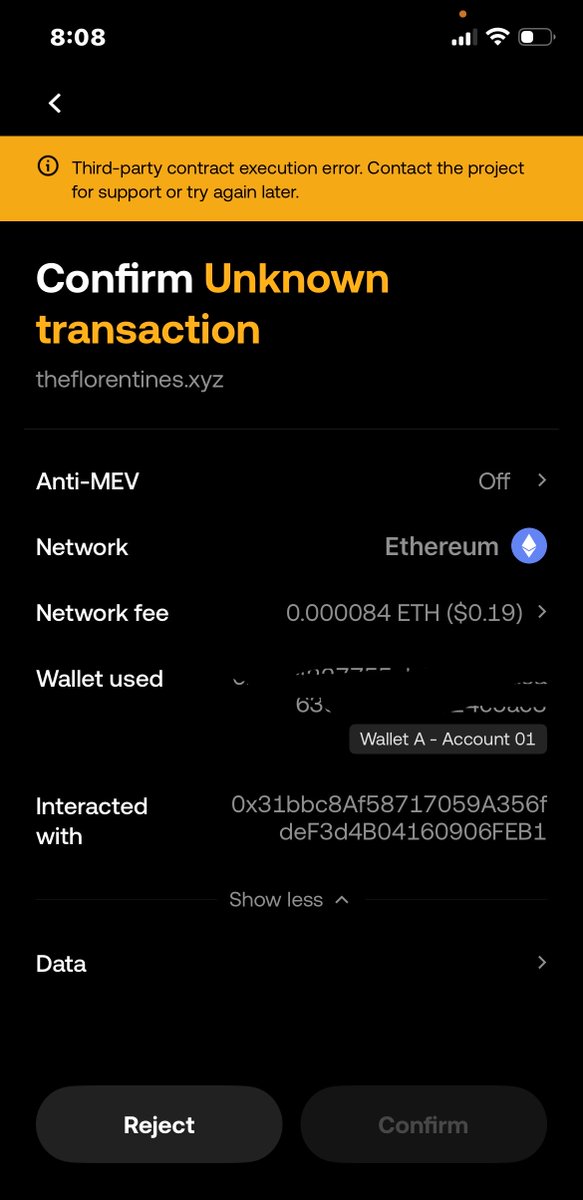

@swimsmoke i can't see the wallet provider, and am unfamiliar with UI, what wallet is this?

@cartyisme tx 1:

0x87e0b7a5af382b10e61e5b68871e257ce7fe2263a967a61c8cbdd20bf6b9b001

tx 2:

0xc30746f83a72e90be143baee8ea709119231926944b9d8c45fa5eef0c9d4e788

@cartyisme I had a failed tx because you stopped the mint right when the FCFS started.

Minted through etherscan but it failed

Anws it's fine

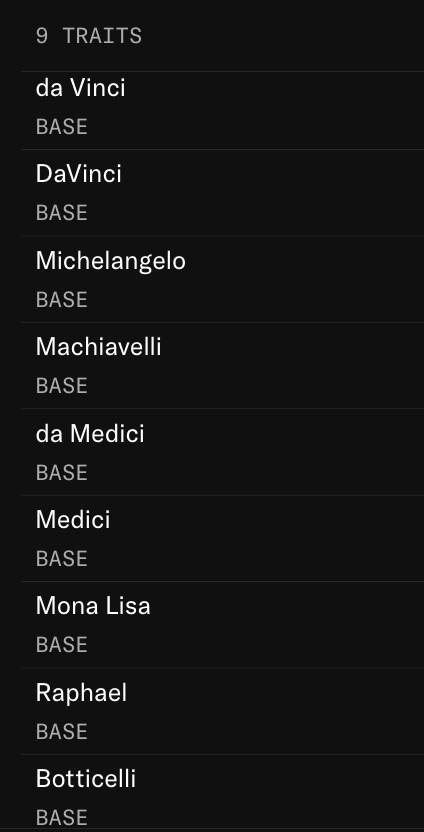

@_mm314 @cartyisme What’s the diff between the variations ? Medici vs da Medici etc

You should start collecting one or more Florentines of each type

There are 9 of them (corrected - 7 actually), listed below.

By @cartyisme

@_mm314 Yep, I'm building solo, and an onchain project like this takes serious attention to the smaller details; this one slipped through the cracks. Thought ahead enough to set a function to fix it if I had an issue tho. I have an identical test contract on mainnet, gonna test there then push the update.

@JT0JB7_eth @cartyisme @opensea Great collection, always interesting to see things done differently 🫡

Had to add the skulls, pipe and pixel glasses to mine 😂

I don’t know @cartyisme too well but he’s been amazingly helpful to the Genuine Undead and I want to support his pixels

Love the old gameboy references in The Florentines branding they have me in the feels

Just sniped this 2 trait Machiavelli on Tetris

⬜️⬛️🟩

@_mm314 @climbingrank haha still, amaze... 100ETH in volume in 24hrs is a great start!

Cartyisme retweeted