Carson Chow

2.4K posts


@ccc1685

Applied mathematics, biology, computational neuroscience

Joined December 2010

170 Following · 142 Followers

Explore this gift article from The New York Times. You can read it for free without a subscription. nytimes.com/2026/03/15/bus…


@TrentonBricken I admire the principled actions, but the usual contract states that the product is to be used only for legal activities. If random killing and mass surveillance are considered legal, then I think we may have bigger problems.


@ATabarrok I mostly don't bother trying to get reimbursed. The Swiss method is the best. They just hand you a wad of francs.


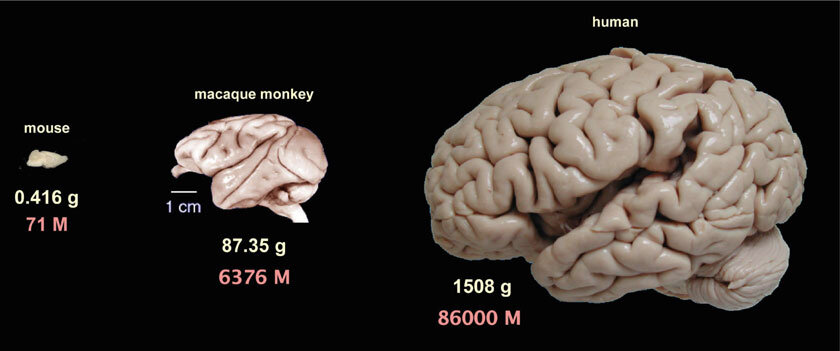

@SuryaGanguli 2) So it may not be a fair comparison to use 20 W for the human energy use per token. On the flip side, the 20 W doesn't account for the energy needed to keep the brain alive, such as pumping blood, making glucose, etc. Anyway, it's not a trivial comparison.


Brain:

20 W * 20 years ~ 3,500 kWh

Llama-405B:

700 W/GPU * 30M GPU-hours ~ 21M kWh

AI training ~ 6,000 * human training
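The arithmetic in this post can be checked with a short script. This is a minimal sketch that uses only the figures quoted above (20 W for 20 years, 700 W per GPU for 30M GPU-hours); the function names and the 365-day year are assumptions made for illustration.

```python
# Back-of-the-envelope check of the training-energy comparison.
# All input figures come from the post; the helper names are hypothetical.

HOURS_PER_YEAR = 24 * 365  # assumption: 365-day year, no leap days


def brain_training_kwh(power_w=20.0, years=20.0):
    """Energy used by a brain running at constant power for `years`, in kWh."""
    return power_w * years * HOURS_PER_YEAR / 1000.0


def gpu_training_kwh(power_per_gpu_w=700.0, gpu_hours=30e6):
    """Energy used by a training run, given per-GPU power and total GPU-hours, in kWh."""
    return power_per_gpu_w * gpu_hours / 1000.0


brain_kwh = brain_training_kwh()
gpu_kwh = gpu_training_kwh()
ratio = gpu_kwh / brain_kwh

print(f"Brain: {brain_kwh:,.0f} kWh")
print(f"GPU training: {gpu_kwh:,.0f} kWh")
print(f"Ratio: {ratio:,.0f}x")
```

With these inputs the brain figure comes to about 3,500 kWh and the ratio to roughly 6,000, matching the rounded numbers in the post.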

Chief Nerd@TheChiefNerd

🚨 SAM ALTMAN: “People talk about how much energy it takes to train an AI model … But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”


@SuryaGanguli 1) The 20 watts, which is the average power a human brain uses, is a baseline rate: the brain draws about 20 watts regardless of what you are doing. Metabolic measurements seem to indicate that "thinking hard" has essentially no measurable effect on energy use.


AI inference energy cost for a >405B model:

~ 5 kWh per 1M output tokens (order of magnitude)

Human response time:

1M tokens * (3/4 words/token) / 2.5 (words/second) = ~ 83 hours for a human to say 1M tokens

Human energy cost:

20 watts * 83 hours = 1.66 kWh / 1M tokens

Good to hear from you @sschoenholz! The original post was addressing the incorrect statement that human training costs more energy than AI training.

For inference, the above is a rough comparison. It suggests that the energy costs are similar, but that the two operate at very different points on the tradeoff curve between power and time: AI is high power and fast, while humans are low power and slow. The energy cost, which is the integral of power over time, is similar for both, with humans perhaps a little better, depending on the exact value assumed for the AI inference cost.

Of course any individual human cannot answer all the questions an LLM can, but the energy cost of a human to *say* the same answer as the LLM is a little less.
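The inference estimate can be reproduced the same way. This is a rough sketch using only the numbers quoted in the post (3/4 words per token, 2.5 words per second, 20 W, and an order-of-magnitude 5 kWh per 1M output tokens for the model); the function names are hypothetical.

```python
# Rough check of the human-vs-AI energy cost per 1M output tokens.
# Inputs are the post's figures; helper names are illustrative only.


def human_speaking_hours(tokens=1e6, words_per_token=0.75, words_per_second=2.5):
    """Hours a human would need to say `tokens` tokens at a given speaking rate."""
    seconds = tokens * words_per_token / words_per_second
    return seconds / 3600.0


def human_energy_kwh(hours, power_w=20.0):
    """Brain energy over `hours` at constant power, in kWh."""
    return power_w * hours / 1000.0


hours = human_speaking_hours()
human_kwh = human_energy_kwh(hours)
ai_kwh = 5.0  # order-of-magnitude figure quoted in the post

print(f"Human speaking time: {hours:.1f} hours")
print(f"Human energy: {human_kwh:.2f} kWh per 1M tokens")
print(f"AI / human energy ratio: {ai_kwh / human_kwh:.1f}")
```

Carrying the unrounded 83.3 hours through gives about 1.67 kWh rather than 1.66 kWh, which does not change the conclusion: the two costs are within an order of magnitude of each other.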

Sam Schoenholz@sschoenholz

@SuryaGanguli 👋 Hi, hope everything is going great!! Just would observe that AI training is amortized over all inference from that model, which isn’t true for people! So LLMs are kind of a steal.


@nblqbl Beautiful example of the ambiguity of English. Grammatically, it is not clear whether "swirling grain pattern" applies to the apple or to the pate.


@SebastianSeung A premise of AGI zoomers is that instances of the AI can learn independently and link to each other. Human intelligence has several billion minds learning and processing information independently (or at least quasi-independently). Not sure the hive mind beats a decorrelated MCMC.
