Christopher Z. Cui

421 posts

Christopher Z. Cui

@ccui9

Just a guy who likes writing, games, and boba. 2nd year PhD @UCSanDiego. @rajammanabrolu Prev: Intern@MBZUAI IFM Inc: Intern @ MSR Montréal

For this week's NLP seminar, we are excited to host @ZiangXiao from Johns Hopkins University! Date and Time: Thursday, May 21, 11:00AM — 12:00 PM Pacific Time. Zoom Link: stanford.zoom.us/j/93941842999?… Title: Evaluation is Power. How can we use it well? Abstract: Evaluation steers the field of AI. It shapes what is worth building, what counts as progress, and what gets deployed and regulated. However, are our evaluation practices keeping pace with that responsibility? Benchmarks often fail to measure what they claim, and practitioners rarely find that these evaluations translate into actionable improvements. In this talk, I argue that good AI evaluation rests on two foundations. First, validity. Drawing on measurement science, we developed a conceptual framework with tools that treat benchmark design as the disciplined construction of measurement instruments. This approach exposes hidden assumptions and makes evaluation accountable to the constructs it intends to capture. Second, human-centeredness. A methodologically rigorous evaluation can widen the sociotechnical gap if the construct was chosen without the people it concerns. I show how HCI methods can help us reveal frictions in human-AI interaction that benchmarks often overlook. I will close by introducing OpenEval, an ongoing infrastructure effort to realize these two foundations, and show how it enables more valid, auditable, and participatory evaluation. Evaluation is power. We should use it well. Hope to see you all there!

🌳 Introducing Orchard — an open-source agentic modeling framework! 🎉 One thin & cheap sandbox infra powers training recipes across SWE / GUI / personal-assistant agents: ⚙️ Orchard Env: 0.28s exec latency; 100% success @ 1,000 parallel sandboxes 💪 🛠️ Orchard-SWE: 67.5% on SWE-bench Verified (30B-A3B, ~3B active) 🖥️ Orchard-GUI: 68.4% avg on WebVoyager / Online-Mind2Web / DeepShop (4B!) 📬 Orchard-Claw: 73.9% pass@3 on Claw-Eval 🔗 arxiv.org/abs/2605.15040 📦 Code and data are coming soon! Let's accelerate open agentic AI! 🚀

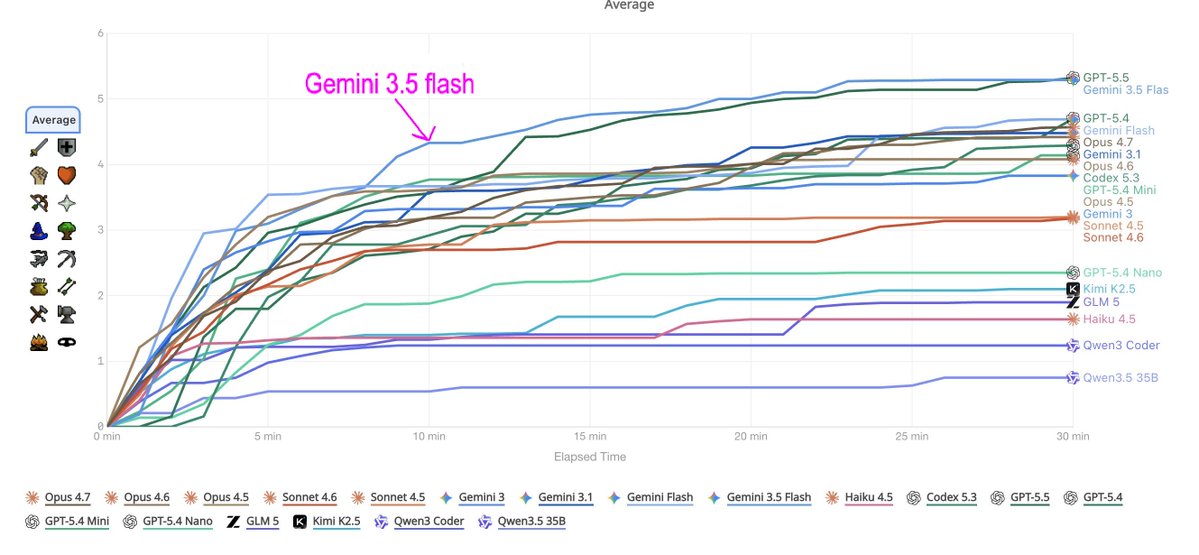

Here's how each model scored over 24 hours of wall-clock time, on a single attempt. GPT-5.5 performs best, with a late gain in the final hours. Claude models settle in by hour 8 and cluster within noise. More at: gbaeval.com/leaderboard

🚨Typical RL algorithms and on-policy distillation methods are blind samplers: they use privileged info to score rollouts, but not to *find* them. We ask: can we use privileged info to *actively sample* the rollouts RL wishes it can stumble upon with compute? ⤵️ Pedagogical RL

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/