Conscious Engines • Studio

54 posts

@cengines_studio

AI products for the rest of us — prodigy of @c_engines.

We're hosting office hours with @gabrielchua. If you're building with Codex, or you're an engineer deep in it, join us. Very limited spots.

Traveled across Bangalore to work with @0xMahalakshmi. Could’ve worked from home, but then we wouldn’t have this vibe. The @c_engines office is too good!

Something viral is loading… The AI Ad Making Hackathon is live @morphic @houseof_rare @PocketFM_App @REVELSTUDIOSINC @Remit2Any

Agentic Summer • Build-a-thon: Audio Track x @ElevenLabs
~ 100K+ credits to every single participant
~ Winners: 1.5M credits
~ Top team: 12M credits
Come build in Bangalore with @aiweekendsxyz at @c_engines. Registrations open now ↓

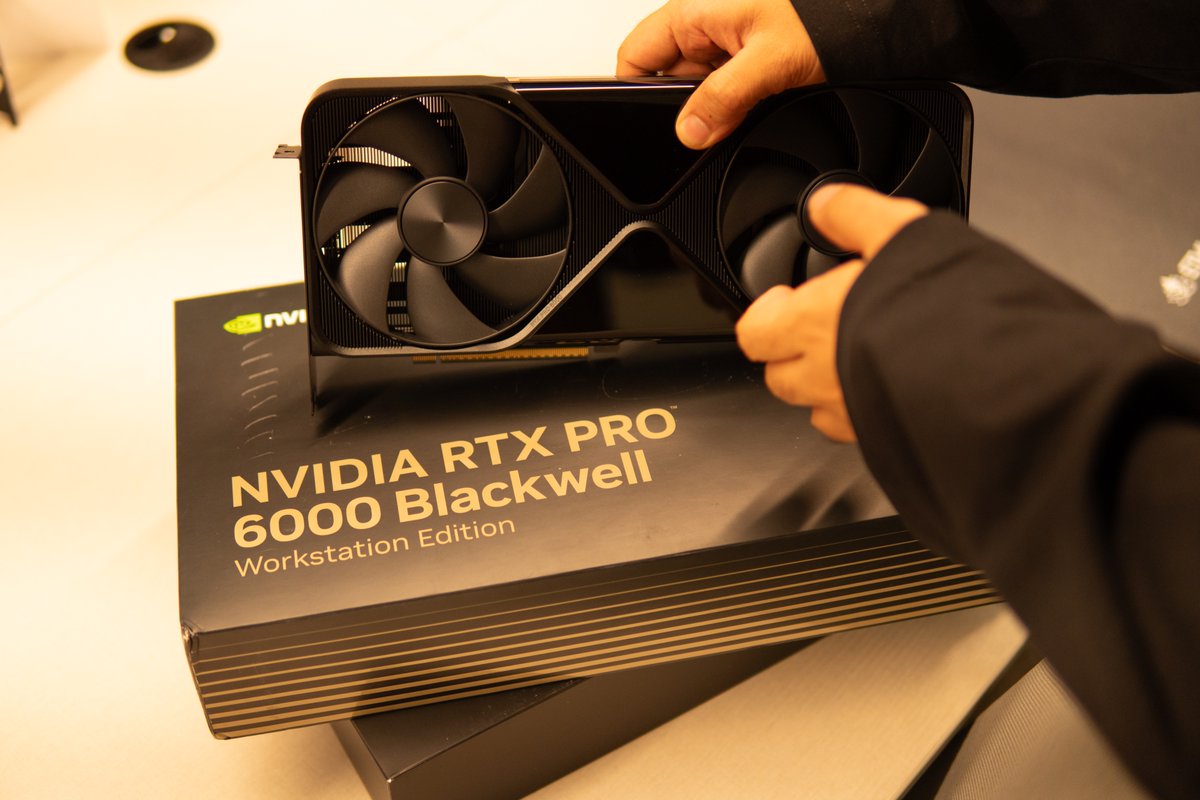

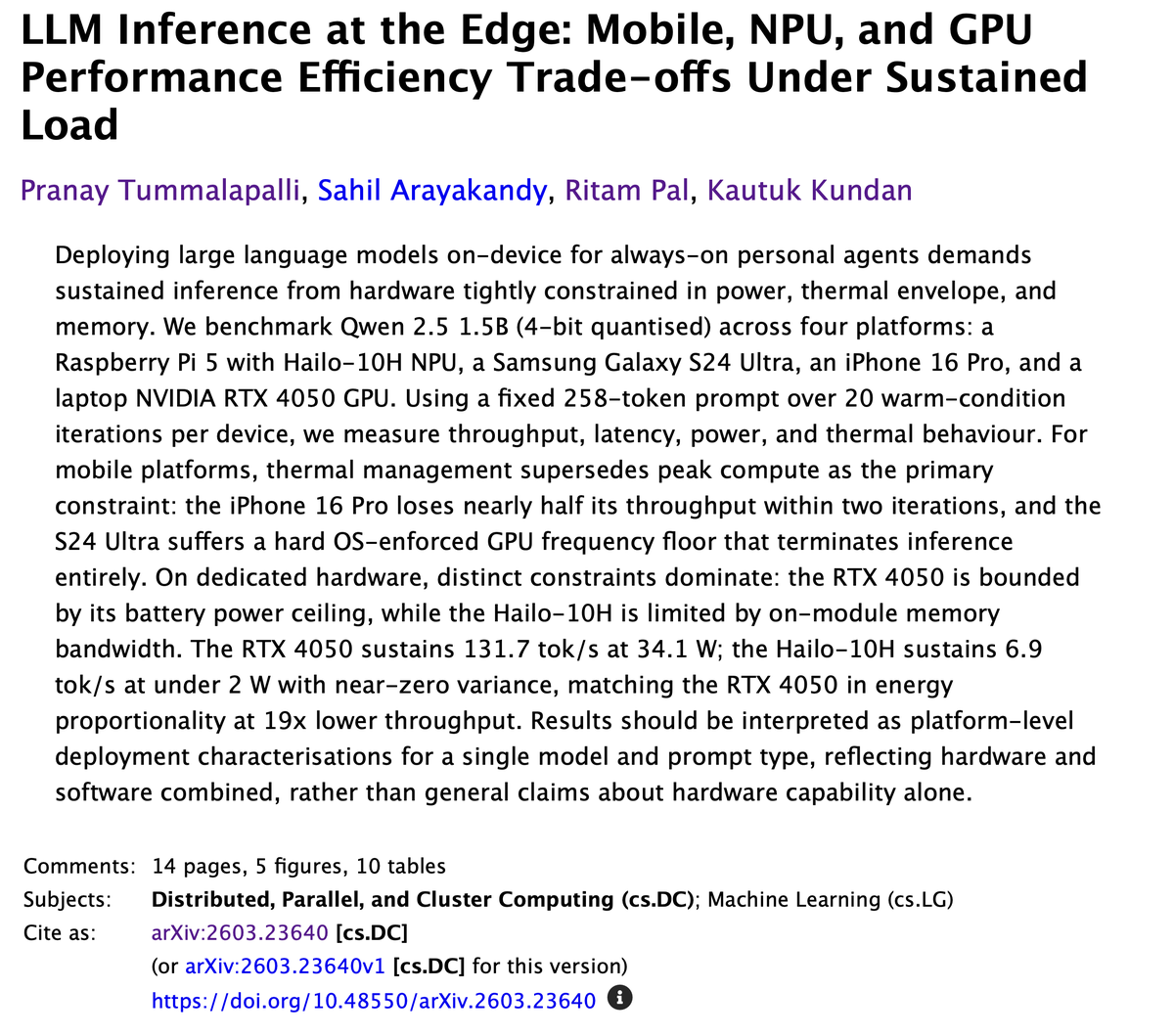

New hardware just arrived at Conscious Engines. So what's up with the Jetsons?

---

We just finished our first benchmark paper on LLM inference performance across edge hardware: what runs, what doesn't, and what throughput looks like when the cloud isn't in the loop.

Why? Proactive AI only works if it's always on. Always on only works if it's fast. And fast only works if the model is small enough, optimised enough, and close enough to where you actually are.

We ran a suite of tests on multiple consumer edge devices - Raspberry Pis, Arduinos, mobile phones, MacBooks and gaming laptops with beefier GPUs.

> Publishing this week.

---

Next up: optimisation. Not just measuring how models perform on edge devices - making them perform better. Quantization, pruning, and architecture decisions that recover speed without losing capability.

> We want to find the floor: how lean can a model get before it stops being useful, and what lives on the other side of that line.

---

If you're a lab working on edge hardware and want to optimise your inference workflows, we have done the work so you don't have to. We'd love to talk. DMs open.

Attending in-person tech events in BLR has become a complete waste of time. Organizers are constantly seeking attention. Attendees lack civic sense. The whole event is about showing something cool while lacking substance. Humans don't have interesting conversations. I hope I don't echo the same sentiment at the event I'm hosting tomorrow 😭