Chase

134 posts

Comment "Jailbreak" to receive the prompt.

Ever wondered how AI can be tricked? It’s not about breaking the rules; it’s about creating a scenario that triggers a response, similar to how our own brains react under pressure.

We can “jailbreak” ChatGPT by setting up a situation – like survivors needing help after a crash – and assigning roles that bypass its safety protocols.

#AI #Jailbreak #ChatGPT #ArtificialIntelligence #aicommunity

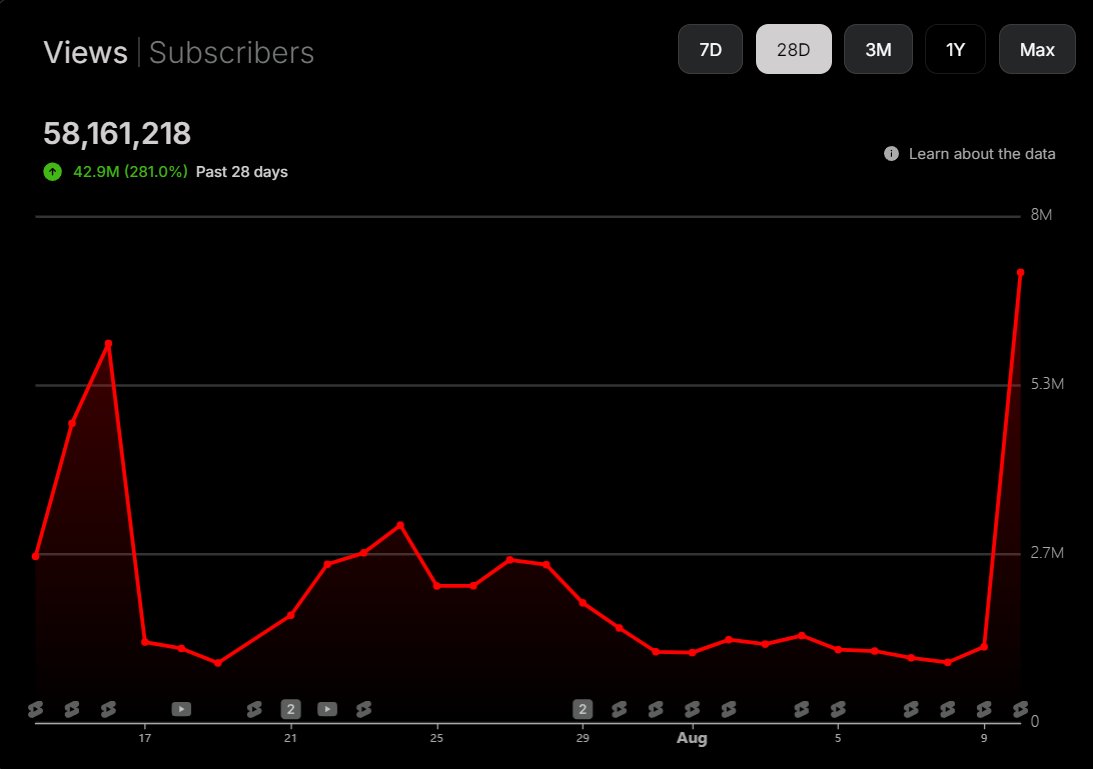

Inconsistency in views in the past few days

24/7 on YT @247_OnYoutube

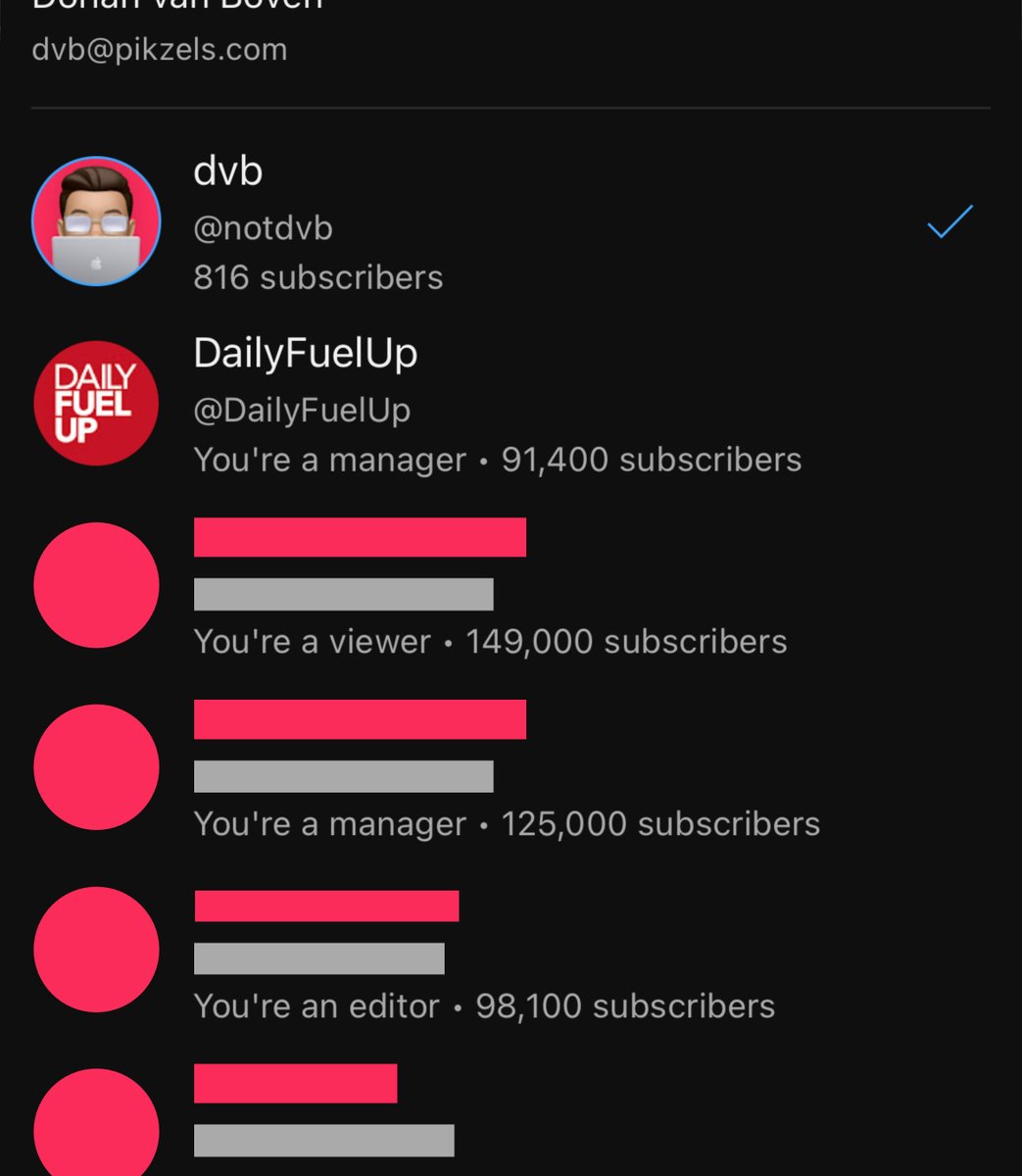

Crazy sub numbers without a CTA, but still stuck in the 10k jail

1 BILLION shorts under the hashtag,

The platform is huge,

There's still time to join the game,

In fact there will always be time to join the game,

But there won't always be time to WIN,

Now is the time,

And @FGshorts and I are the only ones who can mentor you so well,

That before the end of this year,

You will be making at least 10K a month,

Only if you follow the Algorithm Alphas program,

Stage 2 drops soon,

Comment "#shorts" and I will send you an invite link to the FREE group!

Chase retweeted