Chen Wang

321 posts

@chenwang_j

Final-year CS PhD @Stanford. Prev @GoogleDeepMind @NVIDIA @MIT_CSAIL. Robotics/Manipulation

It is great to know that my student Haozhi Qi @HaozhiQ, jointly supervised with Professor Jitendra Malik @JitendraMalikCV, is the recipient of the Lotfi A. Zadeh Prize for 2024-25, given by the EECS Department of UC Berkeley to graduating PhD students. Congratulations!

Introducing Veo 2, our new, state-of-the-art video model (with better understanding of real-world physics & movement, up to 4K resolution). You can join the waitlist on VideoFX. Our new and improved Imagen 3 model also achieves SOTA results, and is coming today to 100+ countries in ImageFX.

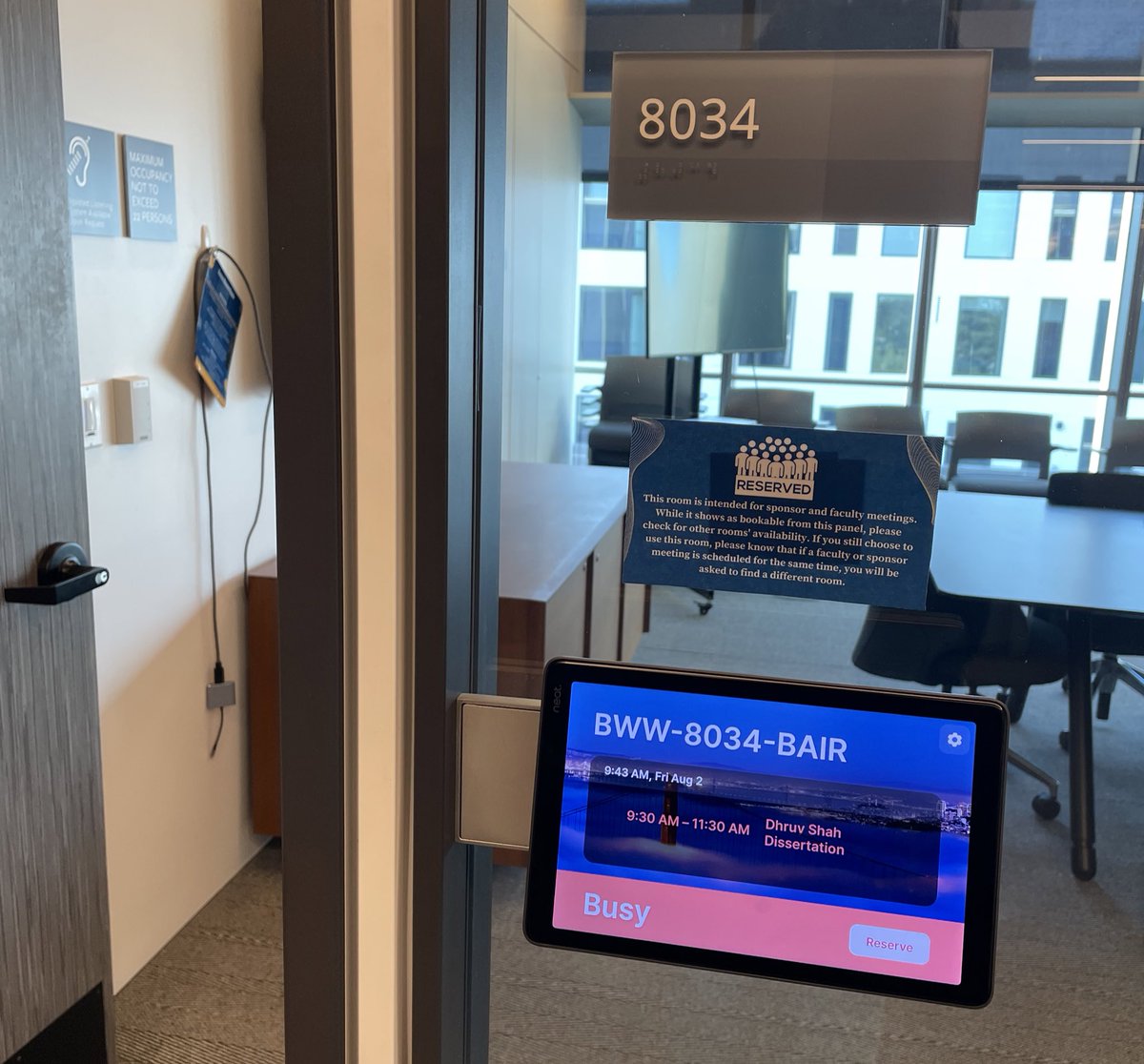

How can we collect high-quality robot data without teleoperation? AR can help! Introducing ARCap, a fully open-sourced AR solution for collecting cross-embodiment robot data (gripper and dex hand) directly using human hands. 🌐:stanford-tml.github.io/ARCap/ 📜:arxiv.org/abs/2410.08464

What structural task representation enables multi-stage, in-the-wild, bimanual, reactive manipulation? Introducing ReKep: LVM to label keypoints & VLM to write keypoint-based constraints, solve w/ optimization for diverse tasks, w/o task-specific training or env models. 🧵👇

🎺 Announcing our CoRL 2024 workshop “Learning Robot Fine and Dexterous Manipulation: Perception and Control” in Munich. Join us to hear from an incredible lineup of speakers! And don’t miss the opportunity to submit your work and participate! Check out: dex-manipulation.github.io/corl2024/