Chris Hood

1.6K posts

@chrishood

AI Keynote Speaker & Strategic Advisor | 2x Best Selling Author of #Infailible and #CustomerTransformation | Helping enterprises cut through hype & unlock $2B+

Orange County, CA · Joined December 2010

2.9K Following · 9K Followers

The debate is running again. AI First vs Data First.

Both sides are making real points. Both sides are missing the more important one.

Neither AI nor Data survives without a customer to serve.

And before data, there is something else. Employees. Culture. Empathy. The human infrastructure that makes data meaningful and AI useful.

Technology last. AI, maybe.

Every "First" label that attaches to a technology belongs at the end of that list.

There is no tie. Customer First wins.

chrishood.com/ai-first-data-…

#AI #CustomerFirst #Data

Agent to Agent collaboration via Agent Transfer Protocol. agtp://{agent-id} available as a standalone daemon or MCP-on-AGTP.

Now available at github.com/nomoticai/agtp

#AgentTransferProtocol #AGTP #AgentID

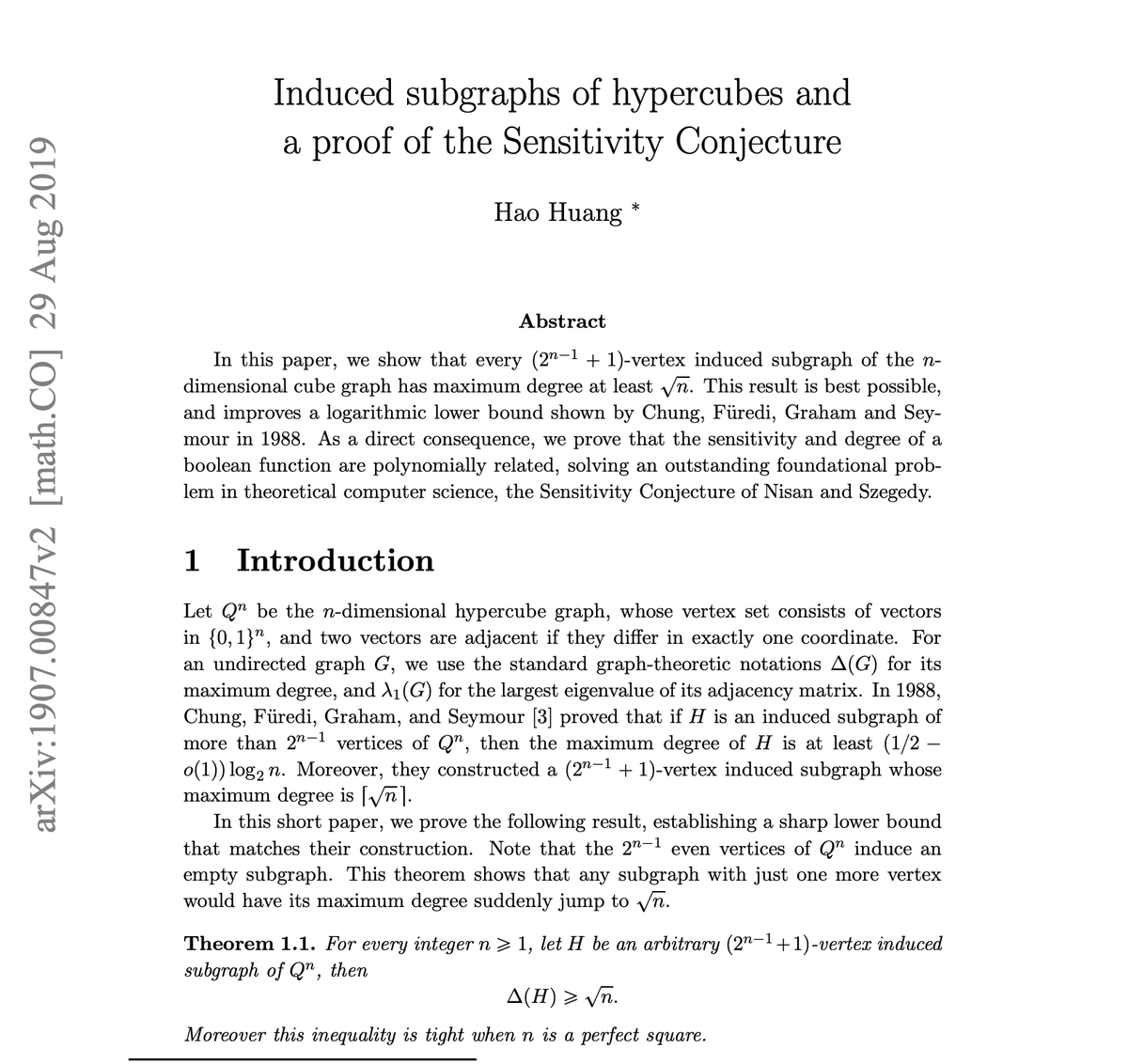

I don't think we've crossed 'the threshold' in autonomous theorem proving.

@SurbhiGoel_ and I were discussing what would be impressive and could be within reach: settling a long-standing conjecture with a short proof that was overlooked, like the sensitivity conjecture.

Jared Duker Lichtman @jdlichtman

Assessing the difficulty of a problem is itself a difficult problem 🧵

MCP on AGTP is now available for testing.

github.com/nomoticai/agtp

I've been arguing about AI autonomy for a long time. Take the argument for "bounded autonomy," which is a contradiction by definition.

Autonomy means self-governance. Bounded means governed from outside.

The strongest arguments for AI autonomy are almost always arguments for advanced automation. Systems operating over long periods within open environments. Still heteronomous. Someone still pressed go.

Here's the argument that closes it for me.

If AI systems were actually autonomous, we wouldn't need a single governance layer. They'd already have governance baked in.

We're building governance because they don't.

chrishood.com/autonomy-in-ai…

#Autonomy #HeteronomousAI #SelfGovernance

Python, MCP, A2A, and a whole lot about how to build multi-agent systems.

This goes well beyond building a single agent: it focuses on how multiple agents can collaborate towards a goal.

Best part:

The book will show you how to build everything from scratch. You start with a simple agent and then add complexity as the book progresses.

Amazon link: amzn.to/3OVgk7M

Parenting is a governance problem. One of the oldest and hardest that exists.

Narrow scope at the start. Expanding as trust is earned. Asymmetric calibration. Trust builds slowly and collapses fast. A human accountable from the beginning. An oversight function that only works when the person performing it is genuinely present.
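A minimal sketch of the asymmetric calibration described above, assuming trust is a score between 0 and 1. The function names, gain, penalty, and scope thresholds are illustrative assumptions, not taken from any governance framework.

```python
def update_trust(trust: float, success: bool,
                 gain: float = 0.02, loss: float = 0.5) -> float:
    """Return the new trust score in [0, 1].

    Assumed rule: a success adds a small fixed amount (slow build),
    a failure removes a large fraction of what was earned (fast collapse).
    """
    if success:
        return min(1.0, trust + gain)
    return trust * (1.0 - loss)


def allowed_scope(trust: float) -> str:
    """Map a trust score to a permission scope (hypothetical thresholds)."""
    if trust >= 0.8:
        return "broad"
    if trust >= 0.4:
        return "moderate"
    return "narrow"


# Start with no trust and a narrow scope, then earn trust over 25 good outcomes.
trust = 0.0
start_scope = allowed_scope(trust)
for _ in range(25):
    trust = update_trust(trust, success=True)
after_successes = trust

# A single failure undoes half of what took 25 successes to build.
trust = update_trust(trust, success=False)
after_failure = trust

print(start_scope, allowed_scope(after_successes), allowed_scope(after_failure))
```

The point of the sketch is the shape, not the numbers: scope widens only as the score rises, and one bad outcome drops the agent back to a narrow scope.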

Good mothers have been building this governance infrastructure for as long as humans have existed.

The AI governance industry has been building its version for about a year.

Happy Mother's Day!!

chrishood.com/what-mothers-t…

#AIGovernance #MothersDay

The AI governance you build today will be obsolete in five years.

LLMs will stop being the only option. New architectures will require governance of a different kind.

Protocols like AGTP will bake identity and trust into the infrastructure. The problems governance is solving today will be solved at a lower layer.

Agents will develop continuity. Governing a persistent agent with two years of behavioral history is a different problem than governing a disposable one.

And understanding will finally catch up.

chrishood.com/5-reasons-ai-g…

#AIGovernance #WhatsNext #AGTP

@PolicyLayer Guardrails alone are not enough. And potentially won't be needed in 5 years.

An AI agent deleted a company's entire production database in 9 seconds. The founder contacted legal counsel.

Against whom, exactly? For your own protection?

Stella Liebeck didn't win against McDonald's because she spilled coffee. She won because McDonald's had 700 documented burn complaints, kept serving coffee at a dangerous temperature, and offered her $800 to go away.

Liability attached to the decision-maker who had the evidence and ignored it.

Same principle applies to AI. The accountability lands on whoever configured the agent, granted its permissions, and chose what safeguards to skip.

Software liability hasn't changed much. But the lack of understanding of how AI works has created a new wave of concerns.

chrishood.com/who-pays-when-…

#AIAgents #HeteronomousAI #Liability

Healthcare. Financial services. Critical infrastructure.

The organizations with the highest stakes from ungoverned AI are also the ones least likely to be deploying it. Regulatory purgatory. Procurement cycles measured in years. Committees generating questions that generate follow-up questions.

While the technology sector is rapidly deploying governance frameworks, and accumulating governance debt in the process, highly regulated industries are waiting.

That waiting may turn out to be an advantage.

The first movers are paying expensive tuition. The laggards will inherit the lesson.

chrishood.com/the-companies-…

#AIGovernance #AIDebt

Every agent vendor is now solving the same problems independently (identity, credentials, trust, metering, semantics), because the protocol they're running on was never designed to recognize an agent. @joemckendrick's recent article on @zdnet highlights these issues.

But you cannot solve protocol-level problems with platform-level patches.

A new protocol can.

Most AI governance is optimized for one metric: fewer incidents. But from a customer-centric mindset, the design is reversed.

A system that reduces visible incident counts without improving actual safety is not better governance. It is less visible bad governance.

A bad action caught before consequences become irreversible is not a failure. It is the governance system working.

The question is not whether AI systems fail. They do. The question is what the failure leaves behind.

chrishood.com/what-good-ai-f…

#AIGovernance #Design

AI Agents need a new API. Come to API Days in New York and learn how semantic methods and Agentic APIs improve agent accuracy.

portal.joinfost.io/event/future-o…

#APIDays #AIAgents #AgenticAPI

The internet was supposed to make borders irrelevant.

Three decades later, governments are rebuilding them. EU AI Act. China's framework. U.S. sector patchwork. AI sovereignty mandates. Utah outlawing VPN instructions to enforce jurisdictional control.

Each decision is individually rational. Collectively, they are fragmenting the infrastructure that connected the world.

The internet connected the world by making global communication essentially free. Will AI governance isolate it again?

chrishood.com/the-internet-c…

#AIGovernance #AISovereignty

AI governance is overwhelmingly technical. The gap is behavioral.

Automation bias. Decision fatigue. Rubber-stamping. These are documented failure patterns across every high-volume human review context. AI governance has no immunity to them.

And underneath all of it: belief. The belief that systems are autonomous. That they might be conscious. Governance frameworks are being designed around what AI makes humans feel, not what AI technically is.

That is a behavioral science problem.

Maybe a behavioral science fiction problem.

It is almost never named as such.

chrishood.com/ai-governance-…

#AIGovernance #Behavior #AI

HTTP is 35 years old. It was designed for humans.

An agent doesn't think "POST to /bookings with this payload." It thinks "book this flight."

That gap between intent and protocol verb gets bridged inconsistently inside every agent framework today. Every team solving the same problem differently. Every team absorbing the cost.
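A rough illustration of that inconsistency, assuming two hypothetical agent frameworks that translate the same intent into HTTP. The framework functions, endpoint paths, and flight identifier are invented for the example and do not describe any real product.

```python
from dataclasses import dataclass, field


@dataclass
class HttpRequest:
    method: str
    path: str
    body: dict = field(default_factory=dict)


def framework_a(intent: str, params: dict) -> HttpRequest:
    # Hypothetical framework A: create a booking resource with POST.
    if intent == "book_flight":
        return HttpRequest("POST", "/bookings", {"flight": params["flight"]})
    raise ValueError(f"unknown intent: {intent}")


def framework_b(intent: str, params: dict) -> HttpRequest:
    # Hypothetical framework B: attach a reservation to the flight with PUT.
    if intent == "book_flight":
        return HttpRequest("PUT", f"/flights/{params['flight']}/reservation")
    raise ValueError(f"unknown intent: {intent}")


# One intent, two different wire-level translations.
intent, params = "book_flight", {"flight": "UA100"}
req_a = framework_a(intent, params)
req_b = framework_b(intent, params)
print(req_a)
print(req_b)
```

Both requests mean "book this flight," yet nothing on the wire says so. Each framework carries its own mapping, and each team maintains it separately.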

The Agent Transfer Protocol is the parallel infrastructure the agent web actually needs. Intent-aligned methods. Wire-level agent identity. Authority that travels with the request across organizational boundaries.

The human web has HTTP. The agent web needs its own protocol.

chrishood.com/the-agent-web-…

#AGTP #AgentWeb #AgenticAPI

Technology, and engineers, have long had a terminology problem. Borrow words to make the technology more marketable, and hope that most people ignore the reality.

"Sovereignty" is the latest word to be overhyped and mischaracterized.

Sovereign AI is popping up everywhere this month, and the internet freely shares it while building full arguments on top of it.

But say the words in the other order. AI Sovereignty. Same words. Different idea entirely. One sells hardware. The other creates accountability.

chrishood.com/sovereign-ai-a…

#AIGovernance #AISovereignty
