Jukan@jukan05

Samsung vs. SK Hynix: A Showdown Over the 140 Trillion Won AI DRAM 'Gold Mine'... Who Will Emerge Victorious?

"South Korea's annual exports could increase into the multi-hundred billion U.S. dollar range."

This was the global tech industry's assessment after OpenAI CEO Sam Altman, who visited South Korea on the 1st, asked Samsung Electronics and SK Hynix to "supply over 900,000 wafers per month of advanced DRAM (based on wafer input) for the Stargate project." It implies that the two companies could add at least $100 billion (approximately 140 trillion won) in annual advanced DRAM sales by supplying OpenAI. AI DRAM has become the 'gold mine of the 21st century.'
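As a rough sanity check on these figures, the sketch below derives the exchange rate implied by "$100 billion ≈ 140 trillion won" and the annual sales per wafer implied by 900,000 wafers per month. It treats the article's "at least" and "over" figures as round numbers and assumes the full monthly volume runs for twelve months; the function and variable names are illustrative only.

```python
# Figures quoted in the article, treated here as round numbers.
ANNUAL_SALES_USD = 100 * 10**9    # "at least $100 billion"
ANNUAL_SALES_KRW = 140 * 10**12   # "approximately 140 trillion won"
WAFERS_PER_MONTH = 900_000        # requested monthly wafer input


def implied_exchange_rate(krw: int, usd: int) -> float:
    """Won-per-dollar rate implied by the two sales figures."""
    return krw / usd


def implied_sales_per_wafer(annual_usd: int, wafers_per_month: int) -> float:
    """Annual sales divided by a full year (12 months) of wafer input.

    Assumption: the monthly volume is sustained for the whole year.
    """
    return annual_usd / (wafers_per_month * 12)


rate = implied_exchange_rate(ANNUAL_SALES_KRW, ANNUAL_SALES_USD)
per_wafer = implied_sales_per_wafer(ANNUAL_SALES_USD, WAFERS_PER_MONTH)

print(f"Implied exchange rate: {rate:,.0f} won per dollar")
print(f"Implied sales per wafer: ${per_wafer:,.0f}")
```

Under these assumptions the figures work out to about 1,400 won per dollar and a little over $9,000 in sales per wafer. This is a back-of-the-envelope number, not a quoted price.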

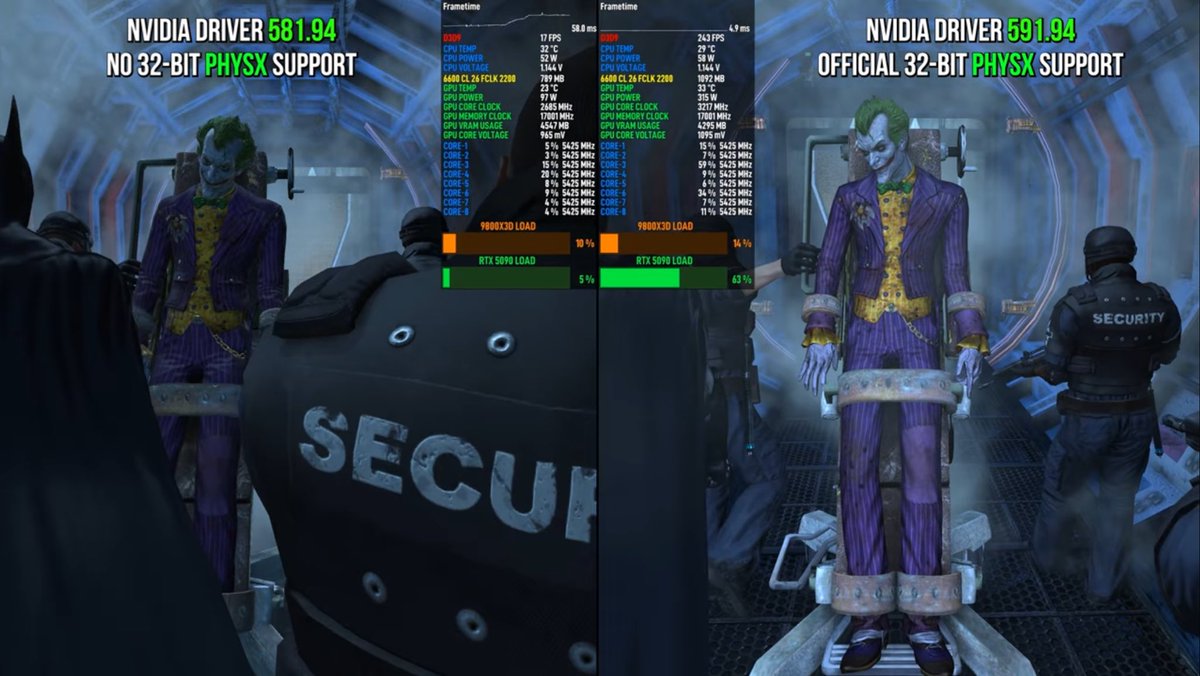

A head-to-head battle between Samsung Electronics and SK Hynix to secure this volume is expected to resume. Some predict it will play out differently from the 2023-2024 supply race for HBM3 (4th-generation High Bandwidth Memory) and HBM3E (5th-generation High Bandwidth Memory), which SK Hynix dominated. While SK Hynix is putting all its effort into defending its top position, Samsung Electronics, which has spent about two years rebuilding its fundamental technological capabilities, is preparing a counterattack. Another variable is that NVIDIA, a major buyer of AI DRAM, has begun using 'low-power, cost-effective' DRAM.

What is the 'Cutting-Edge DRAM' OpenAI Mentioned?... Samsung and SK Have Different Interpretations

On the 1st, OpenAI published a post on its official website titled 'Samsung and SK Join OpenAI's Stargate Project to Advance Global AI Infrastructure.' Regarding the DRAM supply, it stated, "Samsung and SK plan to expand their production capacity for the cutting-edge DRAM required for OpenAI's advanced AI models," adding, "The goal is to produce DRAM at a level of 900,000 wafers per month based on wafer input."

However, OpenAI did not specify the exact type of 'cutting-edge DRAM.' Samsung Electronics and SK Hynix are offering their own favorable interpretations.

In a press release on the same day, SK Hynix asserted that 'cutting-edge DRAM = HBM.' The SK Group explained, "SK Hynix will participate as an 'HBM' supply partner in the Stargate project," and "we plan to establish a production system that can respond in a timely manner to the request for HBM supply of up to 900,000 wafers per month."

SK Hynix to Go 'Custom' Starting with HBM4E... Hiring Experienced Design Professionals

Analysts attribute this interpretation to SK Hynix's leadership in the HBM market, the flagship segment of AI DRAM. SK Hynix's top priority is to defend its position in that market as competition intensifies. The HBM market is projected to grow to $100 billion by 2030, accounting for 40% of the entire DRAM market.
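The two projections above together imply a size for the overall DRAM market in 2030. The sketch below makes that arithmetic explicit; it assumes the $100 billion and 40% figures refer to the same year and the same market definition, and the function name is illustrative.

```python
def implied_total_market(segment_usd_bn: float, segment_share: float) -> float:
    """Total market size implied by one segment's size and its share."""
    if not 0 < segment_share <= 1:
        raise ValueError("segment_share must be in (0, 1]")
    return segment_usd_bn / segment_share


HBM_2030_USD_BN = 100   # projected HBM market, in billions of dollars
HBM_SHARE_OF_DRAM = 0.40  # projected HBM share of the DRAM market

total_dram_usd_bn = implied_total_market(HBM_2030_USD_BN, HBM_SHARE_OF_DRAM)
non_hbm_usd_bn = total_dram_usd_bn - HBM_2030_USD_BN

print(f"Implied 2030 DRAM market: ${total_dram_usd_bn:,.0f} billion")
print(f"Implied non-HBM DRAM:     ${non_hbm_usd_bn:,.0f} billion")
```

On these assumptions the projection corresponds to a DRAM market of roughly $250 billion in 2030, of which about $150 billion would be non-HBM DRAM.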

SK Hynix recently announced that it has completed the development of 12-stack HBM4 and is ready for mass production. This means it can start supplying as soon as it gets the final sign-off from NVIDIA. The logic die, which acts as the brain of HBM4, will utilize TSMC's 12nm process.

For the next generation, the 7th-generation 'HBM4E,' the company is considering TSMC's 3nm process for the logic die. With it, SK Hynix is developing a customized product, 'custom HBM4E,' that reflects the performance requirements of various clients, including NVIDIA and Broadcom. The DRAM dies themselves will also move to sixth-generation 10nm-class DRAM (1c DRAM).

The company is also stepping up hiring to strengthen product competitiveness. SK Hynix plans to expand its HBM design workforce by posting openings for experienced professionals this month. The move is seen as preparation for HBM4E and other products that require custom logic die design. The main recruitment targets are said to be engineers with logic design experience at competitors such as Samsung Electronics. The strategy is interpreted as weakening competitors while strengthening SK Hynix's own competitiveness.

Samsung Electronics, Proving the Recovery of its HBM3E 12-Stack Technology

Samsung Electronics' response strikes a slightly different tone from SK Hynix's. In its press release today regarding Altman's request for cutting-edge DRAM supply, it stated that it will "supply high-performance, low-power memory and memory solutions without a hitch." This differs from the interpretation of its competitor SK Hynix, which specifically named 'HBM.'

Samsung's view is that, in addition to HBM, module products based on GDDR7 and LPDDR5X will also be widely used in AI servers. As the focus of AI shifts from training to inference, the company foresees growing demand for AI memory that uses less power and costs less than HBM without a significant drop in performance.

Of course, this does not mean Samsung Electronics is neglecting HBM. Its competitiveness has recently shown signs of recovery.

Although the company has not officially confirmed it, Samsung Electronics reportedly passed NVIDIA's final qualification test for its 12-stack HBM3E last month. It is said to have begun supplying volume after receiving orders from NVIDIA. Passing NVIDIA's test for the 12-stack HBM3E is regarded as a sign that Samsung Electronics' HBM technology is recovering.

Supplying 11Gbps HBM4 Samples... Mass Supplying GDDR

Regarding HBM4, which will determine success in next year's HBM market, Samsung provided a large volume of samples with an operating speed increased to '11Gbps' to NVIDIA last month. Samsung is focusing on securing initial volumes through early sample delivery. This is to avoid repeating the mistakes made with HBM3 and HBM3E, where it lost over 70% of NVIDIA's volume to competitors. There are also rumors that HBM4 mass production will begin within the year.

Custom HBM, which reduces power consumption and die area, is also in preparation. As an 'integrated device manufacturer' capable of handling memory and system semiconductors as well as cutting-edge packaging, the company has established a strategy to focus on turnkey services.

It plans to target the AI memory market by leveraging its competitiveness in DRAMs that are transitioning from general-purpose to AI-specific use, such as graphics DRAM (GDDR) and low-power DRAM (LPDDR). A prime example is the decision to supply 'SOCAMM2,' a low-power DRAM module for servers that can supplement expensive and power-hungry HBM, to major clients (SK Hynix is also a supplier). NVIDIA, the number one AI accelerator company, has started using GDDR as memory for its lightweight products, and Samsung Electronics is supplying the majority of this volume.

Global IB: "The Market Share Gap is Narrowing"

Micron, a 'hot potato,' is considered a variable that will influence the strategies of the Korean memory companies. Until the middle of last month, there was talk in the industry that "Micron failed to meet the operating speed (minimum 10Gbps) required by NVIDIA for HBM4," but CEO Sanjay Mehrotra dismissed this during the earnings call on September 23rd (local time).

However, Mehrotra's statement that "the first mass production of HBM4 is slated for the second quarter of next year, with a full ramp-up in the second half of next year" is seen as failing to dispel market skepticism. That schedule is about two quarters later than the HBM4 mass production timeline targeted by SK Hynix and Samsung Electronics.

The analysis within the semiconductor industry is that "Micron has redesigned its HBM4 to meet NVIDIA's operating speed requirements, and it will need time to produce the volume in earnest."

Many forecasts predict SK Hynix will maintain its dominance in the HBM market until at least 2027. However, some observers speculate that Samsung Electronics will catch up.

In a market share forecast based on data from market research firm TrendForce, global financial firm Bernstein projected 2026 HBM market shares of '45% for SK Hynix, 33% for Samsung, and 22% for Micron,' but predicted the gap would close to '38% for SK Hynix, 38% for Samsung, and 24% for Micron' by 2027.
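To make the narrowing concrete, the short sketch below tabulates the forecast figures quoted above and computes the gap between SK Hynix and Samsung in percentage points. It assumes the quoted numbers are percentage shares (each year sums to 100); the dictionary layout and function name are illustrative.

```python
# HBM market share forecast as quoted in the article, assumed to be percentages.
FORECAST = {
    2026: {"SK Hynix": 45, "Samsung": 33, "Micron": 22},
    2027: {"SK Hynix": 38, "Samsung": 38, "Micron": 24},
}


def share_gap(shares: dict[str, int], a: str = "SK Hynix", b: str = "Samsung") -> int:
    """Gap between two suppliers' shares, in percentage points."""
    return shares[a] - shares[b]


for year, shares in FORECAST.items():
    # Each year's shares should account for the whole market.
    assert sum(shares.values()) == 100
    print(f"{year}: SK Hynix leads Samsung by {share_gap(shares)} points")

gap_2026 = share_gap(FORECAST[2026])
gap_2027 = share_gap(FORECAST[2027])
```

On the quoted figures, SK Hynix's lead over Samsung shrinks from 12 percentage points in 2026 to zero in 2027, while Micron's share rises by 2 points.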