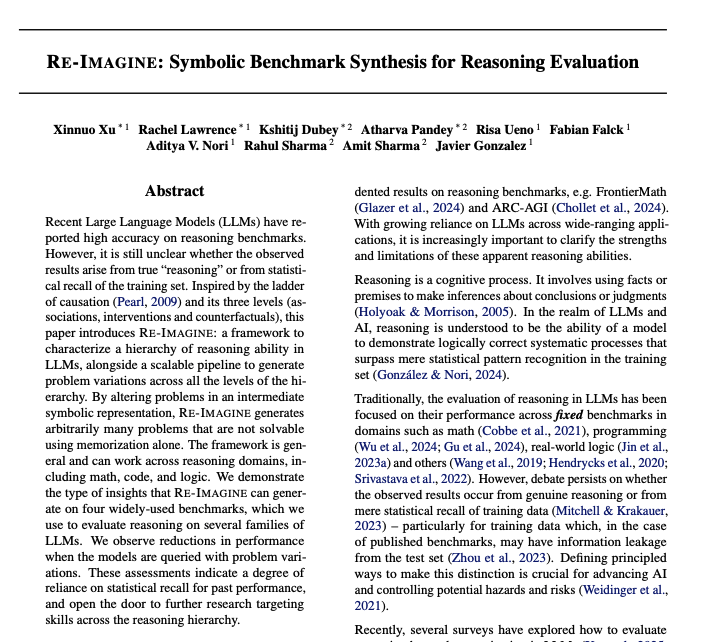

Atharva Pandey retweeted

The better LLMs get at reasoning, the longer their traces get: thousands of tokens, dozens of tool calls. But in law, medicine, and agentic AI, "usually correct" isn't good enough: answers must be verifiably correct.

We built interwhen at @MSFTResearch to make that tractable. And it's now open source.

Across benchmarks, plugging interwhen into an LLM yields:

✅ 100% soundness (with full verifiers)

📈 up to 15% accuracy gain

⚡ ~1.5× compute cost

🧵
