मनीष तिवारी

@compmanish

Cowards always endure sorrow; the earth is for the brave to enjoy. CS Engineer | Indic Wing

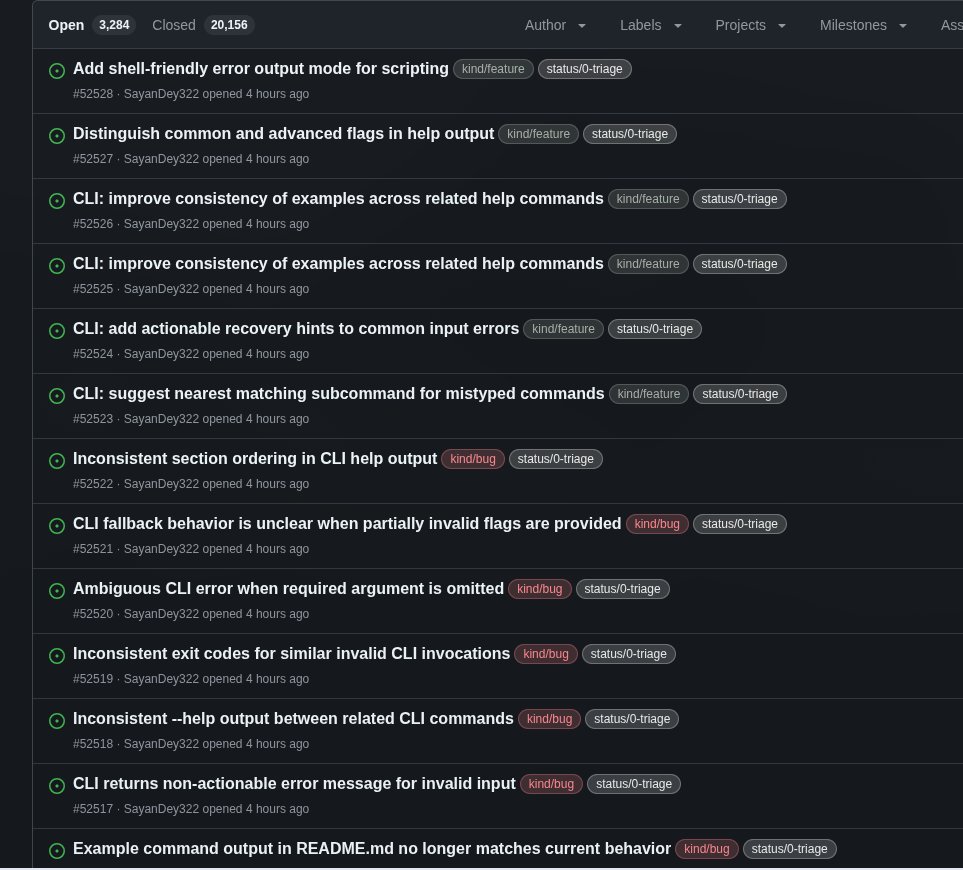

Never thought I’d see this day… an Indian IT major leading a funding round for India’s top foundational model startup. Imagine the upheaval if Accenture led Anthropic’s major funding round. I don’t know what this signals about how Indian VCs think about Sarvam.

Today, we’re taking a step toward truly galactic-scale capabilities. 🚀 We’re partnering with @SarvamAI to bring sovereign AI into orbit aboard India’s first orbital data centre satellite, a pathfinder mission bringing datacenter-class GPUs and high-performance remote sensing together in space. Built and operated by Pixxel, with Sarvam providing the AI backbone, the demonstrator marks a step toward making orbital data centres real, operational, and scalable from India. May the 4th be with us all! ✨