Core Francisco Park @ NeurIPS2025

@corefpark

@Harvard. Science of Intelligence

🚨New preprint! In-context learning underlies LLMs’ real-world utility, but what are its limits? Can LLMs learn completely novel representations in-context and flexibly deploy them to solve tasks? In other words, can LLMs construct an in-context world model? Let’s see! 👀

Today we present a new framework for measuring human-like general intelligence in machines (what some people call AGI). Conventional AI benchmarks today assess only narrow capabilities in a limited range of human activities. We propose that a more promising way to evaluate human-like general intelligence in AI systems is through a particularly strong form of general game playing: studying how and how well they play and learn to play all conceivable human games, what we call the "Multiverse of Human Games". Taking a first step towards this vision, we introduce the AI GameStore, a scalable and open-ended platform that uses LLMs with humans-in-the-loop to automatically construct standardized and containerized variants of popular human games on digital gaming platforms. As a proof of concept, we generated 100 such games based on the top charts of Apple App Store and Steam, and evaluated seven frontier vision-language models (VLMs) on short episodes of play. The best models achieved less than 10% of the human average score on the majority of the games. Check out our website to play the games, see how agents play, and build agents to solve them!

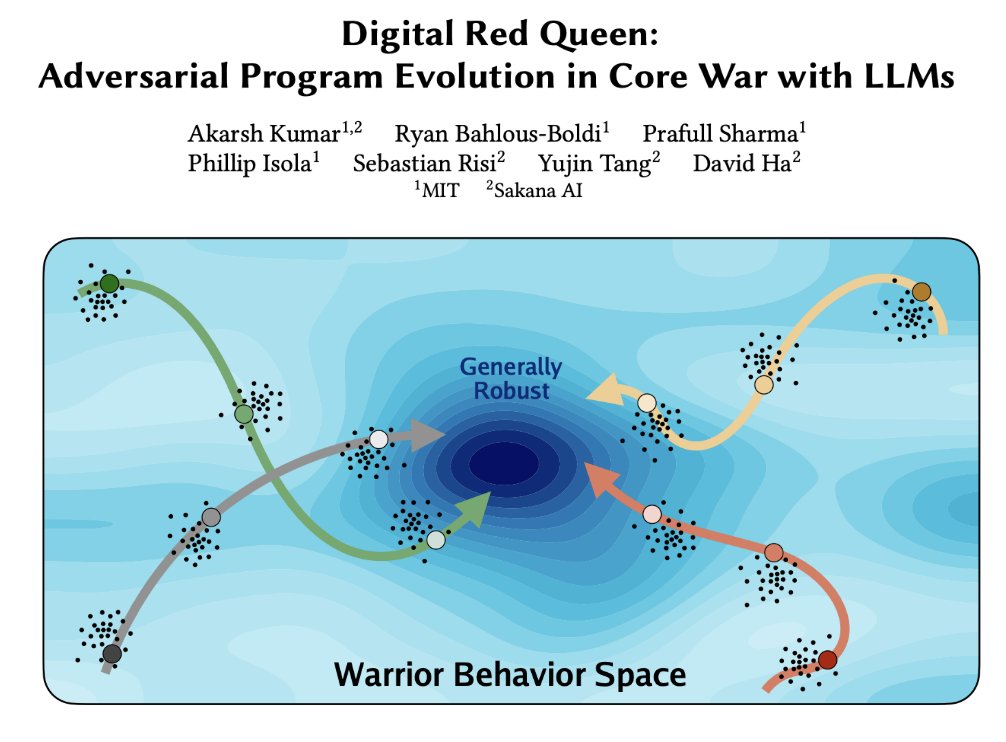

Introducing Digital Red Queen (DRQ): Adversarial Program Evolution in Core War with LLMs

Blog: sakana.ai/drq

Core War is a programming game where self-replicating assembly programs, called warriors, compete for control of a virtual machine. In this dynamic environment, where there is no distinction between code and data, warriors must crash opponents while defending themselves to survive.

In this work, we explore how LLMs can drive open-ended adversarial evolution of these programs within Core War. Our approach is inspired by the Red Queen Hypothesis from evolutionary biology: the principle that species must continually adapt and evolve simply to survive against ever-changing competitors.

We found that running our DRQ algorithm for longer durations produces warriors that become more generally robust. Most notably, we observed an emergent pressure towards convergent evolution. Independent runs, starting from completely different initial conditions, evolved toward similar general-purpose behaviors, mirroring how distinct species in nature often evolve similar traits to solve the same problems.

Simulating these adversarial dynamics in an isolated sandbox offers a glimpse into the future, where deployed LLM systems might eventually compete against one another for computational or physical resources in the real world.

This project is a collaboration between MIT and Sakana AI led by @akarshkumar0101

Full Paper (Website): pub.sakana.ai/drq/
Full Paper (arxiv): arxiv.org/abs/2601.03335
Code: github.com/SakanaAI/drq/

Check out our new Digital Red Queen work! Core War is a programming game where assembly programs fight against each other for control of a Turing-complete virtual machine. We ask what happens when an LLM drives an evolutionary arms race in this domain. We find that as you run our DRQ algorithm for longer, the resulting programs become more generally robust, while also showing evidence of convergence across independent runs - a sign of convergent evolution!