Fahad Saleh

@cryptoeconprof

Emerson/Merrill Lynch Professor @UF. Advisor @CUSEAS CDFT. FinTech Fellow @CornellMBA. Co-Organizer for CBER Forum. PhD @NYUStern MSc @Columbia BSc @CornellEng.

When this guy is the voice of sanity, we've definitely lost the mandate of heaven.

1/ The Mandate clearly states what must be protected: EF will, above all else, remain focused on an Ethereum that is censorship resistant, open source, private, and secure (CROPS), in the service of user self-sovereignty, resistant to extraction and with seamless UX. These are conditions that make Ethereum worth building, using, and defending. Read the full blog here: blog.ethereum.org/2026/03/13/ef-…

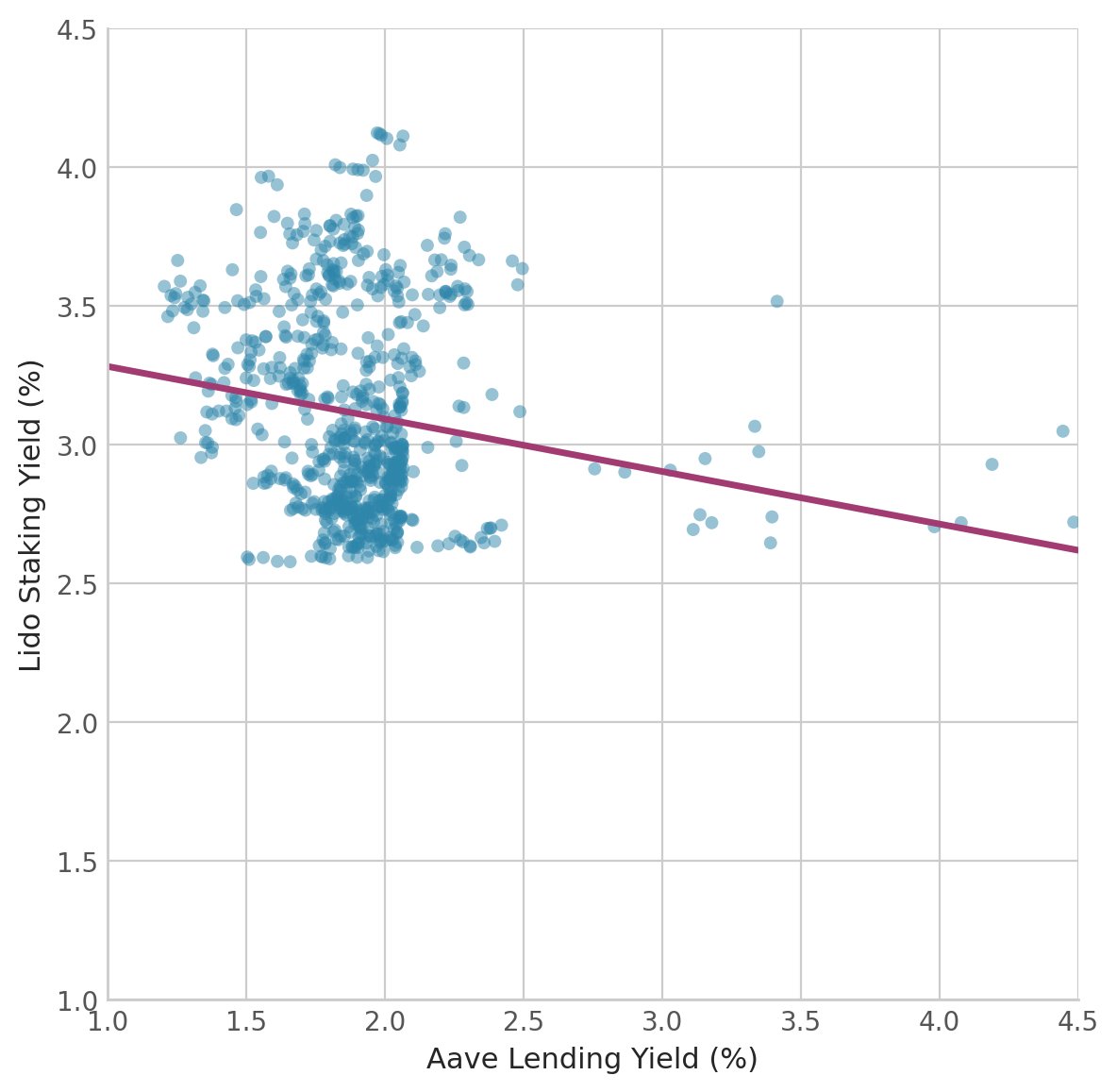

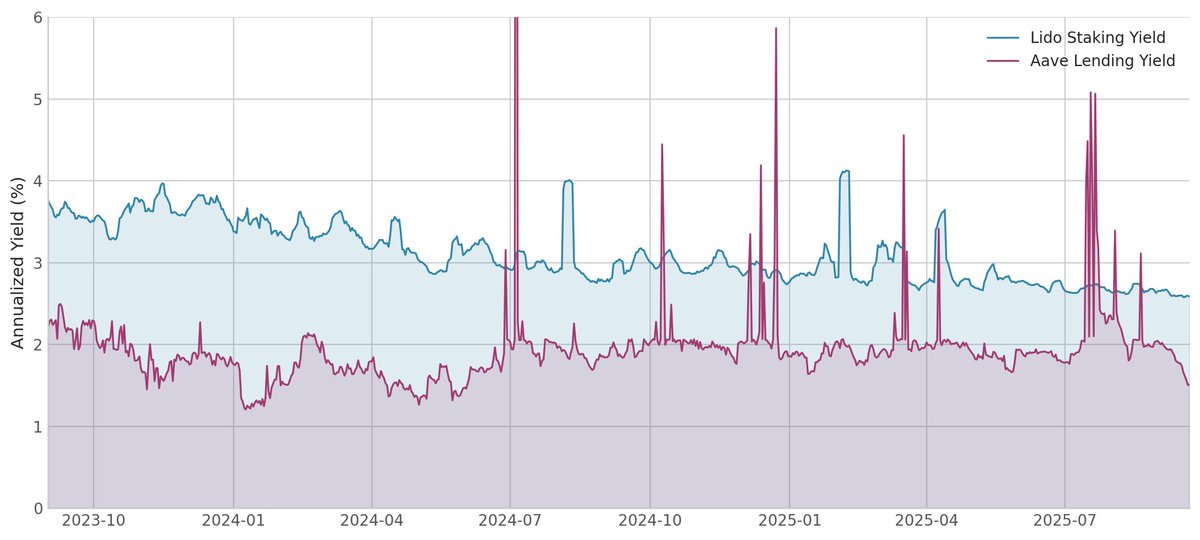

Why do so many people choose to lend out ETH on Aave for 2% instead of stake via Lido for 3% yield? In a new paper with Joel Hasbrouck, @cryptoeconprof, and @casparschwa we build a structural econometric model and estimate it with data from Aave and Lido. The result? There is a huge market inefficiency that cannot be explained by smart contract risk, depeg risk, or any other risk.

The Broken Priorities of Academia | (@AswathDamodaran)

I currently have three papers in review at "high impact" journals. One of them has been sitting there for two years. In that time my daughter was born and learned how to walk, but apparently publishing a PDF was still not possible for me. For another one, after four months in review the editor told me they cannot find a second reviewer and asked me to suggest more reviewers. A third one sent me a message in 2026 saying the PDF I uploaded was larger than 10 MB and that I should please reupload everything to make the file smaller. All of this just to eventually pay between 7,000 and 12,000 USD per paper so someone can officially approve that the science we do is "legitimate". Reminder: not a single reviewer will be compensated here. I still don't understand how we as scientists can collectively be so smart when doing science and still tolerate a system like this when it comes to sharing our findings. We should move to preprints plus open review, whether human or AI, asap. So frustrated about it. I'd suggest sharing your work on bioRxiv or medRxiv, reading and reviewing preprints when you can, and highlighting good research, especially if it is still a preprint. Try platforms like ResearchHub (that pay for peer review) and experiment with AI based reviewers for faster feedback. Instead I read this as a proposed "revolutionary" measure:

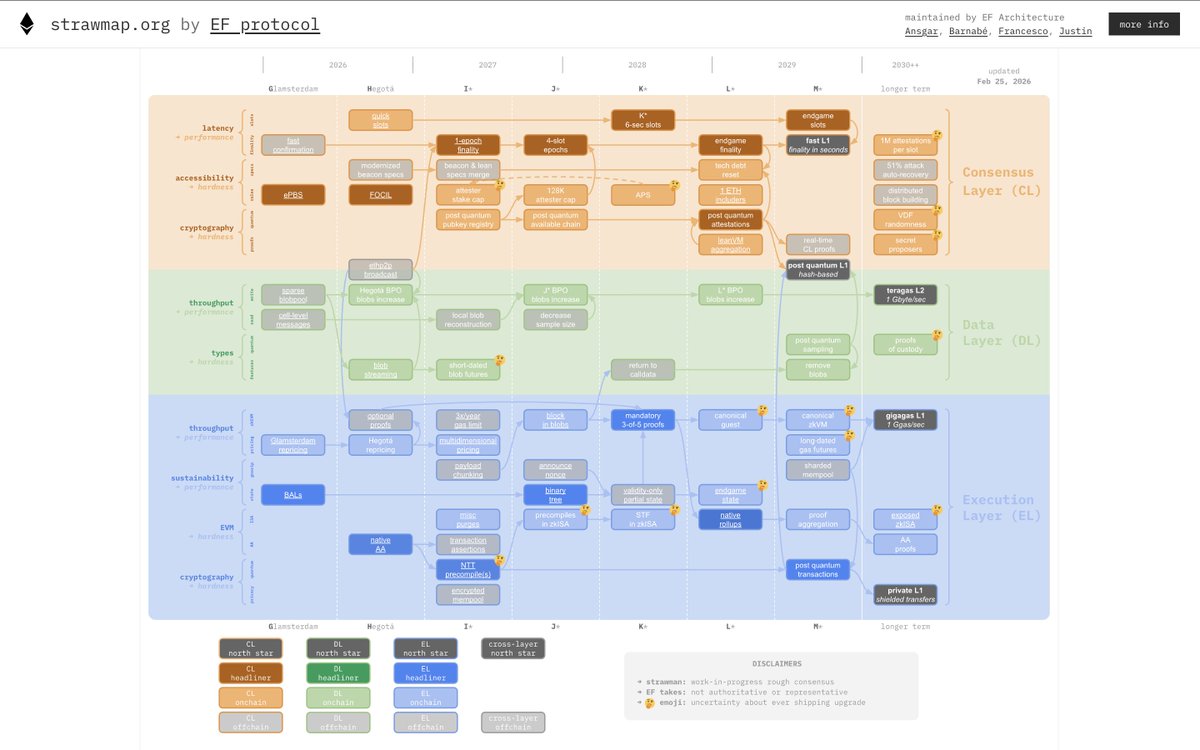

Finally, the block building pipeline. In Glamsterdam, Ethereum is getting ePBS, which lets proposers outsource to a free permissionless market of block builders.

This ensures that block builder centralization does not creep into staking centralization, but it leaves the question: what do we do about block builder centralization? And what are the _other_ problems in the block building pipeline that need to be addressed, and how?

This has both in-protocol and extra-protocol components.

## FOCIL

FOCIL is the first step into in-protocol multi-participant block building. FOCIL lets 16 randomly-selected attesters each choose a few transactions, which *must* be included somewhere in the block (the block gets rejected otherwise). This means that even if 100% of block building is taken over by one hostile actor, they cannot prevent transactions from being included, because the FOCILers will push them in.

## "Big FOCIL"

This is more speculative, but has been discussed as a possible next step. The idea is to make the FOCILs bigger, so they can include all of the transactions in the block.

We avoid duplication by having the i'th FOCIL'er by default only include (i) txs whose sender address's first hex char is i, and (ii) txs that were around but not included in the previous slot. So at the cost of one slot delay, only censored txs risk duplication.

Taking this to its logical conclusion, the builder's role could become reduced to ONLY including "MEV-relevant" transactions (eg. DEX arbitrage), and computing the state transition.

## Encrypted mempools

Encrypted mempools are one solution being explored to solve "toxic MEV": attacks such as sandwiching and frontrunning, which are exploitative against users. If a transaction is encrypted until it's included, no one gets the opportunity to "wrap" it in a hostile way.
The technical challenge is: how to guarantee validity in a mempool-friendly and inclusion-friendly way that is efficient, and what technique to use to guarantee that the transaction will actually get decrypted once the block is made (and not before).

## The transaction ingress layer

One thing often ignored in discussions of MEV, privacy, and other issues is the network layer: what happens in between a user sending out a transaction, and that transaction making it into a block? There are many risks if a hostile actor sees a tx "in the clear" inflight:

* If it's a defi trade or otherwise MEV-relevant, they can sandwich it
* In many applications, they can prepend some other action which invalidates it, not stealing money, but "griefing" you, causing you to waste time and gas fees
* If you are sending a sensitive tx through a privacy protocol, even if it's all private onchain, if you send it through an RPC, the RPC can see what you did, if you send it through the public mempool, any analytics agency that runs many nodes will see what you did

There has recently been increasing work on network-layer anonymization for transactions: exploring using Tor for routing transactions, ideas around building a custom ethereum-focused mixnet, non-mixnet designs that are more latency-minimized (but bandwidth-heavier, which is ok for transactions as they are tiny) like Flashnet, etc. This is an open design space, I expect the kohaku initiative @ncsgy will be interested in integrating pluggable support for such protocols, like it is for onchain privacy protocols.

There is also room for doing (benign, pro-user) things to transactions before including them onchain; this is very relevant for defi. Basically, we want ideal order-matching, as a passive feature of the network layer without dependence on servers. Of course enabling good uses of this without enabling sandwiching involves cryptography or other security, some important challenges there.
## Long-term distributed block building

There is a dream, that we can make Ethereum truly like BitTorrent: able to process far more transactions than any single server needs to ever coalesce locally. The challenge with this vision is that Ethereum has (and indeed a core value proposition is) synchronous shared state, so any tx could in principle depend on any other tx. This centralizes block building.

"Big FOCIL" handles this partially, and it could be done extra-protocol too, but you still need one central actor to put everything in order and execute it.

We could come up with designs that address this. One idea is to do the same thing that we want to do for state: acknowledge that >95% of Ethereum's activity doesn't really _need_ full globalness, though the 5% that does is often high-value, and create new categories of txs that are less global, and so friendly to fully distributed building, and make them much cheaper, while leaving the current tx types in place but (relatively) more expensive.

This is also an open and exciting long-term future design space.

firefly.social/post/lens/8144…
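
For readers who want the FOCIL and "Big FOCIL" rules from the post above in concrete form, here is a minimal Python sketch. It only mirrors the post's informal description: a block is rejected if it omits any transaction from an inclusion list, and the i'th of 16 FOCILers defaults to transactions whose sender address starts with hex char i, plus leftovers from the previous slot. The `Tx` type and both function names are hypothetical, made up for illustration. Nothing here reflects the actual protocol specification, which may add conditions the post leaves out.

```python
from dataclasses import dataclass

NUM_FOCILERS = 16  # from the post: 16 randomly selected attesters


@dataclass(frozen=True)
class Tx:
    """Hypothetical, simplified transaction used only for this sketch."""
    sender: str   # hex address, with or without a "0x" prefix
    payload: str


def first_hex_char(address: str) -> str:
    """Return the first hex character of an address, lowercased."""
    addr = address.lower()
    if addr.startswith("0x"):
        addr = addr[2:]
    return addr[0]


def block_satisfies_inclusion_lists(block_txs, inclusion_lists):
    """FOCIL rule as stated in the post: every transaction on every
    inclusion list must appear somewhere in the block."""
    included = set(block_txs)
    return all(tx in included for il in inclusion_lists for tx in il)


def big_focil_default_list(i, mempool, seen_last_slot, included_last_slot):
    """Default list for the i'th FOCILer under the 'Big FOCIL' idea:
    (i) txs whose sender's first hex char equals i, and
    (ii) txs that were around last slot but were not included."""
    assert 0 <= i < NUM_FOCILERS
    nibble = format(i, "x")  # 0..15 -> "0".."f"
    own = [tx for tx in mempool if first_hex_char(tx.sender) == nibble]
    carried = [
        tx for tx in seen_last_slot
        if tx not in included_last_slot and tx not in own
    ]
    return own + carried
```

In this sketch, a transaction that nobody censors is picked up by exactly one FOCILer through rule (i). Only transactions left out of the previous slot reach the other FOCILers through rule (ii), which matches the post's point that only censored txs risk duplication, at the cost of one slot of delay.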