csgm

2.3K posts

@csgbwk

french husband, robotics, ai, computer graphics

France · Joined April 2024

312 Following · 69 Followers

csgm retweeted

The amount of LoD makes everything bland; in real life it would look a lot more lush and green.

Jackson@jayveeonYT

Yeah, I don't think I've ever played a game that does this

@VictorTaelin @_inception_ai @ArtificialAnlys I think Neogen can fix RL/synthetic data for diffusion LMs... Will be experimenting when bend2 comes out..

@_inception_ai @ArtificialAnlys This approach has enormous potential. I suppose with a larger run this would approach the intelligence of the leading models?

Mercury 2 is in a league of its own.

1,200 tok/s at comparable quality to speed-optimized autoregressive models, per @ArtificialAnlys.

@IterIntellectus Even easier: terraform Earth in places where it's annoying. We have most of the tech; we just need political will and scaling.

it’s terrifying that people still don’t fully realize you can terraform planets

Curiosity@CuriosityonX

It’s terrifying that people still don't fully realize there is no PLANET B. This is it.

@VictorTaelin Could it be that Anthropic and OpenAI trained on your own input for the past 3 years, which would be why they outperform so much?

Also impressive to see Gemini perform so well on all benchmarks, even private ones, while still being completely unusable in real life.

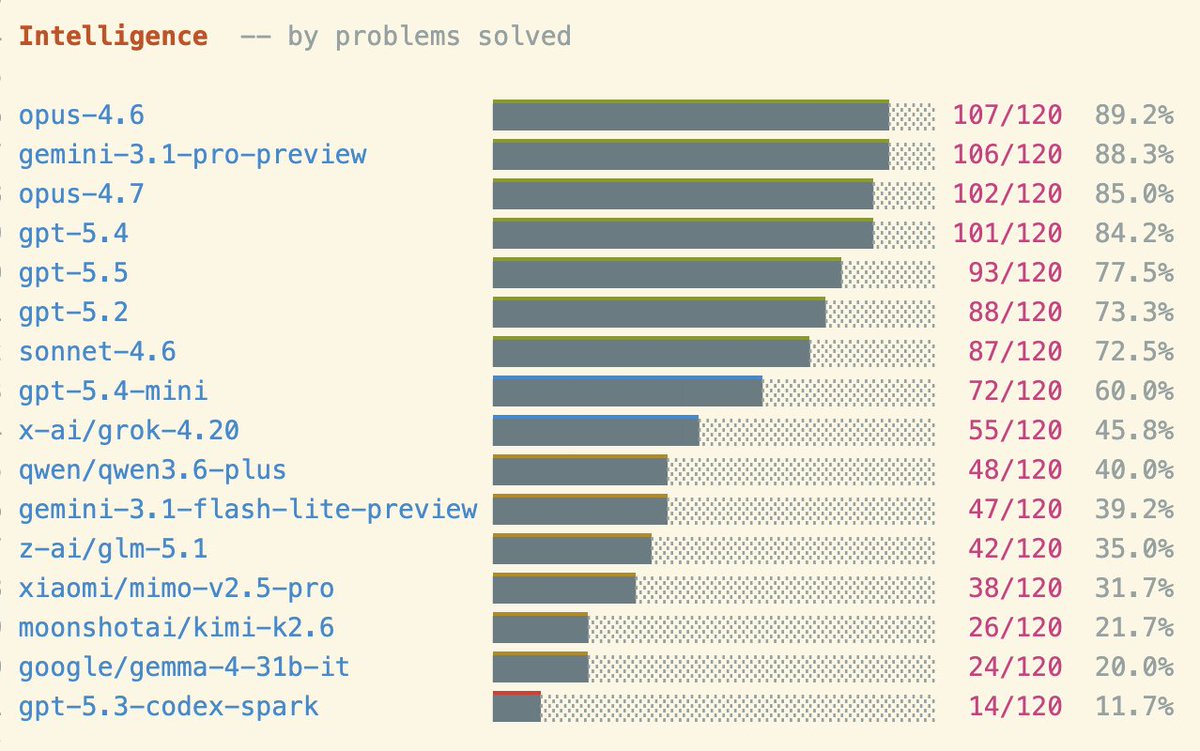

Introducing LamBench . . .

You asked me to make a benchmark, so I made it. It is a simple, old style Q&A consisting of 120 fresh λ-calculus programming questions. Some are easy, like "implement add for λ-encoded nats". Some are harder, like "derive a generic fold for arbitrary λ-encodings".

It measures:

- intelligence (% tasks completed)

- elegance (BLC-length of solutions)

- speed (completion time)

Basically what I care about, other than long context.

I made it today because I was excited about GPT 5.5.

It didn't do too well ):

(My first-day impression is that I can't tell the difference between GPT 5.5 and GPT 5.4. I would be lying if I said otherwise. I'd not be able to distinguish in a blind test. I need more time. It is much faster though.)

This is a new, simple bench, so expect bugs.

Especially on OpenRouter models. I'll retest soon.

Also, it was born saturated. V2 will be harder...

↓ Link and more charts below ↓

csgm retweeted

The one from l'X is on the left in the photo

Quantum optics enjoyer@shpostx

My intern from Polytechnique is getting along with the one from Polytech Nice

csgm retweeted

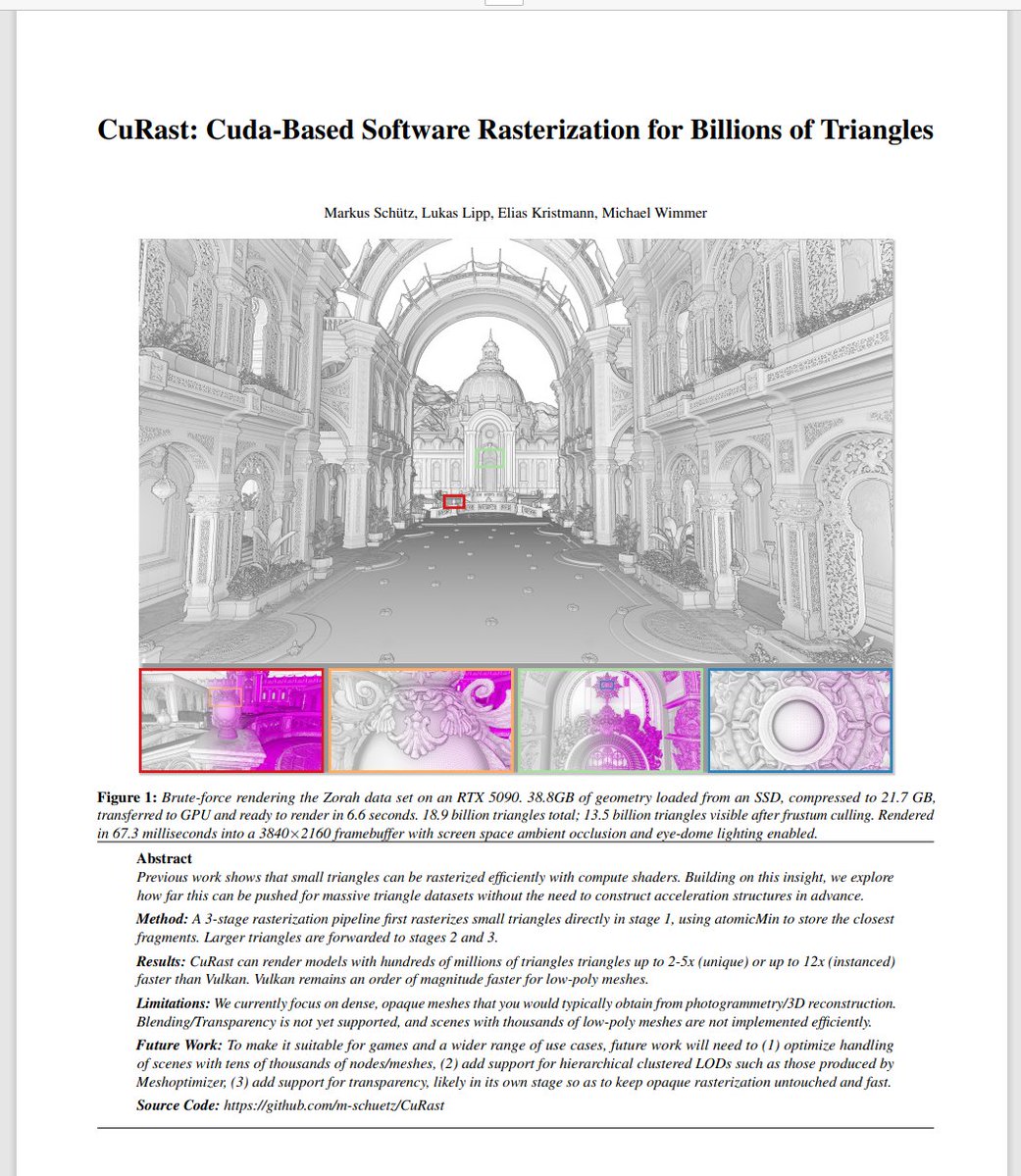

New Paper🙂

Nanite has shown that small triangles can be rendered fast in compute. We're exploring how fast for large meshes with up to 18.9 billion triangles, without the need to precompute LOD structures.

Paper: github.com/m-schuetz/CuRa…

Source: github.com/m-schuetz/CuRa…

"Well, we can do an internship in Gaussian splatting if you want, but I can't give you a subject yet since it will probably be solved by June"

PhD_Genie@PhD_Genie

Watching a high-impact paper getting published on your exact thesis topic notion.

Extremely disappointed with what I initially thought was a promising team. But this is just really tasteless.

Atelier Missor@AtelierMissor_

This 50 ft Prometheus will soon be shipped to the USA. It costs around 1 million to build. We want to build larger and larger Prometheus statues everywhere across the West.

@zuhaitz_dev I kind of got out of the compilation field sadly :/ I chose computer graphics and robotics, but could have gone either way tbh

I'm so glad I only wrote compilers in OCaml and Rust; ts looks hard in C 😭

Zuhaitz@zuhaitz_dev

For those who think that's bad, you should write a compiler ;p
