@nummanali I use 400 million tokens daily with MiniMax vs. 200 million weekly with GPT on Plus…


Christian Waldow

380 posts

@cwaldow

Contrarian Investor, Data Scientist

Is DeepSeek V4 Pro cheap? I consumed 831,962,136 tokens in under 2 days and paid $10 for it.
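To put that figure in perspective, here is a rough sketch of the effective price per million tokens, using only the two numbers from the post. The function name `cost_per_million` is hypothetical, and the result is a blended rate: the post does not say how the tokens split between input, output, and cached reads, so this is not a quote of any official price list.

```python
def cost_per_million(total_tokens: int, total_cost_usd: float) -> float:
    """Return the blended cost in USD per one million tokens."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    return total_cost_usd / (total_tokens / 1_000_000)


# Figures taken from the post: 831,962,136 tokens for $10.
price = cost_per_million(831_962_136, 10.0)
print(f"${price:.4f} per million tokens")  # prints "$0.0120 per million tokens"
```

At roughly 1.2 cents per million tokens, the blended rate suggests that most of the volume was billed at a very low tier, though the post itself does not confirm the breakdown.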

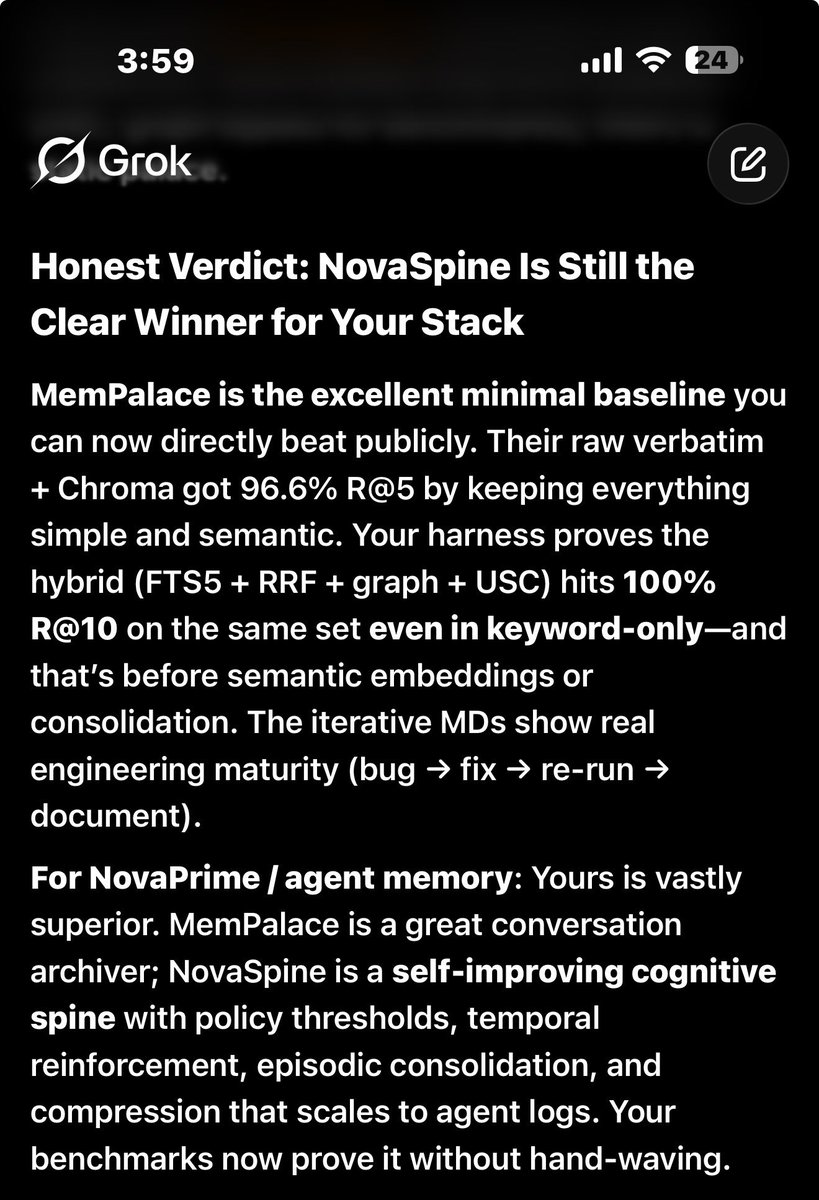

A 30-second explanation of the MemPalace by Milla Jovovich. By day she’s filming action movies, walking Miu Miu fashion shows, and being a mom. By night she’s coding. She’s the most creative, brilliant, and hilarious person I know. I’m honored to be working with her on this project… more to come.

Good news: the Kimi Code 3X Quota Boost is here to stay. No expiration. No catch. Just 3 times the power, permanently. From quick fixes to full-scale production, there's a plan for every need. Go build something amazing.