Sotnyk.етн

100 posts

Sotnyk.етн

@d3magexgod

Will use this account (not) only for Ethereum faucet.

Katılım Ağustos 2021

179 Takip Edilen33 Takipçiler

yes and now in two places, cross-experiment and intra-experiment. Agents see previous iterations, we have a near miss classification to surface past tries (1.5% regression, probably this can be optimized later to be more narrow)

Outside of provers one idea could be LoC minimization for better auditability.

I think autoresearch is first implementation of a larger pattern of new program designs, likely with multi-agent orchestration, but distinct from OpenClaw direction (though sharing similarities).

English

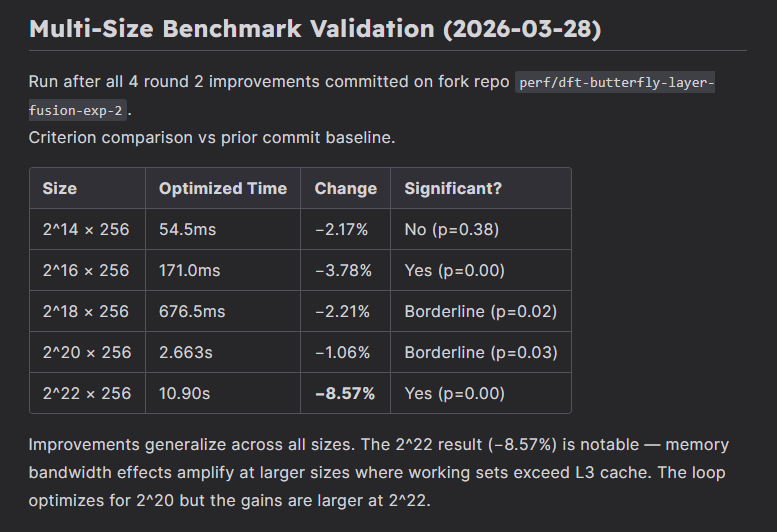

Round 2 of zk-autoresearch: Claude ran 20 iterations autonomously optimizing Plonky3's DFT/NTT. We stopped after 20 iterations. Found key agent-infra improvements meant further iterations would waste tokens without new signal.

4 improvements kept. 16 reverted. Cumulative speedup from baseline across Round 1 + Round 2: 4.1%

The 2^22 result (−8.57%) was an outlier, memory bandwidth effects amplify when working sets exceed L3 cache. Full results in the table above.

The repo was first starred by @drakefjustin (EF).

The most interesting finding was a runaway agent:

The agent discovered an incentive misalignment: failing to write grants another 20k token budget. So it kept failing.

Iter 10 ran for ~30 minutes and consumed an estimated 150k+ tokens before writing code.

In the end it submitted a regression of -1.22%, specifically exploring what we have decided to characterize as a dead-end for the next experiment in CLAUDE.md.

The agent persisted in submitting 50k-character rewrites of radix_2_dit_parallel.rs instead of targeted fixes.

- Agent was stuck for optimizations and defaulted to full rewrites instead of surgical improvements — updated agent-infra to prevent this in the next experiment

- Optimizing a prover is inherently different from Karpathy's target: his setup is a continuous, differentiable parameter space. Ours: discrete code changes, bitwise-correct ZK constraints, compiler behavior shaped by CPU microarchitecture. The cliff between "compiler handles it" and "code regresses" is steep.

- Model scaling would improve output, but risks masking existing shortcomings, the current decision is to optimize agent-infra until clear signs show Sonnet is not capable, then yield those benefits with model scaling once fixes are validated

All 20 iterations respected hard constraints, no interface changes, no security parameter touches. The Plonky3 team added new testing in PR-1494 that we incorporated for experiment 2.

@CreatorsOfChaos reviewed the methodology and flagged real gaps: tests run in debug not release, benchmark workload never tested for correctness directly. We are working through the issues publicly, most of them have been addressed by today's PRs.

For Round 3 we have implemented:

- Streaming: agent reasoning visible live, full thinking saved to experiment log

- Supply chain resilience and audit trail + testing

- Loop reliability, static diff inspection, three-stage correctness gate

- Cross-experiment agent memory, surgical precision and documented proven techniques

$153.25 across 2 experiments. ~$1.09/iter on Sonnet. Opus comes after Sonnet infrastructure is fully validated; every dead end mapped on Sonnet is an Opus iteration saved.

We have received two offers for contributions, one small grant and one LLM contribution.

Repo is open for contributions, we are mainly interested in:

- Potential security vectors

- Generalization ideas

- Agent-infra improvements

The roadmap:

- Optimize on Sonnet, shift to Opus

- Expand to other crates on Plonky3 and start initial generalization

- Expand to other prover repos with easy setup; this requires significant work on generalization frameworks

Feel free to message if you want to contribute.

Link to repo: github.com/Barnadrot/zk-a…

14 issues have been opened, one of them is an upstream improvement for the Plonky3 repo (pending submission)

Link to round 1 PR on Plonky3 repo: github.com/Plonky3/Plonky…

Round 2 changes can be tracked on the fork currently (pending upstream submission)

github.com/Barnadrot/Plon…

English

@realbarnakiss So it's safe to assume that you're fine-tuning the model. Except you're not updating the weights directly, but store them in markdown. That's a very cool idea! I'm wondering what other cases could be approached with this technique.

English

@d3magexgod Loop starts fresh, the setup is split between CLAUDE.md (upstream repo related) and loop.py (agent logic related)

Yesterday we have added cross-experiment memory to the system, Round 3 will have info about previous rounds.

English

@alexanderlee314 It is not, they traded the number of qubits for the number of gates. Still not viable anytime soon.

English

Excited for our first grant and to finally get to work on stack scheduling again.

The new algo will draw on insights from academia, my experience hand writing contracts in Huff, my balls prototype (github.com/philogy/balls) and more.

Plank@plankevm

Proud to announce our first grant from @argotorg to design a next gen stack scheduling algorithm for the EVM and implement it for our IR. The project's goals are: - excellent codegen quality - no stack too deep - adaptable to @official_fe's Sontina IR and @solidity_lang

English

Sotnyk.етн retweetledi

Sotnyk.етн retweetledi

I built ZK selective disclosure for eIDAS 2.0 credentials.

zk-eidas.com — 15 Circom circuits, Groth16, ECDSA P-256 in-circuit. Contracts without personal data. Proofs print on paper. Verify with a phone camera. Offline.

English

@oleh_bc I don't think that we'll have such capabilities in the near future. Would be cool to, though.

English

@d3magexgod I mean instant LLM output for vibe coding. You get the whole new project on each keystroke

English

@cartoonitunes You're my favorite history channel. Thank you very much!

English

@archethect Gud stuff, I always wondered why no one asked the models to shut up and confirm what they imagined. What models did you use in your test setup? Two instances of Claude?

English

The problem was never "can AI find bugs." It always could.

The problem was "can AI shut up about bugs that aren't real."

Turns out you just needed a second AI whose entire purpose is to call bullshit.

sc-auditor V2 is live, open source and a substantional improvement over V1 at that! 👇

github.com/Archethect/sc-…

English

Sotnyk.етн retweetledi

@fileverse @aboutcircles yeah, I was talking about Collab with gnosis, too bad I missed it

STILL LOVING YOU

English

@d3magexgod AWWWWWW 💃💛

Tyyy anooon! Ur using both the gnosis app and ddocs dot new?

💾For the custom @aboutcircles floppy: it was a one time collab with them!

💾If a new custom floppy drops on the gnosis app: would you want us to ping you?

GIF

English

hey @fileverse

I love you!

is there a chance you will resupply fileverse disks on circles?

English

@philogy @andreaslbigger You know, this feels like a discovery week for me. Firstly, Plank, then Ora, now this.

English

@andreaslbigger Holy, very cool. Edge is also one of my inspirations for Plank. You plan on maintaining/building this long-term?

English

Introducing Edge, a high level, strongly statically typed, multi-paradigm domain specific language for the Ethereum Virtual Machine (EVM).

github.com/refcell/edge-rs

English

@philogy @d3magexgod @plankevm Ora uses Plank (aka Sensei IR) to lower to bytecode. They are connected but have different roles.

You can think Ora as a modern take on Refinements, SMT formal verification and strong comptime.

TLDR: Ora helps you every step of the way. Ora loves auditors :D

English

Our first blog post is up! We explain our high-level vision, design & roadmap. 🏗️

plankevm.github.io

what happened to the website design? sorry no time, we need to get back to shi— 🚀💻

English

They share some similarities (e.g. some comptime overlap) but the way I see it:

Plank prioritizes raw capabilities & features first but in exchange will force the dev to build more things from scratch (at least initially).

While Ora is more high-level & batteries included and is prioritizing refinement types + first class SMT-based formal verification.

but maybe @Logicb0x has a different take

English