DML

3.3K posts

@damipedia

MUFC | Cybersecurity | AppSec Engineer | The quicker you let go of old cheese, the sooner you find new cheese.~~~~~ Warrior and Survivor

We’ve identified a security incident that involved unauthorized access to certain internal Vercel systems, impacting a limited subset of customers. Please see our security bulletin: vercel.com/kb/bulletin/ve…

🚨 AWS DevOps Agent is finally here! On March 31, 2026, AWS DevOps Agent became generally available. This is actually a big deal. It can:
→ Generate CI/CD pipelines
→ Debug failed deployments
→ Suggest infrastructure changes
→ Analyze logs and incidents
→ Help with Terraform & CloudFormation
→ Recommend cost optimizations
→ Explain AWS architecture issues
Basically, it’s like having a junior DevOps engineer inside AWS. DevOps is slowly becoming AI-assisted operations. Source: aws.amazon.com/devops-agent/

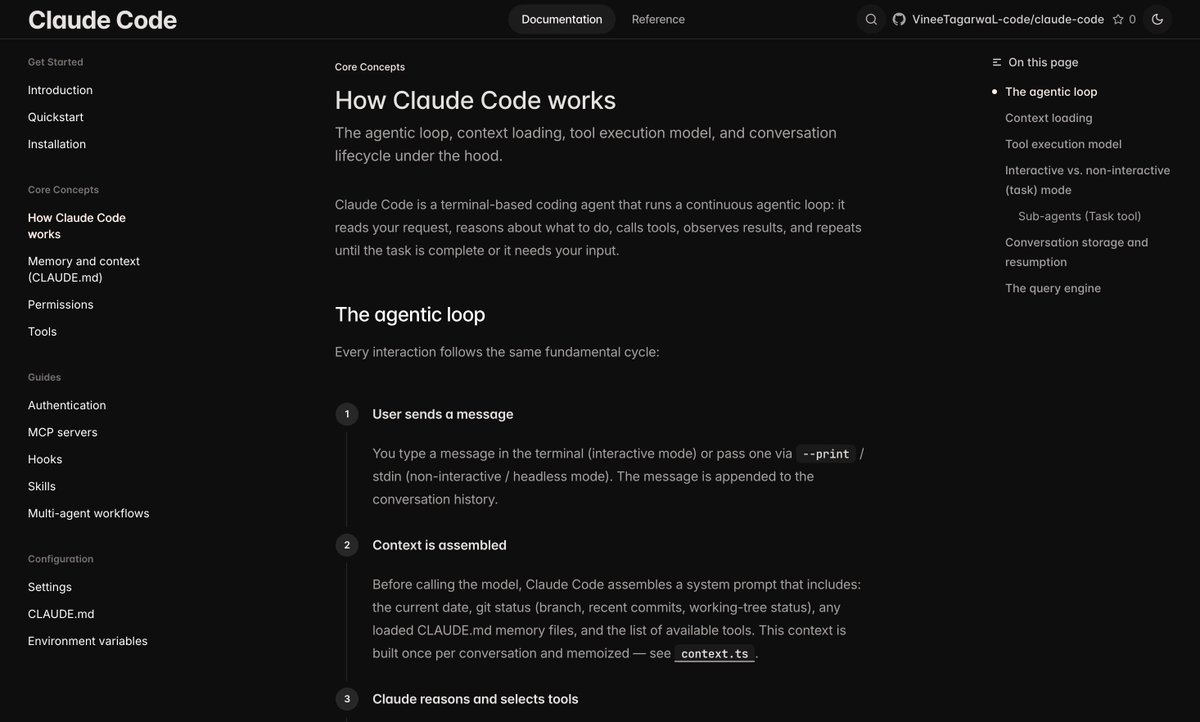

Claude Code source code has been leaked via a source map file in their npm package! Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip
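A source map (Source Map revision 3) may carry the original files verbatim in its optional `sourcesContent` array, which is one common way a published `.map` file exposes source code. The post does not say which field was involved in this case. The Python sketch below is a hypothetical pre-publish audit that flags such maps in a build directory; the function names and the directory being scanned are assumptions for illustration, not part of npm or any existing tool.

```python
import json
from pathlib import Path


def embedded_sources(source_map_text: str) -> list[str]:
    """Return the entries in `sources` whose original text is embedded
    in the map's optional `sourcesContent` array (Source Map v3 fields)."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    # sourcesContent is parallel to sources; a null entry means "not embedded".
    return [src for src, body in zip(sources, contents) if body is not None]


def audit_package(package_dir: str) -> dict[str, list[str]]:
    """Scan a (hypothetical) build output directory for *.map files
    that would ship original source code if the directory were published."""
    findings: dict[str, list[str]] = {}
    for map_path in Path(package_dir).rglob("*.map"):
        leaked = embedded_sources(map_path.read_text(encoding="utf-8"))
        if leaked:
            findings[str(map_path)] = leaked
    return findings
```

Separately, running `npm pack --dry-run` lists the files that would go into the published tarball, so a stray `.map` file is visible there before anything is pushed to the registry.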


Do you know which data structure is used for a browser’s Back/Forward navigation history???
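One common textbook answer is two stacks: Back pops from a "back" stack and pushes the current page onto a "forward" stack, Forward does the reverse, and visiting a new page clears the forward stack. The Python sketch below models that idea with plain string URLs; the class and method names are hypothetical, and real browsers keep a richer per-tab session history, so treat this as a simplified model rather than how any specific browser is implemented.

```python
class BrowserHistory:
    """Back/Forward navigation modeled with two stacks (simplified sketch)."""

    def __init__(self, homepage: str) -> None:
        self.current = homepage
        self.back_stack: list[str] = []     # pages reachable with Back
        self.forward_stack: list[str] = []  # pages reachable with Forward

    def visit(self, url: str) -> None:
        # A new visit pushes the current page onto the back stack
        # and discards any forward history.
        self.back_stack.append(self.current)
        self.current = url
        self.forward_stack.clear()

    def back(self) -> str:
        # Move one page back if possible; otherwise stay put.
        if self.back_stack:
            self.forward_stack.append(self.current)
            self.current = self.back_stack.pop()
        return self.current

    def forward(self) -> str:
        # Move one page forward if possible; otherwise stay put.
        if self.forward_stack:
            self.back_stack.append(self.current)
            self.current = self.forward_stack.pop()
        return self.current


h = BrowserHistory("a.com")
h.visit("b.com")
h.visit("c.com")
print(h.back())     # b.com
print(h.back())     # a.com
print(h.forward())  # b.com
h.visit("d.com")    # clears the forward stack (c.com is gone)
print(h.forward())  # d.com
```

Both stacks make Back and Forward O(1); an equivalent design is a single list plus a cursor index, which truncates everything after the cursor on a new visit.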

Peter Steinberger is joining OpenAI to drive the next generation of personal agents. He is a genius with a lot of amazing ideas about the future of very smart agents interacting with each other to do very useful things for people. We expect this will quickly become core to our product offerings. OpenClaw will live in a foundation as an open source project that OpenAI will continue to support. The future is going to be extremely multi-agent and it's important to us to support open source as part of that.

If I were early in my career in 2025 and wanting to land a position in offensive security, I’m not sure I’d even bother applying for offensive security roles. It’s way too competitive. As I see it, tech/cyber is a really broad field with tons of job areas. The really niche ones (like red teaming or penetration testing) are the ones that everyone is always fighting over. So, let them have it. Half of them won’t last in the role or they’ll bail in a year or two. Instead, I’d target adjacent-to-offsec roles at companies where I’d ideally want to be doing offensive security work. I say this because a lot of companies (not all, I know) like to hire from within. But even if that’s not the case, it at least provides a great opportunity to network and build relationships with that team while trying to work your way over. Every job I’ve had is the result of investing in relationships and knowing someone. I think this is what I’d do.