Daeun Lee

@danadaeun

PhD student @unccs advised by @mohitban47 | Intern @AIatMeta, @AdobeResearch | Multimodal, Video, Embodied AI, Post-training, RL

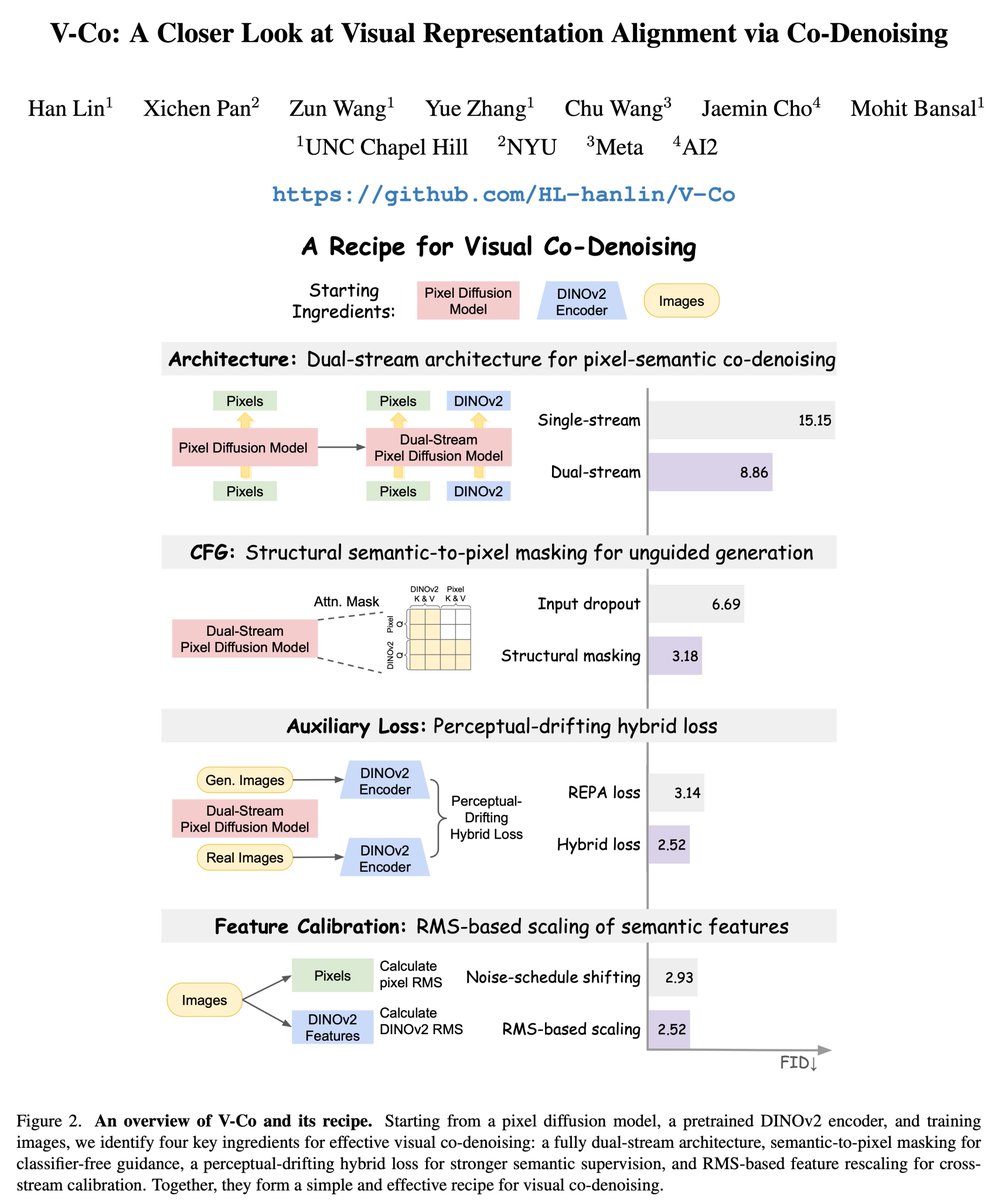

🚀 Excited to share V-Co, a diffusion model that jointly denoises pixels and pretrained semantic features (e.g., DINO).

We find a simple but effective recipe:

1️⃣ architecture matters a lot → fully dual-stream JiT

2️⃣ CFG needs a better unconditional branch → semantic-to-pixel masking for CFG

3️⃣ the best semantic supervision is hybrid → perceptual-drifting hybrid loss

4️⃣ calibration is essential → RMS-based feature rescaling

We conducted a systematic study on V-Co, which is highly competitive at a comparable scale and outperforms JiT-G/16 (~2B, FID 1.82) with fewer training epochs. 🧵 👇

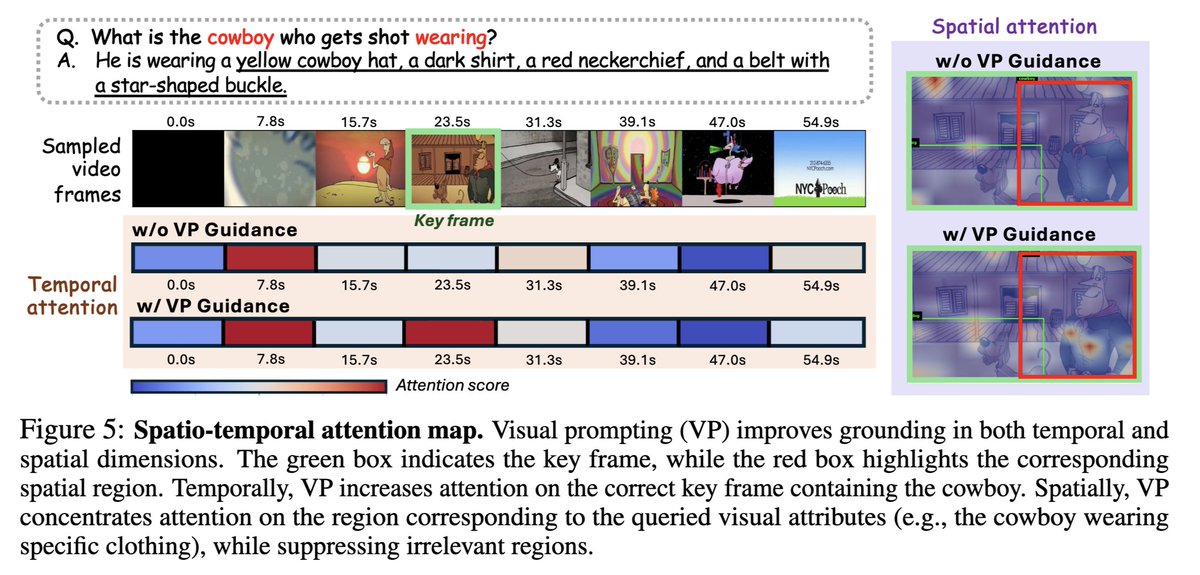

🚨 Excited to share VisionCoach, an RL framework for reinforcing grounded video reasoning via visual-perception prompting and self-distillation!

🧠 Video reasoning models often miss where to look or rely on language priors. Instead of only supervising final answers, we encourage the model to learn to attend to the right visual evidence.

⚽️ VisionCoach uses RL to reward correct visual attention, with dynamic visual prompting as a training-time coach for better spatio-temporal grounding, while keeping inference simple and tool-free via self-distillation.

⭐️ Achieves state-of-the-art zero-shot performance across video reasoning, video understanding, and temporal grounding benchmarks (V-STAR, VideoMME, World-Sense, VideoMMMU, PerceptionTest, and Charades-STA). 👇🧵
