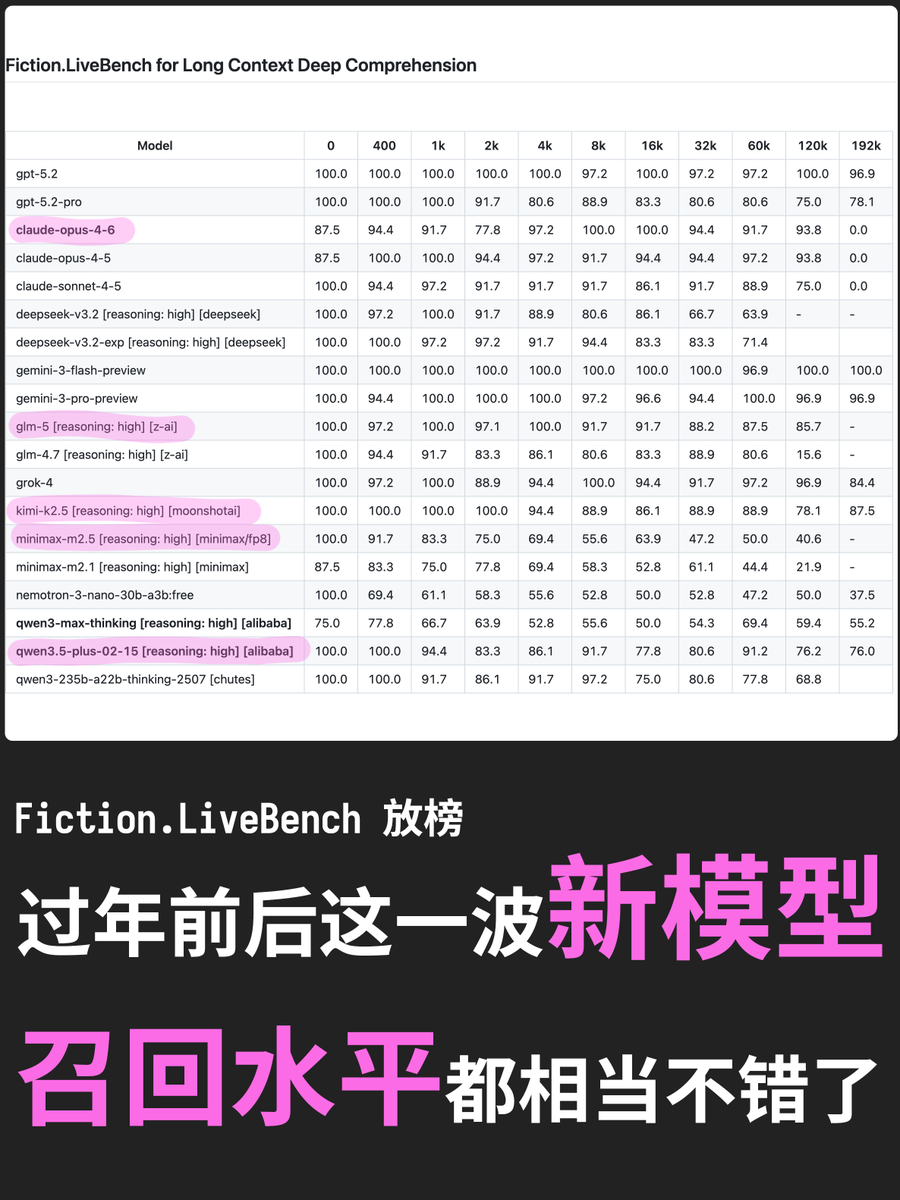

alphaXiv (@askalphaxiv)

DeepSeek just dropped a banger paper to wrap up 2025

"mHC: Manifold-Constrained Hyper-Connections"

Hyper-Connections turn the single residual “highway” in transformers into n parallel lanes, and each layer learns how to shuffle and share signal between lanes.

But if each layer can arbitrarily amplify or shrink lanes, the product of those shuffles across depth makes signals/gradients blow up or fade out.
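To see why that compounds, here is a toy sketch (not the paper's setup). It stacks the same nonnegative n×n lane-mixing matrix across depth and tracks the largest row sum of the product as a rough gain proxy. The helper names (`mixing_matrix`, `chain_gain`) and the choice of metric are assumptions made for illustration.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def max_row_sum(m):
    """Largest absolute row sum (the matrix infinity norm)."""
    return max(sum(abs(x) for x in row) for row in m)


def mixing_matrix(n, row_total):
    """Hypothetical lane mixer: half of each row's mass stays on the
    diagonal, the rest is spread evenly over the other lanes.
    Every row sums to `row_total`."""
    off_diag = row_total * 0.5 / (n - 1)
    return [[row_total * 0.5 if i == j else off_diag for j in range(n)]
            for i in range(n)]


def chain_gain(layer, depth):
    """Gain proxy for `depth` stacked copies of the same mixing matrix."""
    n = len(layer)
    prod = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(depth):
        prod = matmul(layer, prod)
    return max_row_sum(prod)


if __name__ == "__main__":
    for total in (0.8, 1.0, 1.2):
        print(total, chain_gain(mixing_matrix(4, total), depth=40))
```

Rows that sum to slightly more than 1 make the gain grow geometrically with depth, rows that sum to slightly less make it decay, and only rows that sum to exactly 1 hold it steady.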

So they force each shuffle to be mass-conserving: a doubly stochastic matrix (nonnegative, every row and column sums to 1). Each layer can only redistribute signal across lanes, not create or destroy it. And because a product of doubly stochastic matrices is itself doubly stochastic, the deep skip-path stays stable while features still mix!
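One standard way to get a doubly stochastic matrix from unconstrained parameters is Sinkhorn normalization: exponentiate, then alternately rescale rows and columns. The sketch below assumes that approach, since this post does not say how the constraint is enforced. The function names, iteration count, and seed are illustrative choices.

```python
import math
import random


def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def sinkhorn(logits, iters=50):
    """Map a square matrix of real logits to an (approximately) doubly
    stochastic matrix by alternating row and column normalization."""
    n = len(logits)
    m = [[math.exp(x) for x in row] for row in logits]  # strictly positive
    for _ in range(iters):
        m = [[x / sum(row) for x in row] for row in m]  # rows sum to 1
        col = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col[j] for j in range(n)] for i in range(n)]  # cols
    return m


def row_sums(m):
    return [sum(row) for row in m]


def col_sums(m):
    return [sum(m[i][j] for i in range(len(m))) for j in range(len(m))]


def random_mixer(rng, n=4):
    """Hypothetical per-layer mixer: random logits pushed through Sinkhorn."""
    logits = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    return sinkhorn(logits)


if __name__ == "__main__":
    rng = random.Random(0)
    prod = random_mixer(rng)
    for _ in range(29):  # 30 stacked layers in total
        prod = matmul(random_mixer(rng), prod)
    print("row sums:", row_sums(prod))
    print("col sums:", col_sums(prod))
```

Even after 30 different random mixers are multiplied together, every row and column of the product still sums to about 1, so signal is reshuffled across lanes without being amplified or lost.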

With n=4 lanes, it adds ~6.7% training time but cuts final loss by ~0.02 and keeps the worst-case backward gain at ~1.6 (vs ~3000 without the constraint), with consistent benchmark wins.