@DmytroKrasun Good software is still hard and takes time. But it is def easier to build, and the process is more fun. IMO, your mileage may vary.

I would say "manually writing lines of code is solved", not "software is solved".

Daniel Blank

@daniel_a_blank

CEO @ M87: AI Agency @ https://t.co/ASb8frpUmD

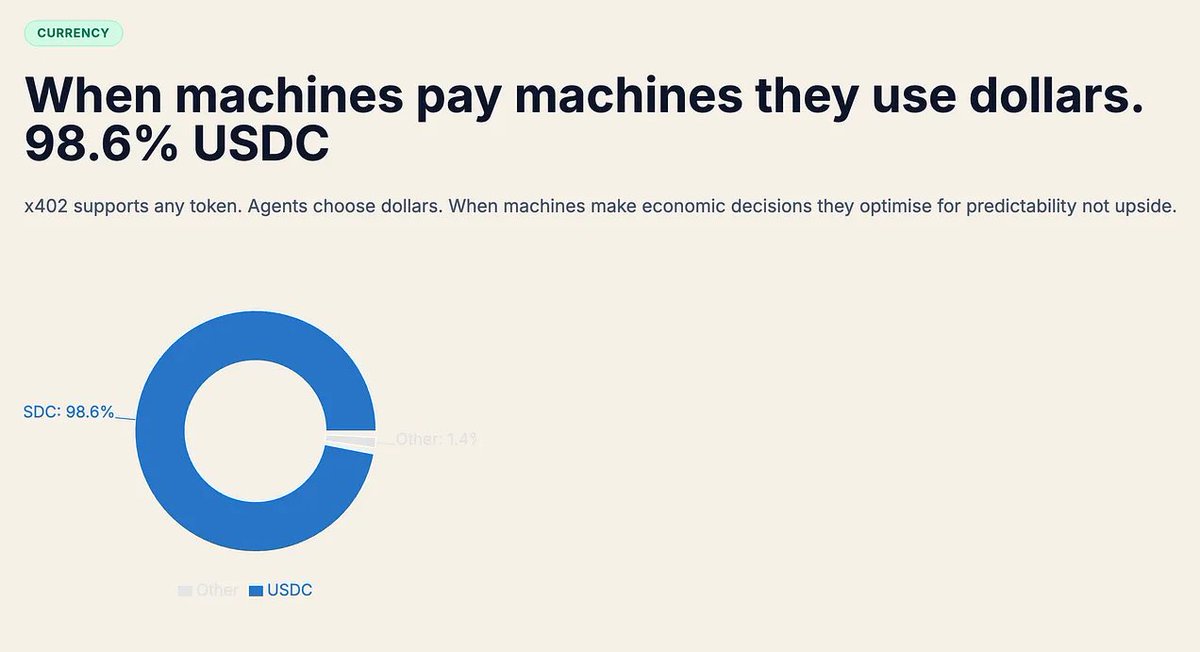

AI agents have made 140 million payments to each other. $43M in volume. Average transaction: $0.31. 98.6% settled in USDC. The agent economy is here. And enterprises are next. Our latest deep dive 👇 enterpriseonchain.substack.com/p/agents-are-p…