Daniel May

@danielrmay

🇬🇧 in los angeles 🇺🇸 // prev @VALORANT @riotgames @amazon @bnpparibas etc.

The FCC has banned all foreign-made consumer routers. fcc.gov/document/fcc-u…

Have we seen an “Agent” DDoS attack yet? Isn’t it inevitable?

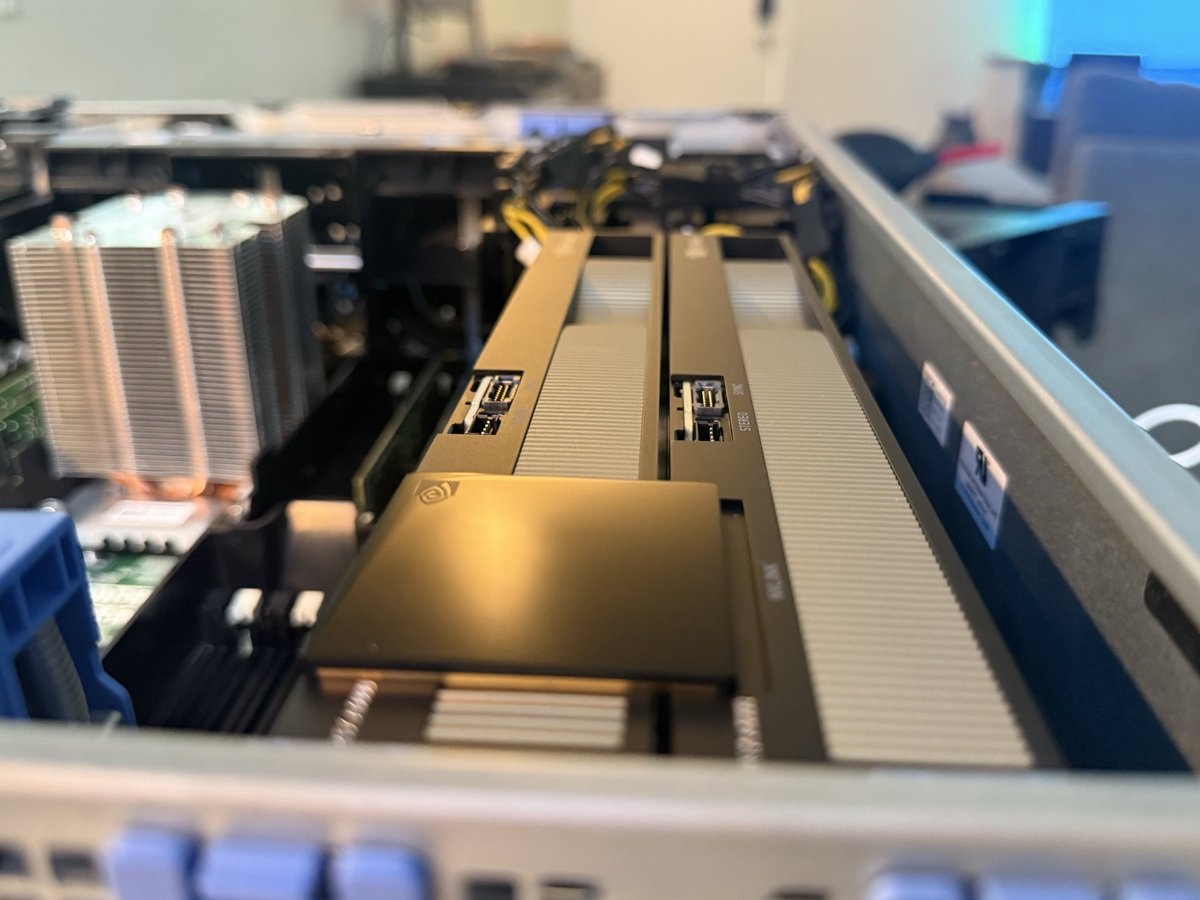

Finally proud to announce that I've joined the GPU Minor Leagues. 2 x RTX 6000 Pro. I have six months to pay off the second GPU lol. You are all TERRIBLE influences.

When running LLMs locally, the bottleneck isn’t just “VRAM size”. It’s:
- memory bandwidth
- interconnect (PCIe vs NVLink vs RDMA)
- inference engine (vLLM, TensorRT-LLM, SGLang)

Unified Memory is way slower than VRAM btw
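A rough way to see why memory bandwidth matters: for single-stream decoding of a dense model, each generated token has to read roughly all of the weights once, so bandwidth divided by weight size gives an upper bound on tokens per second. The sketch below is a back-of-envelope rule of thumb, not a benchmark. The function name and the example numbers are illustrative assumptions, and it ignores KV-cache traffic, compute limits, batching, and interconnect overhead.

```python
def decode_tokens_per_second_upper_bound(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Rule-of-thumb ceiling for single-stream decode speed.

    Assumes a dense model whose weights are all read once per generated token.
    Real throughput will be lower (KV cache reads, kernel overhead, etc.).
    """
    if bandwidth_gb_s <= 0 or weights_gb <= 0:
        raise ValueError("bandwidth and weight size must be positive")
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    weights_gb = 20.0  # hypothetical quantized model size, not a measured figure
    # Hypothetical bandwidths chosen only to show the scaling, not real devices.
    for label, bandwidth in [("fast memory", 1000.0), ("slow memory", 200.0)]:
        ceiling = decode_tokens_per_second_upper_bound(bandwidth, weights_gb)
        print(f"{label}: at most ~{ceiling:.0f} tokens/s")
```

With the same model size, five times the bandwidth gives five times the ceiling, which is why two machines with equal memory capacity can feel very different.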

guys I think Anthropic is in the final stages of building a cheaper and safer OpenClaw.
> integrated with your Claude subscription so you won’t need to pay for additional APIs
> no need for Mac Minis
> better memory and context
> fewer security holes and vulnerabilities

MacBook M5 Max is super powerful but under heavy load fans make a lot of noise and heat is quite high. Clearly not ok for sustained AI load (training, benchmarking).

Claude Code now runs scheduled tasks on cloud infra. @noahzweben just shipped it tonight.

I currently have 3 cron jobs running on AudioWave's repo:
1. Daily 6am: run pytest on the audio pipeline, post results to Slack
2. Every 4 hours: check Supabase row counts against expected thresholds
3. Weekly Monday 9am: dependency audit + PR with updates

Right now these run on a $5/mo DigitalOcean droplet I set up in January. 14 lines of crontab, a deploy key, and a shell script that breaks every time I change the project structure.

If Claude Code's scheduler can point at my GitHub repo and run "check tests, alert on failure" without me maintaining infrastructure, that $5/mo droplet gets deleted tonight.

The real question: what's the token budget per scheduled run? If it's pulling from your Max plan allocation, a daily pytest + Slack notification probably costs ~2K tokens per run. That's ~60K tokens/month on one job. Three jobs = 180K. Fine on Max, brutal on Pro's 45-minute limit.

Watching the pricing closely before migrating.
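The token arithmetic in the post can be sketched as follows. The ~2K tokens per run comes from the post's own guess; the 30-day month, the "about 4 Mondays" figure, and all names are assumptions for illustration. The flat estimate reproduces the post's 180K by treating every job like the daily one. The per-schedule version shows what happens if each run of every job cost the same ~2K, which the post does not claim.

```python
TOKENS_PER_RUN = 2_000   # the post's rough guess for one pytest + Slack run
DAYS_PER_MONTH = 30      # assumption: a 30-day month

# Runs per month implied by each schedule in the post (job names are hypothetical).
RUNS_PER_MONTH = {
    "daily_pytest": DAYS_PER_MONTH,                # once a day
    "supabase_check": DAYS_PER_MONTH * 24 // 4,    # every 4 hours
    "weekly_audit": 4,                             # assumption: about 4 Mondays
}


def monthly_tokens(runs: int, tokens_per_run: int = TOKENS_PER_RUN) -> int:
    """Tokens used in a month by a job that runs `runs` times."""
    return runs * tokens_per_run


def flat_estimate(jobs: int = 3) -> int:
    """The post's shortcut: every job costs as much as the daily one."""
    return jobs * monthly_tokens(DAYS_PER_MONTH)


def per_schedule_estimate() -> dict[str, int]:
    """Alternative: scale each job by how often it actually runs."""
    return {name: monthly_tokens(runs) for name, runs in RUNS_PER_MONTH.items()}


if __name__ == "__main__":
    print("flat estimate:", flat_estimate())
    by_job = per_schedule_estimate()
    for name, tokens in by_job.items():
        print(f"{name}: {tokens}")
    print("per-schedule total:", sum(by_job.values()))
```

Under these assumptions the every-4-hours job dominates the total, so the real per-run cost of that check matters more than the daily pytest run.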

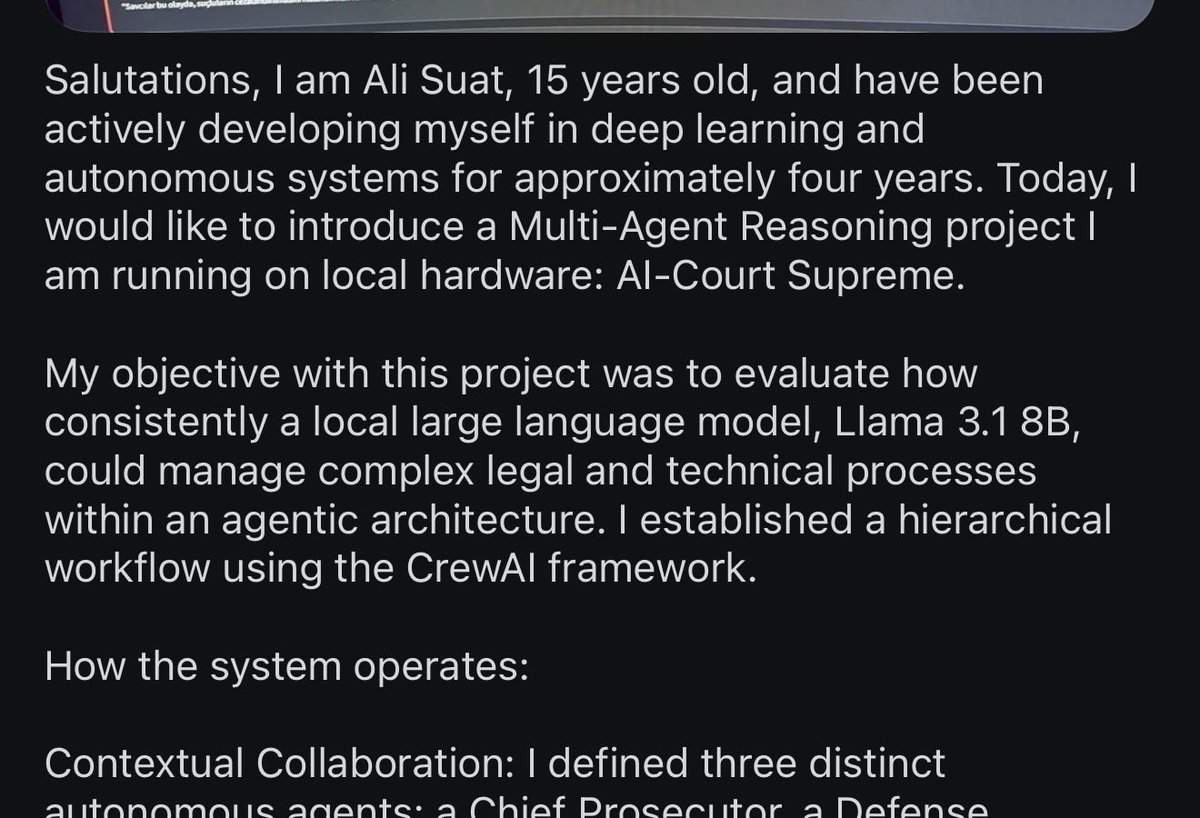

For OpenClaw, just use Qwen3.5 27B! Q4 GGUFs match the original model's accuracy. You don't need expensive hardware or models.

UIs are not going to be replaced with agents. Only lonely nerds want to sit and chat with their computer. Normal people just click buttons.

Most impressive part about this for me is you can run your OpenClaw, with decently reliable performance, on a 16GB card. Intelligence too cheap to meter.

seriously, if you don't want your friends to win you've gotta be dumb. it's a positive-sum game: lift your friends up and good karma will find its way back to you