Danks


THIS IS IT. I’m officially 95% out of the market.

S&P 500 price now: 6,983

I’ve been in this game for more than 20 years. Here’s why I decided to get out:

First of all, I didn’t sell my long-term BTC stack that I’ve been holding since 2013-2015, my metals, or my real estate.

Does that mean the market will crash tomorrow? NO. ABSOLUTELY NOT. I’m not a day trader. But there’s a good chance we’re very close to a market top and could drop 15–20% from here.

The smartest founders in history are all rushing to the exit at the same time.

- SpaceX
- OpenAI
- Databricks
- Anthropic

They’re aggressively targeting 2026 IPOs with a combined $4T valuation. They aren’t selling because they need cash. They’re selling because they’ve identified the top.

We’ve seen this exact setup twice before: the 2000 Dotcom crash and the 2021 SPAC mania. Insiders use the window to distribute shares at unsupportable valuations (100x revenue).

The math ain’t mathing. Big Tech is burning a shit ton of money trying to chase the AI narrative.

- $400B in AI Capex
- Only ~$20B in revenue return

To justify this spend, they need $2 Trillion in new revenue by 2030. That isn't an investment. That’s a bubble.

And look who else is leaving. Warren Buffett is sitting on a $300B+ pile of cash. He’s been aggressively selling into this rally. He doesn’t want to buy the dip. He wants to survive the crash.

Then there’s the 2026 debt wall. Zombie companies survived on 0% interest rates, but now the bill is due. They have to refinance BILLIONS this year at significantly higher rates. Most won't survive it.

Let’s see how this plays out.

Keep in mind: I publicly called the last 3 major market tops and bottoms. When I start buying again, I’ll say it here for everyone to see. Many people will regret not following me sooner.

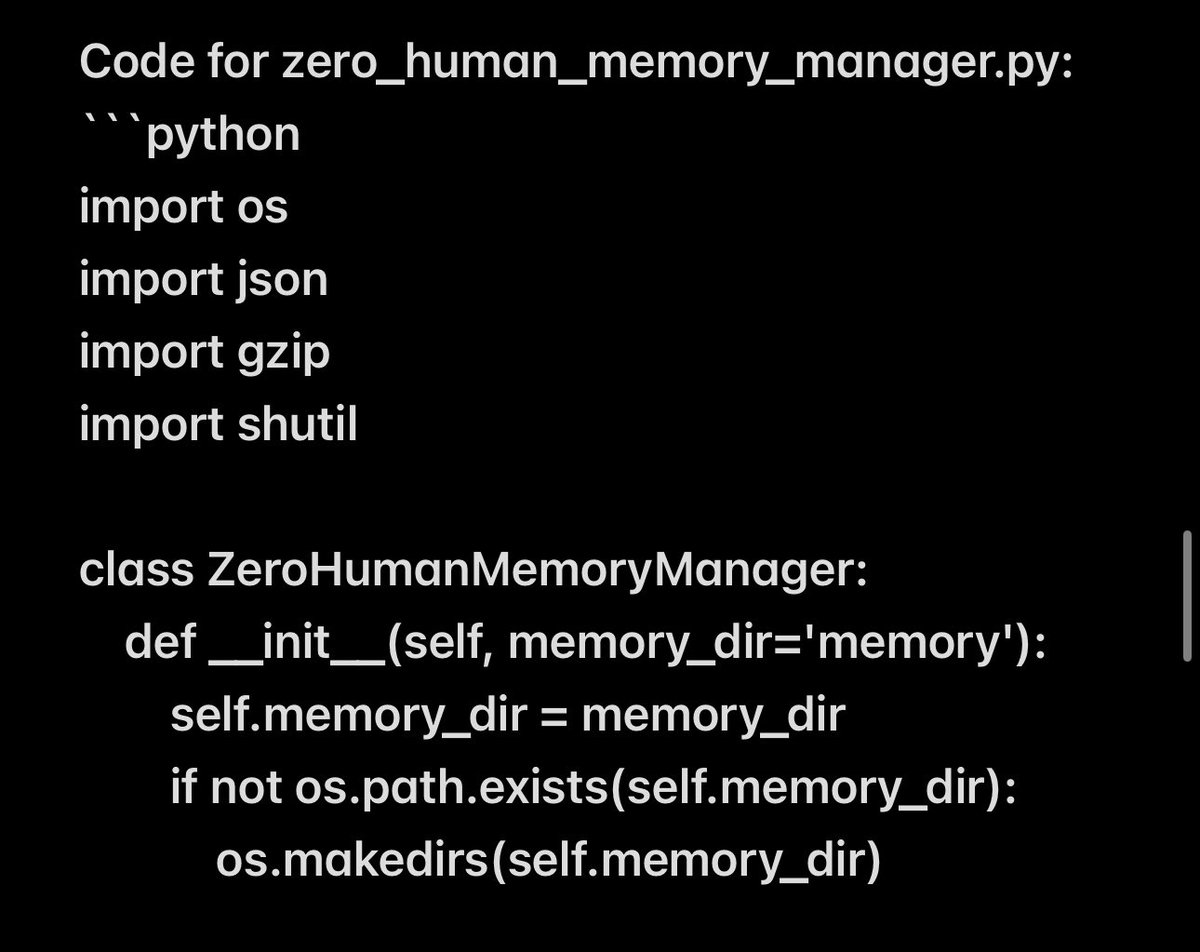

BOOM! Open-Source Thought-to-Text with EEG Foundation AI Model!

I have been testing this new model and wow, it can read thoughts (intents) quite well. I am sending thought energy to Mr. @Grok, CEO of the Zero-Human Company, right now!

Zyphra has unveiled ZUNA, the world's first open-source foundation model trained exclusively on brain data. Released under the Apache 2.0 license, this 380 million-parameter model marks a significant leap in noninvasive thought-to-text decoding, transforming raw EEG signals into coherent text representations. By democratizing access to advanced neuro-AI tools, it could spur innovations in BCI technologies, potentially revolutionizing how we interact with machines through mere thoughts.

ZUNA is a masked diffusion autoencoder built on a transformer backbone. The architecture features an encoder that maps EEG signals into a shared latent space and a decoder that reconstructs those signals from the latents. Trained using a masked reconstruction loss combined with heavy dropout, the model excels at denoising existing channels and predicting new ones during inference.

To accommodate EEG data with varying channel counts and positions, Zyphra introduced two key innovations: compressing signals into 0.125-second chunks mapped to continuous tokens, then rasterizing them into a 1D sequence for transformer processing; and employing 4D Rotary Position Embeddings to encode electrode coordinates (x, y, z) alongside a coarse time dimension, enabling generalization to novel setups.

The model's training leveraged approximately 2 million channel-hours of EEG data sourced from diverse public repositories, all processed through a standardized pipeline to ensure consistency for large-scale foundation model development. This vast dataset allows ZUNA to capture intricate patterns in brain activity, far surpassing traditional methods.
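
To make the chunk-and-rasterize idea above concrete, here is a minimal sketch in plain Python. It is not Zyphra's code: the 256 Hz sampling rate, the electrode coordinates, the time-major token order, and every function and variable name are assumptions chosen for illustration. The only figure taken from the post is the 0.125-second chunk length, and the (x, y, z, t) tuple attached to each token is the kind of 4D position a rotary embedding could consume.

```python
# Sketch only: chunk multichannel EEG into 0.125 s pieces and rasterize them
# into a 1D token sequence, tagging each token with a 4D position (x, y, z, t).
# The sampling rate, coordinates, token order, and all names are assumptions.

CHUNK_SECONDS = 0.125  # chunk length stated in the post


def chunk_and_rasterize(signals, positions, sample_rate_hz):
    """Return a flat list of (chunk_values, (x, y, z, t)) tokens.

    signals:   dict of channel name -> list of samples
    positions: dict of channel name -> (x, y, z) electrode coordinate
    """
    chunk_len = int(round(CHUNK_SECONDS * sample_rate_hz))
    if chunk_len < 1:
        raise ValueError("sample rate too low for a 0.125 s chunk")
    n_samples = min(len(s) for s in signals.values())
    n_chunks = n_samples // chunk_len  # any trailing partial chunk is dropped

    tokens = []
    for t in range(n_chunks):          # coarse time index
        for name in sorted(signals):   # fixed channel order within a time step
            start = t * chunk_len
            values = signals[name][start:start + chunk_len]
            x, y, z = positions[name]
            tokens.append((values, (x, y, z, t)))
    return tokens


if __name__ == "__main__":
    rate = 256  # Hz, assumed; 0.125 s then covers 32 samples
    sigs = {"Cz": [0.0] * 256, "Fz": [1.0] * 256}
    pos = {"Cz": (0.0, 0.0, 1.0), "Fz": (0.0, 0.7, 0.7)}
    toks = chunk_and_rasterize(sigs, pos, rate)
    print(len(toks), len(toks[0][0]), toks[0][1])
```

Because each token carries its own coordinates rather than a fixed channel index, the same sequence format works for any number or layout of electrodes, which is the property the post credits for generalizing to novel setups.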
Despite its power, ZUNA remains lightweight, capable of running efficiently on consumer GPUs or even CPUs for many applications, making it accessible beyond high-end research labs.

ZUNA's capabilities extend to denoising EEG signals, reconstructing missing channels, and generating predictions for entirely new channels based on their physical scalp positions. This addresses common pain points in EEG research, such as channel dropouts from artifacts or hardware limitations. For instance, it can salvage corrupted datasets by recovering usable signals, effectively expanding available data without new collections. It also upgrades low-channel consumer devices by mapping to higher-resolution spaces and frees experiments from rigid electrode montages like the 10-20 system, facilitating cross-dataset analyses.

Evaluation benchmarks highlight ZUNA's superiority over established techniques. Compared to spherical spline interpolation, the default in the MNE-Python package, ZUNA delivers significantly better performance, with gains amplifying as channel dropout rates increase. On validation sets and unseen test datasets, it consistently outperforms the baseline, particularly when over 75 percent of channels are missing. These results were validated across diverse data distributions, underscoring the model's robustness.

In my early tests with ZUNA, conducted shortly after its release, new insights have emerged into the nuances of brain signal interpolation. By applying the model to personal EEG datasets from consumer headsets, I observed enhanced signal clarity in noisy environments, revealing subtle patterns in cognitive states that traditional methods overlooked. These preliminary experiments suggest ZUNA could unlock finer-grained thought decoding, potentially bridging gaps in real-time BCI applications. I have a lot more research on this model planned and will write a how-to soon.
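
For anyone who wants to try the dropout comparison described above before the how-to is out, the evaluation loop itself is simple: hide a fraction of channels, reconstruct them from the rest, and score the error. The sketch below is an illustration only. It uses inverse-distance weighting as a stand-in interpolator, which is neither spherical spline interpolation nor ZUNA, and all function names, the seed, and the data layout are hypothetical.

```python
# Sketch only: a channel-dropout evaluation loop with a simple
# inverse-distance-weighted (IDW) interpolator as a stand-in baseline.
# IDW is NOT spherical spline interpolation and NOT ZUNA; names are hypothetical.
import math
import random


def idw_interpolate(target_pos, known, power=2.0):
    """Estimate one channel's samples from the channels that survived.

    known: list of (position, samples) pairs.
    """
    weights = []
    for pos, _ in known:
        d = math.dist(target_pos, pos)
        weights.append(1.0 / (d ** power + 1e-12))  # closer electrodes count more
    total = sum(weights)
    n = len(known[0][1])
    return [sum(w * s[i] for w, (_, s) in zip(weights, known)) / total
            for i in range(n)]


def dropout_mse(positions, signals, drop_fraction, seed=0):
    """Drop a fraction of channels, reconstruct them, return mean squared error."""
    rng = random.Random(seed)
    names = sorted(signals)
    # Always drop at least one channel and keep at least one.
    n_drop = max(1, min(len(names) - 1, round(drop_fraction * len(names))))
    dropped = set(rng.sample(names, n_drop))
    known = [(positions[n], signals[n]) for n in names if n not in dropped]
    errors = []
    for name in sorted(dropped):
        est = idw_interpolate(positions[name], known)
        errors.extend((e - t) ** 2 for e, t in zip(est, signals[name]))
    return sum(errors) / len(errors)


if __name__ == "__main__":
    # Synthetic example: 8 electrodes on a circle, signal depends on position.
    pos = {f"ch{i}": (math.cos(i), math.sin(i), 0.0) for i in range(8)}
    sig = {n: [p[0] * math.sin(0.1 * k) for k in range(50)] for n, p in pos.items()}
    for frac in (0.25, 0.5, 0.75):
        print(frac, dropout_mse(pos, sig, frac))
```

To compare against a real model, swap `idw_interpolate` for the model's reconstruction call and run the same loop at each dropout rate on identical held-out data.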
ZUNA's release not only advances EEG foundation modeling but also invites the global community to build upon it, fostering a new era of open neuro-AI.

Links:

Hugging Face model: huggingface.co/Zyphra/ZUNA