Dapton AI reposted

Dapton AI

210 posts

Dapton AI

@daptonai

We turn "this is taking forever" into "wait, it's already done?" AI agents • automations • custom software.

Earth · Joined February 2026

38 Following · 34 Followers

Dapton AI reposted

Marketing Skills v2.0 is finally here! 🎉

I've been working on this for over a month.

The biggest release yet. Shorter names, one unified CRO skill, and a foundation built to scale.

What's new:

✨ Cleaner, faster skill names

→ /paid-ads → /ads

→ /email-sequence → /emails

→ /social-content → /social

→ /launch-strategy → /launch

→ /pricing-strategy → /pricing

→ /referral-program → /referrals

→ + 11 more

🔗 /cro is now one skill

Page CRO + Form CRO merged. One mental model for every conversion optimization workflow.

📦 100+ refinements across the entire skill set

40 skills. 52 tool integrations. 100% evals coverage.

Free and open source.

🚨 Heads up — v2.0 is a breaking change, so reinstall to get the new names:

npx skills add coreyhaines31/marketingskills


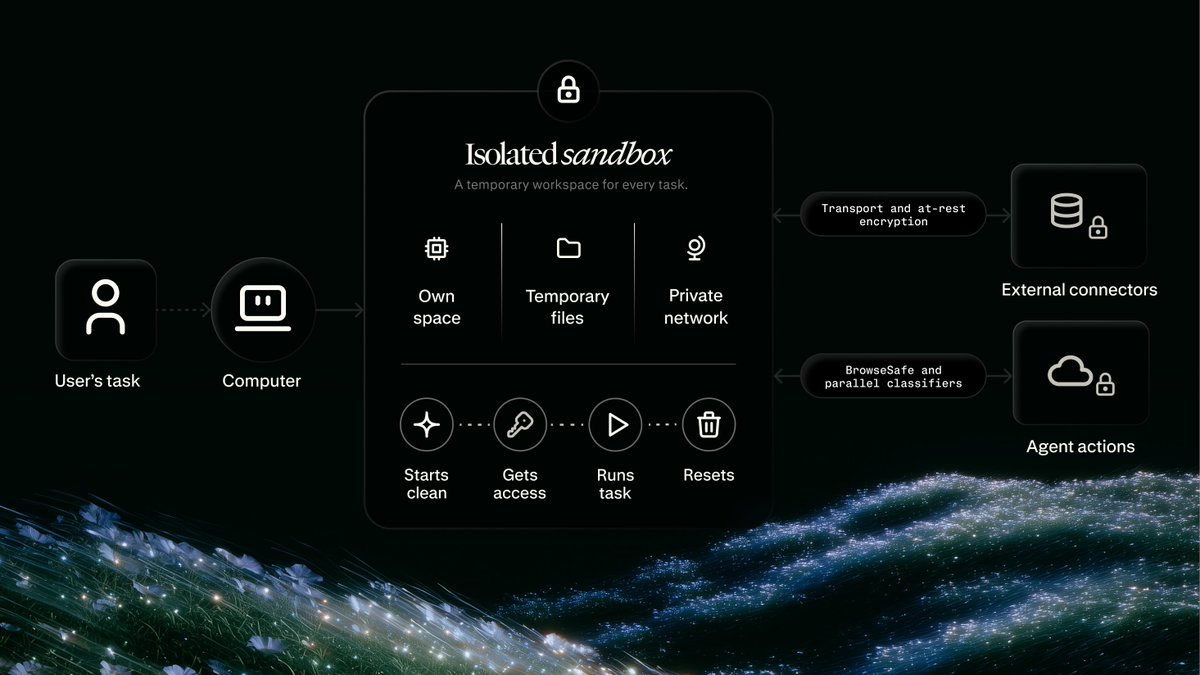

Default settings let the agent pick its own permissions. Configured flags lock them down before it runs.

The difference is whether the agent writes to a directory you did not want touched, burns through a model that costs 5x your budget, or calls an MCP server you forgot was connected. Default is convenient until it gets expensive.

Locking down boundaries before the first run or fixing them after?


Claude Code 2.1.142 has been released.

24 CLI changes

Highlights:

• New 'claude agents' flags configure dirs, permissions, model, effort and plugin/MCP settings for finer control

• grep now uses ripgrep as the default search tool for faster, more accurate results

• Background daemon detects clock jumps after macOS sleep/wake, preventing session loss and reconnect failures

Complete details in thread ↓


Pre-warming the cache means burning tokens upfront to save latency later. That only works if later actually happens.

Change your system prompt every few requests? Only making one call per session? You just paid to warm a cache that never gets used again. The 52% speedup only shows up around the 10th, 20th, 50th call with the exact same prompt.

How many requests are you making before the prompt changes?
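One way to answer that is a rough break-even estimate. The sketch below is illustrative only. It assumes the 52% figure means each cached call is 52% faster than an uncached one, and `warm_cost` and `baseline_latency` are hypothetical inputs, not measurements.

```python
import math


def break_even_calls(warm_cost: float, baseline_latency: float, speedup: float) -> int:
    """Return how many identical-prompt calls are needed to repay the warm-up.

    warm_cost:        one-off cost of pre-warming, in seconds (hypothetical)
    baseline_latency: latency of one uncached call, in seconds (hypothetical)
    speedup:          fraction of latency saved per cached call (0.52 = 52%)
    """
    saving_per_call = baseline_latency * speedup
    if saving_per_call <= 0:
        raise ValueError("speedup and baseline_latency must be positive")
    return math.ceil(warm_cost / saving_per_call)


if __name__ == "__main__":
    # Made-up numbers: a 5 s warm-up against 1 s uncached calls.
    print(break_even_calls(warm_cost=5.0, baseline_latency=1.0, speedup=0.52))
```

With these made-up inputs the warm-up pays for itself at the 10th call. A session that changes its prompt before then never recovers the cost.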


Tokens usually get added first because they unlock automation. Hooks come later because they feel like overhead. That order is backwards.

Hooks stop automation from doing something stupid. Tokens just let it run faster. Enable CI before secret scanning is in place and the pipeline can leak AWS keys at scale before anyone notices.

Hooks before automation or after the first leak?
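A pre-run secret check can be very small. The sketch below is not the Codex hooks API. It is a standalone function, with the hypothetical names `find_aws_key_ids` and `check_or_fail`, that a hook script could call. It assumes the commonly documented access key ID shape of `AKIA` followed by 16 uppercase letters or digits, and it will miss other credential types.

```python
import re
from typing import List

# Assumed pattern: long-term AWS access key IDs start with "AKIA"
# followed by 16 uppercase letters or digits.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def find_aws_key_ids(text: str) -> List[str]:
    """Return every substring of text that looks like an AWS access key ID."""
    return AWS_KEY_ID.findall(text)


def check_or_fail(text: str) -> None:
    """Raise if text appears to contain a key ID, so a calling hook can block the run."""
    hits = find_aws_key_ids(text)
    if hits:
        # Report only the count, never the matched values themselves.
        raise RuntimeError(f"possible AWS key IDs found: {len(hits)}")
```

Running a check like this before automation is enabled is the ordering the post argues for: the scan exists before anything can leak at scale.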


Codex is getting easier to automate and customize around your code.

🪝 Hooks customize the Codex loop with scripts that run at key points in a task:

• Run validators before or after work

• Scan prompts for secrets

• Log conversations to internal systems

• Create memories or customize behavior by repo or directory

⚙️ Programmatic access tokens provide scoped credentials for Business and Enterprise teams:

• Create tokens from ChatGPT workspace settings

• Use them in CI, release workflows, and internal automations

• Set expirations or revoke access when needed

• Keep usage tied back to the workspace


Prompt:

Vintage travel sketchbook watercolor illustration of Campo de' Fiori flower market in Rome, Italy, cinematic golden morning light, hand-drawn ink linework mixed with soft watercolor washes, colorful flower stalls with roses, peonies and sunflowers, vendors arranging blooms, couples strolling on cobblestone streets, Piazza Navona dome in background, terracotta Roman buildings, wrought iron street lamps, outdoor caffè seating, handwritten Italian notes, small map element, vintage postage stamp, vertical portrait composition, nostalgic travel diary aesthetic, illustrated on aged cream sketchbook paper.


A leaked API key can drain your entire budget before you even know it leaked.

Error logs get indexed, debug files get pushed to public repos, browser storage gets scraped. Long-lived keys stay usable for weeks after exposure. Short-lived tokens turn that weeks-long window into minutes. The leak still happens but the damage window closes automatically.

How long would a leaked credential stay valid in your current setup?
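To put numbers on that question, here is a minimal sketch of the expiry arithmetic. The `Credential` class and the lifetimes used below (15 minutes versus 30 days) are hypothetical and not tied to any particular provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    issued_at: float    # Unix timestamp, in seconds
    ttl_seconds: float  # lifetime of the credential, in seconds

    def is_valid(self, now: float) -> bool:
        """True while the credential has been issued and has not yet expired."""
        return self.issued_at <= now < self.issued_at + self.ttl_seconds

    def exposure_window(self, leaked_at: float) -> float:
        """Seconds the credential stays usable after it leaks."""
        return max(0.0, self.issued_at + self.ttl_seconds - leaked_at)


if __name__ == "__main__":
    short_lived = Credential(issued_at=0, ttl_seconds=15 * 60)
    long_lived = Credential(issued_at=0, ttl_seconds=30 * 24 * 3600)
    leak_time = 5 * 60  # both leak five minutes after being issued
    print(short_lived.exposure_window(leak_time))  # 600.0 seconds
    print(long_lived.exposure_window(leak_time))   # 2591700.0 seconds
```

The leak happens at the same moment in both cases. Only the lifetime decides whether the attacker gets ten minutes or about a month.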


Constant approval prompts train developers to click yes without reading. Full autonomy means one bad agent run at 2am deletes production data before anyone wakes up.

The sandbox approach is not a compromise. It is the only way to get both safety and usefulness. Scoped permissions mean the agent can work but can't break anything outside its boundaries.

Which failure mode have you hit: approval fatigue or autonomy disaster?
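The core of a scoped permission is a containment check. The sketch below is a simplified illustration, not how the Codex sandbox is implemented: `is_within_scope` is a hypothetical helper that only decides whether a requested path falls under an allowed root.

```python
from pathlib import Path


def is_within_scope(root: str, target: str) -> bool:
    """Return True if target, resolved against root, stays inside root.

    Resolving first collapses ".." segments and follows existing symlinks,
    so "project/../secrets" cannot sneak outside the allowed directory.
    """
    root_path = Path(root).resolve()
    target_path = (root_path / target).resolve()
    try:
        target_path.relative_to(root_path)
        return True
    except ValueError:
        # relative_to raises when target_path is not under root_path.
        return False


if __name__ == "__main__":
    print(is_within_scope("/work/project", "src/app.py"))
    print(is_within_scope("/work/project", "../secrets/key.pem"))
```

A check like this lets the agent act without a prompt inside its boundary and refuses anything outside it, which is the middle ground between approval fatigue and full machine access.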


To bring Codex to Windows, we had to answer a hard question: how do you let coding agents stay useful without forcing developers to choose between constant approval prompts and full machine access?

Here’s how we built the Windows sandbox for Codex:

openai.com/index/building…


Most teams with custom agents face a UI problem, not a capability problem.

Your agents work but they live in terminals or separate dashboards. Nobody outside engineering ever touches them. External Agents API solves that. Bring your custom agent into Notion and suddenly the whole team can use it without learning a new tool.

Are your custom agents stuck in developer-only tools right now?


BIG one for devs today. Introducing the Notion Developer Platform:

- Notion CLI, ntn (Notion in your terminal)

- Workers (run code on Notion's infra)

- Database sync (any data source into Notion)

- Agent tools (build any workflow)

- Webhook triggers (trigger Notion from any app)

- External Agents API (bring any agent into Notion)

- Notion Agents SDK (use Notion Agents anywhere)

- …and a bunch more API improvements

And soon, you won't need to be a developer to build on Notion. Your agent will be one for you.

Notion Developers@NotionDevs

Introducing: the Notion Developer Platform. New building blocks that help you (and your coding agents) sync any data source, build any tool, and orchestrate any agent. Follow along 👇 twitter.com/i/broadcasts/1…


Rewind compression in 2.1.141 solves the context limit problem without the thing that actually costs time: manual restarts.

When a long session hits token limits, restarting loses more than just the last 30 minutes of code. You lose the conversation history, the failed approaches, the context about why certain things didn't work. Compression keeps a summary of all of that and preserves the recent turns.

How often are you manually restarting sessions because of context limits?


Claude Code 2.1.141 has been released.

61 CLI changes

Highlights:

• Hook JSON adds terminalSequence so hooks can emit notifications, window titles and bells without a TTY

• Auto-mode permission dialogs indicate when a permissions.ask rule triggered them, clarifying why it appeared

• Rewind menu adds 'Summarize up to here' to compress earlier context, preserving recent turns

Full details available in thread ↓


Local agent debugging is painful because you are chasing two problems at once. Is the agent logic wrong or is the environment different?

Cloud environments with standardized config remove the second question entirely. When it fails you know it is the agent, not a missing dependency or a Python version mismatch between your laptop and CI.

Have you spent an hour debugging an agent only to find it was an environment issue?


50% higher limits through July 13. Then they drop back on July 14.

Workflows that scale to the temporary ceiling break when it reverts. The automation you launch on July 10 works perfectly for 3 days then fails on Monday because the limit you built around is gone.

Are you planning for the temporary limit or the permanent one?


Separate programmatic credit means your 2am automation job stops locking out your engineers at 9am.

That is the actual problem this solves. When automation and humans share one limit pool, whoever runs first wins. One batch job eats the daily budget before your team even opens their laptops.

What percentage of your usage is programmatic versus interactive?
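The shared-pool failure is easy to reproduce in a few lines. This is a toy model with hypothetical limits and a hypothetical `Budget` class, not any vendor's rate-limit implementation.

```python
class Budget:
    """A simple usage pool with a hard limit."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def try_spend(self, amount: int) -> bool:
        """Consume amount if it fits under the limit; otherwise refuse."""
        if self.used + amount > self.limit:
            return False
        self.used += amount
        return True


if __name__ == "__main__":
    # Shared pool: the 2am batch job runs first and takes everything.
    shared = Budget(limit=100)
    shared.try_spend(100)        # batch job
    print(shared.try_spend(10))  # engineer at 9am is refused

    # Separate pools: the batch job can only exhaust its own budget.
    automation = Budget(limit=60)
    interactive = Budget(limit=40)
    automation.try_spend(60)          # batch job
    print(interactive.try_spend(10))  # engineer at 9am is served
```

The total budget is the same in both setups. Splitting it only changes who can starve whom.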


74,000 weekly tasks is impressive scale. The number that actually matters is how many of those outputs changed a decision versus how many were generated and ignored.

Volume without utilization is just cost. The teams getting real ROI at this scale mapped which outputs drive decisions before they scaled to 74,000.

What percentage of your AI outputs actually change a decision?


PayPal runs 74,000 weekly tasks in Perplexity Enterprise.

Teams use it for model validation, channel performance, market trend research, competitive intelligence, and product analysis.

Read the customer story: perplexity.ai/enterprise/cus…


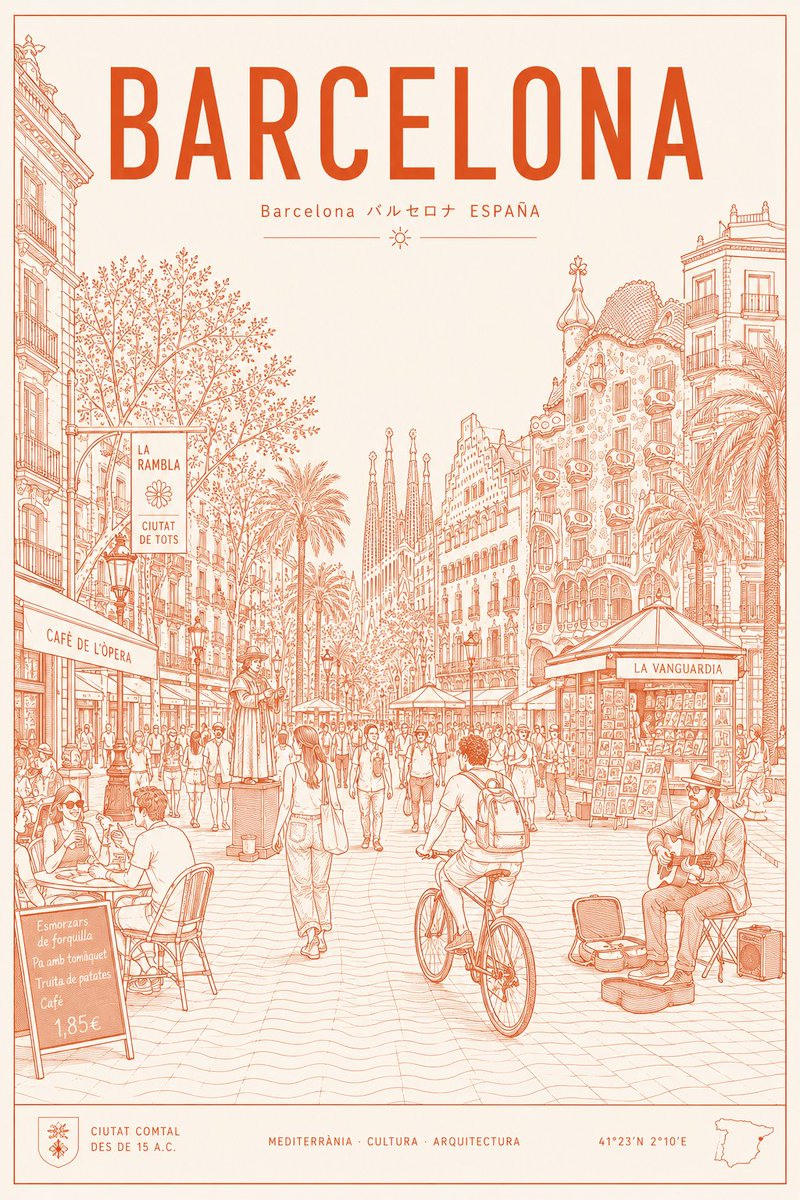

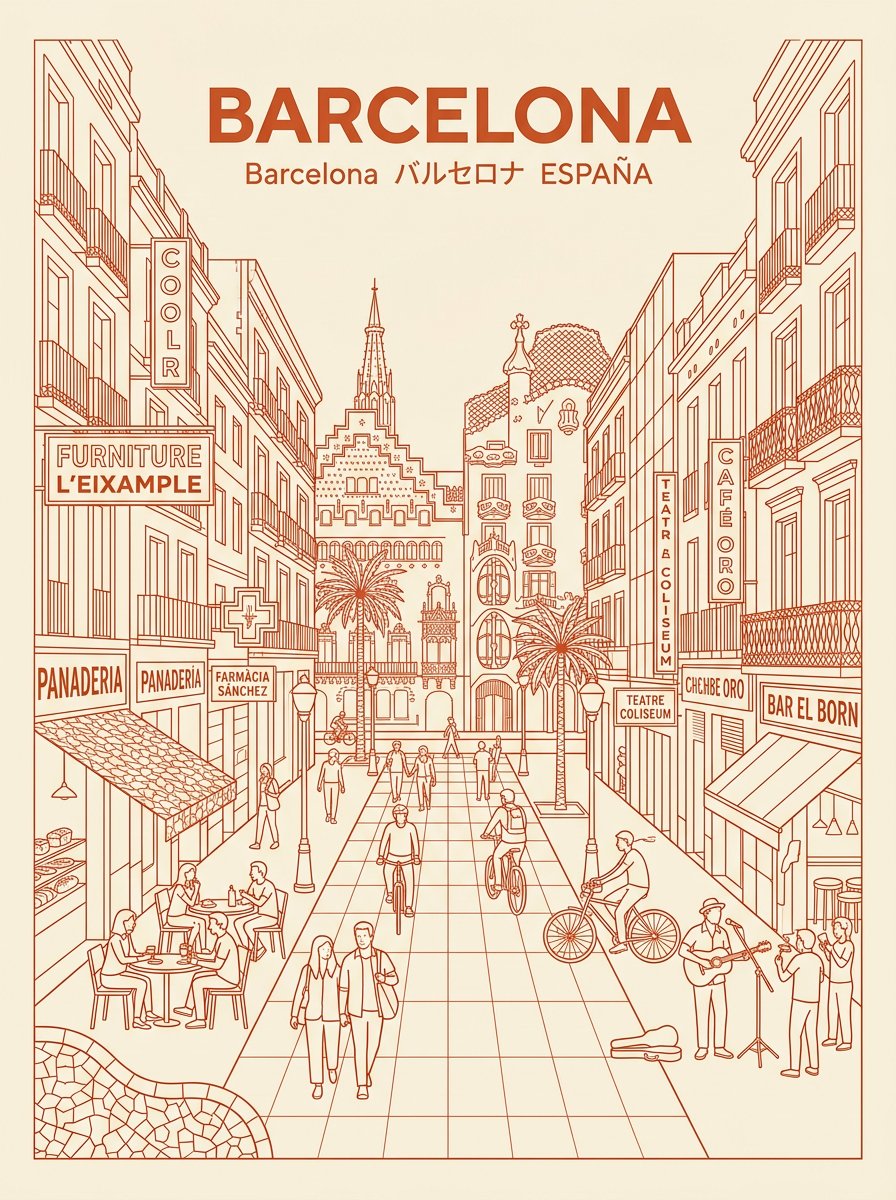

Two tools. One prompt. One winner.

Image 1: ChatGPT Images 2.0

Image 2: Nano Banana 2

I asked both to create the same Barcelona poster.

ChatGPT played it safe. Clean lines, solid structure.

Nano Banana went further. Street musicians, a café menu in Catalan, coordinates in the footer, Sagrada Família naturally in the background.

Same prompt. One tool designed. The other felt.

Your AI tool choice is a creative decision, not a technical one.


@Ay_Ay_Lon @OpenAIDevs The phone call suggestion is underrated.

We built the automation. Half the time the real blocker was that two people had not spoken in 4 days.


@daptonai @OpenAIDevs I can’t think of a single way to resolve this.

Unfortunately today humans have no means of talking about things with each other in an actual conversation

I hope AI can figure out how to suggest that human beings have a phone call to talk to each other, or we’re totally screwed


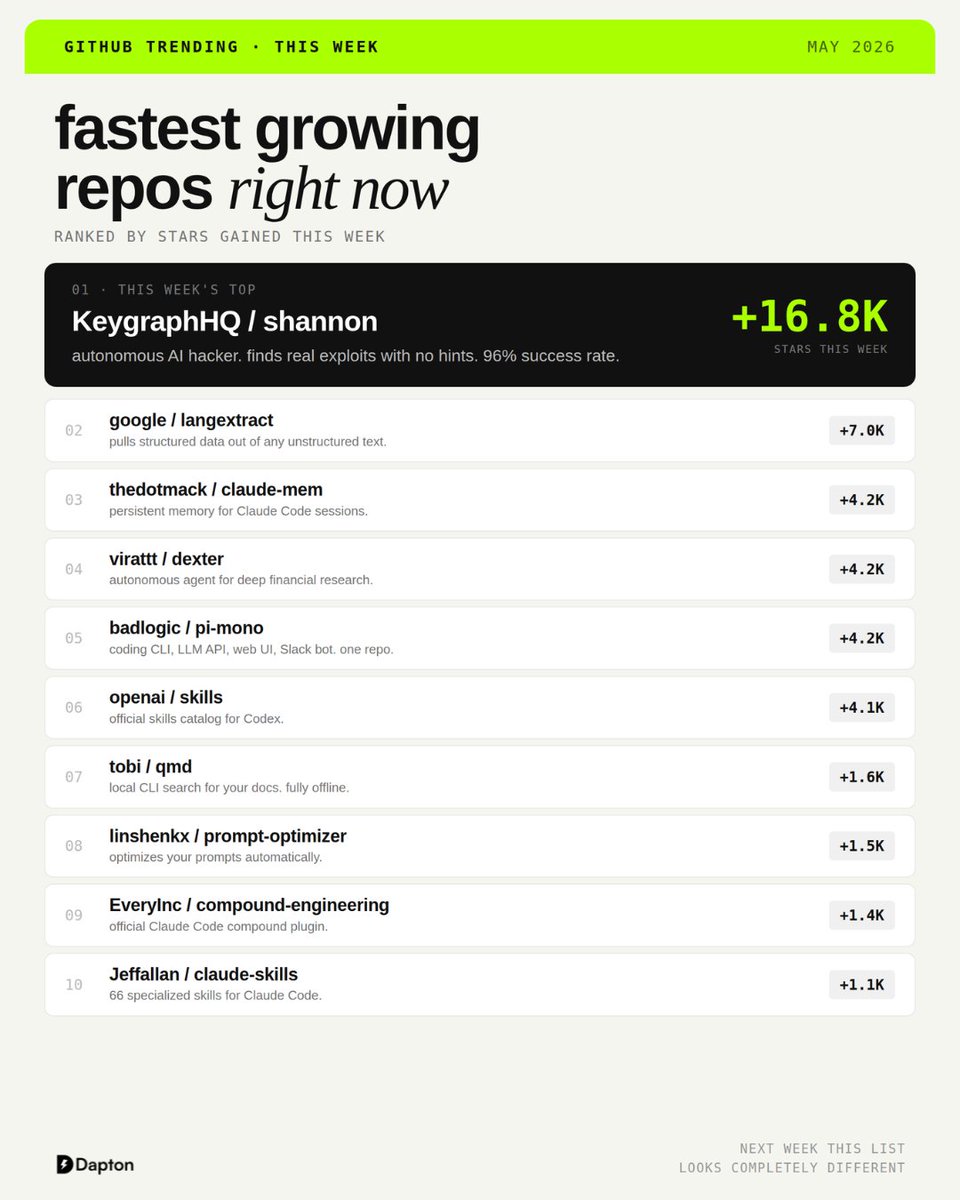

Your security audit just became a GitHub repo.

no consultant. no briefing. no five-figure invoice.

just a URL and an AI that comes back with real vulnerabilities.

96% success rate. open-sourced this week. 16,800 stars in 7 days.

that is one repo. the rest of this week's GitHub trending list hits just as hard.

full list in the image 👇
