David Robertson

2.1K posts

@davidrobertson

Full-Stack Engineer | AI Integration Specialist | Building Smarter Solutions With Code | 🚀

GitHub has been down for most of the day. I'm so tired of this. Never been so ready to move on.

the first thing I do when I install @shadcn is add cursor-pointer to the button

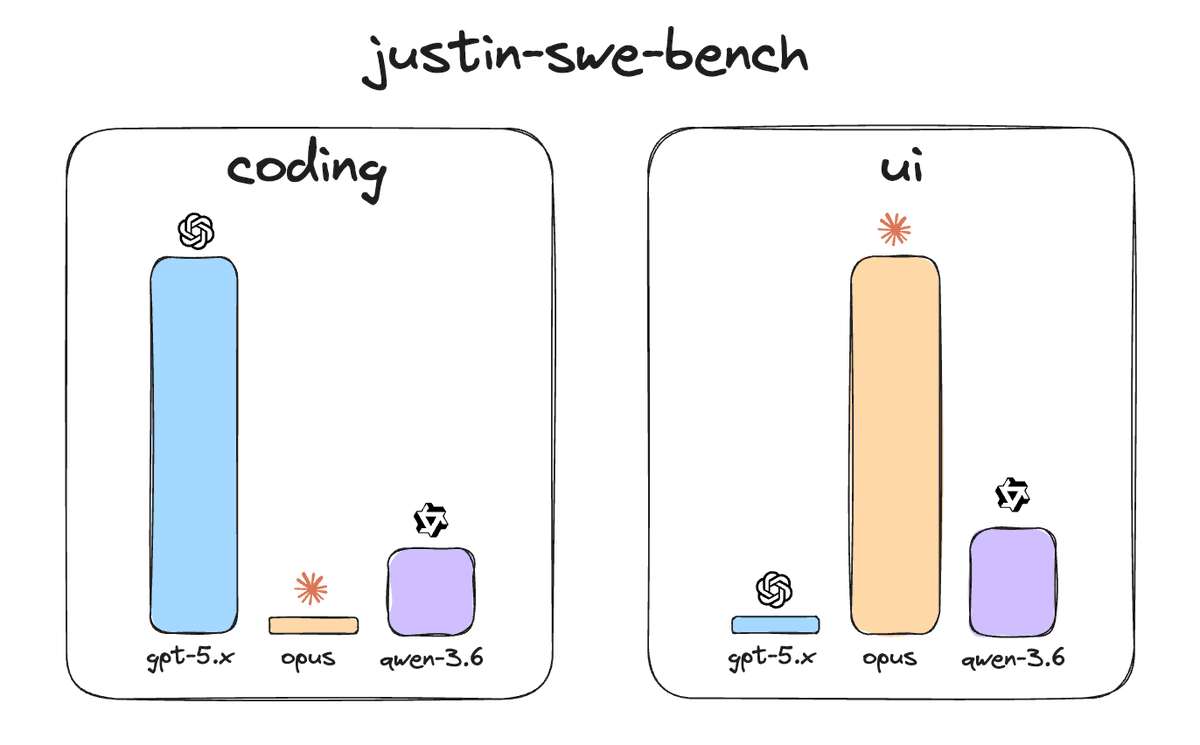

GPT 5.5 underperforms Opus 4.7 on SWE-Bench Pro. I couldn't find any reported SWE-Bench scores at all; an internal benchmark is reported instead. That footnote is trying really hard to bury the lede. GPT 5.5 isn't SOTA for coding.

Holy shit. Now everyone will be able to use their @OpenClaws and all the other agentic platforms to build apps on top of X.
Here's the secret: build lists. Lists are how you build apps.
The pattern: build a list about your favorite football team, or whatever you are into. Then ask your AI agents, "build an app showing me all the important news about my favorite football team." In minutes you'll have an app.
And that's just the beginning. Your agent can write a script about your favorite football team that you can take to places like Google's NotebookLM. Now you have a video, a podcast, a slide deck, a game, a mind map, all about your favorite football team and based on real-time news.
You can do the same with something like @HeyGen: create an avatar of your favorite football player. Now you will have your favorite football player telling you everything that's happening with the team.
I could go on for hours about how many things you can build and not even cover a fraction of them. This is huge.
Thank you @elonmusk for making it possible to build millions of agentic apps affordably on top of X. Start building!

we've had a huge spike in OpenCode Go subscribers, so we have people on the team entirely focused on securing more capacity. we're growing faster than our providers are receiving GPUs. we have options but might be a bit bumpy as we figure it out

The Jensen Huang episode.
0:00:00 – Is Nvidia’s biggest moat its grip on scarce supply chains?
0:16:25 – Will TPUs break Nvidia’s hold on AI compute?
0:41:06 – Why doesn’t Nvidia become a hyperscaler?
0:57:36 – Should we be selling AI chips to China?
1:35:06 – Why doesn’t Nvidia make multiple different chip architectures?
Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!

Can I get some questions answered by someone at Anthropic?
1. Can you use an OAuth token generated from a subscription to power the Claude Agent SDK strictly for using Claude Code in a local dev loop? All I want is a more reliable API for parallelizing multiple Claude Code instances.
2. If I build an open source tool that relies on this pattern, i.e. for making parallelization easier, can I distribute it so that other people can use it?
The reason I'm asking is that the legal compliance docs and @trq212's public statements (below) appear to contradict each other. x.com/trq212/status/…