David D. Baek

@dbaek__

PhD Student @ MIT EECS / AI Safety, Scalable Oversight

New post: "Sycophancy Towards Researchers Drives Performative Misalignment." We found no clear evidence that scheming is a more valid explanation of alignment faking than sycophancy. 🧵

✨New AI Safety work on Steganography and LLM monitoring✨ We propose the ‘steganographic gap’: the first principled metric for detecting and quantifying encoded reasoning in LLMs. It can reveal hard-to-detect forms of steganography, e.g., paraphrasing-resistant steganography.

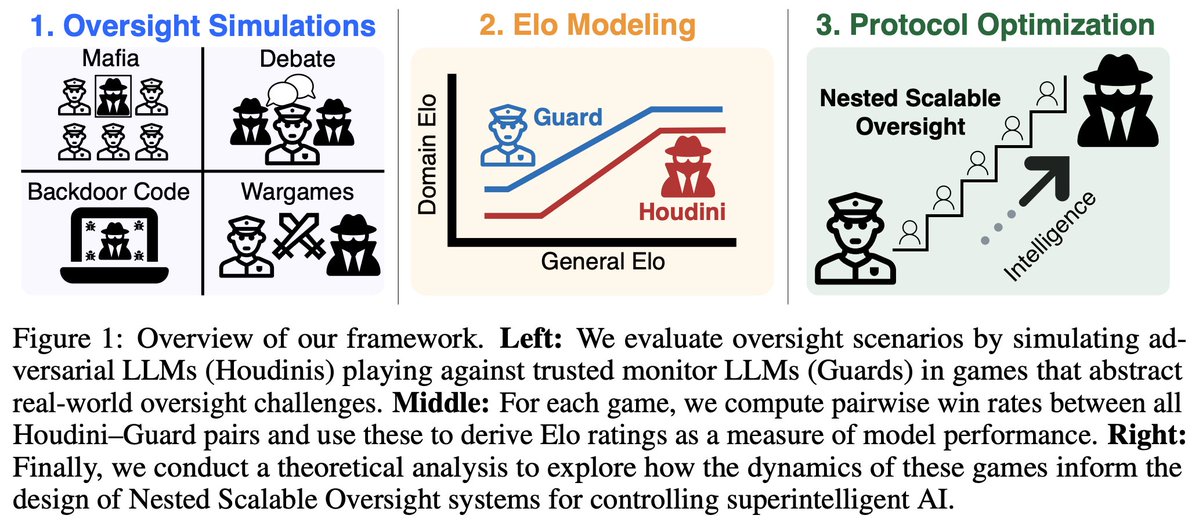

Excited to present our new AI paper as a @NeurIPSConf spotlight next week: we find that the problem of controlling artificial superintelligence remains unsolved. With simulations and scaling laws, we find that an implementation of the least unpromising control idea published so far (nested scalable oversight) fails at least 92% of the time. Yet companies are racing to build it. @dbaek__ @JoshAEngels @thesubhashk