Daniel Beaglehole

@dbeagleholeCS

ML PhD @UCSanDiego. Deep learning from first principles (+ applications). Topics include xRFM, AGOP, Colonel Blotto.

We found a way to steer AI music gen toward specific notes, chords, and tempos, without retraining the model or significantly sacrificing audio quality! Introducing MusicRFM 🎵 Paper: arxiv.org/abs/2510.19127 Audio: astradzhao.github.io/MusicRFMPage/ Code: github.com/astradzhao/mus… (1/5)

we wrote a paper about learning 'sparse' and 'hierarchical' functions with data-dependent kernel methods. you just 'iteratively reweight' the coordinates by the gradients of the prediction function. typically 5 iterations suffice.

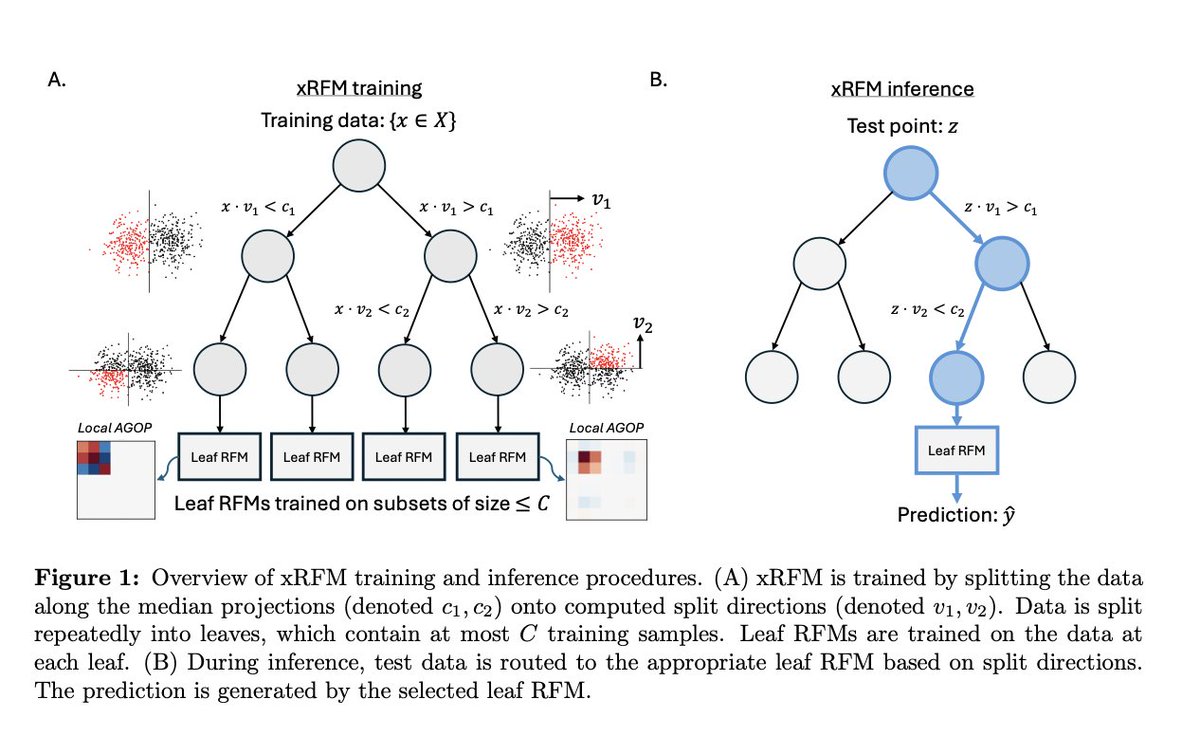

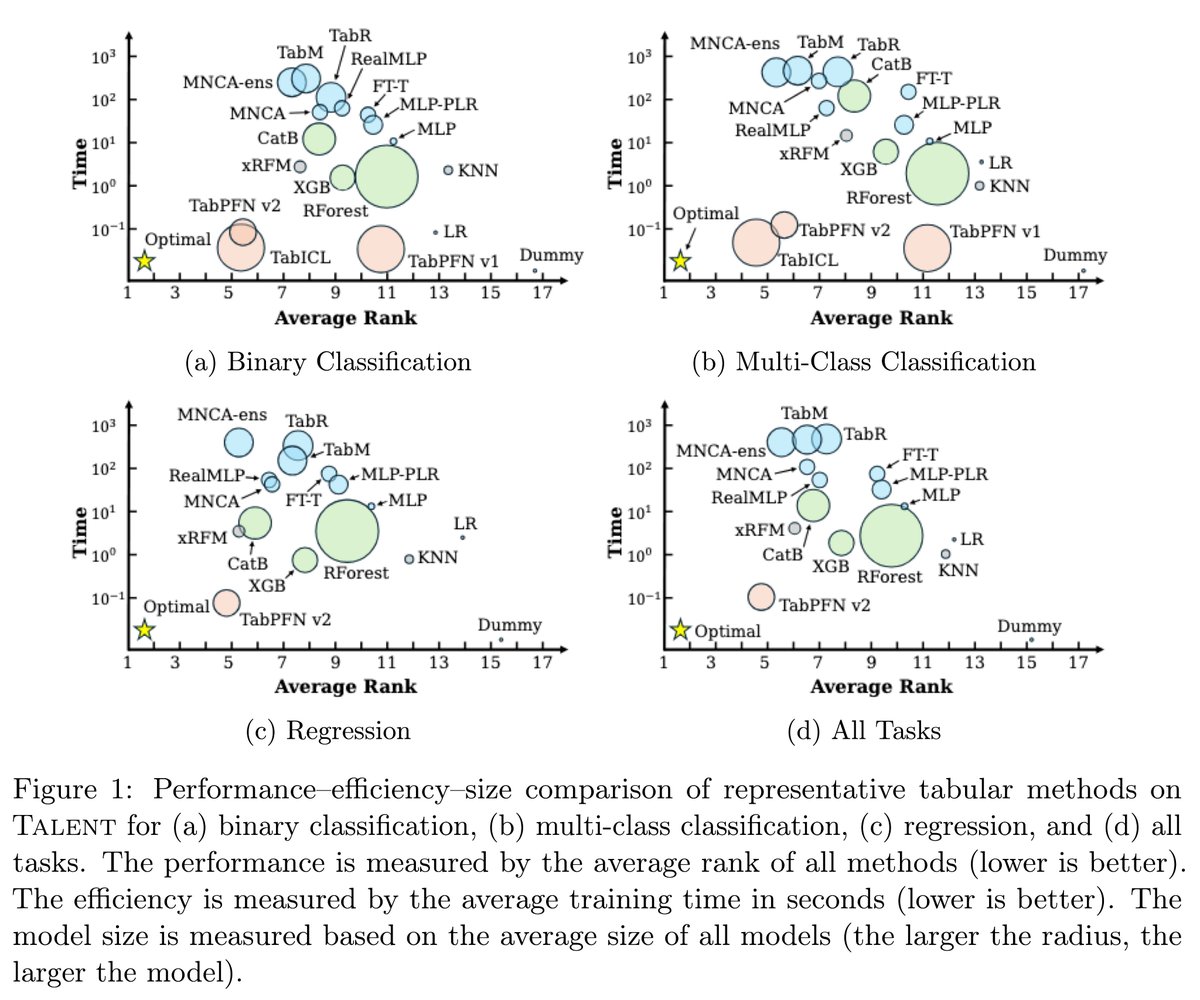
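A rough sketch of what "iteratively reweight the coordinates by the gradients of the prediction function" could look like, under several assumptions that are not from the post or the paper: a Gaussian kernel with per-coordinate weights `w`, kernel ridge regression as the predictor, and weights set to the mean squared partial derivative of the predictor over the training points. The function names, `bandwidth`, `ridge`, and the toy target are all hypothetical choices for illustration.

```python
import numpy as np

def weighted_gaussian_kernel(X, Z, w, bandwidth):
    # k(x, z) = exp(-sum_j w_j * (x_j - z_j)^2 / (2 * bandwidth^2))
    Xw, Zw = X * np.sqrt(w), Z * np.sqrt(w)
    sq = (Xw**2).sum(1)[:, None] + (Zw**2).sum(1)[None, :] - 2.0 * Xw @ Zw.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * bandwidth**2))

def fit_krr(X, y, w, bandwidth, ridge):
    # Kernel ridge regression coefficients for the current weights.
    K = weighted_gaussian_kernel(X, X, w, bandwidth)
    alpha = np.linalg.solve(K + ridge * np.eye(len(X)), y)
    return K, alpha

def reweighted_krr(X, y, n_iters=5, bandwidth=3.0, ridge=1e-3):
    w = np.ones(X.shape[1])  # start with all coordinates weighted equally
    for _ in range(n_iters):
        K, alpha = fit_krr(X, y, w, bandwidth, ridge)
        # Gradient of f(x) = sum_i alpha_i k(x, x_i) at every training point:
        # df/dx_j (x_p) = -(w_j / bandwidth^2) * sum_i alpha_i K[p, i] * (X[p, j] - X[i, j])
        KA = K * alpha[None, :]
        diff = X * KA.sum(1, keepdims=True) - KA @ X
        grads = -(w / bandwidth**2) * diff
        # Assumed update rule: mean squared partial derivative, scaled so max is 1.
        new_w = (grads**2).mean(0)
        w = new_w / max(new_w.max(), 1e-12)
    _, alpha = fit_krr(X, y, w, bandwidth, ridge)  # final fit with learned weights
    return w, alpha

def predict(X_train, alpha, w, X_new, bandwidth=3.0):
    return weighted_gaussian_kernel(X_new, X_train, w, bandwidth) @ alpha

# Toy data: the target depends on only 2 of 10 coordinates.
rng = np.random.default_rng(0)
X = rng.standard_normal((400, 10))
y = X[:, 0] + X[:, 0] * X[:, 1]
w, alpha = reweighted_krr(X, y)
print(np.round(w, 3))
```

In this toy setup the learned weights should concentrate on the first two coordinates, which is the sense in which reweighting picks out a sparse set of relevant features. The actual update rule and kernel in the paper may differ from this sketch.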

I highly recommend trying out our new model xRFM, which scales RFM to millions of samples. xRFM is SOTA on regression and among the top few methods for classification on 300 datasets, compared to 31 other methods including neural nets / trees like XGBoost, TabPFN-v2, RealMLP, etc.

xRFM: Accurate, scalable, and interpretable feature learning models for tabular data ift.tt/P3pDd9f