Daan de Geus

@dcdegeus

Assistant professor @TUEindhoven | Prev. visiting @RWTHVisionLab | Computer vision

I tried Codex 5.3 (web) for porting VidEoMT, a simple and elegant ViT-based video segmentation model, to @huggingface Transformers. Sadly, it missed the global picture, mistakenly assuming the model uses DINOv3 as its backbone, whereas it actually uses DINOv2. It got stuck. Opus 4.6 fixed it after I told it. The job of ML Engineer is still safe - humans stay in the driver's seat. PR: github.com/huggingface/tr…

Introducing DINOv3: a state-of-the-art computer vision model trained with self-supervised learning (SSL) that produces powerful, high-resolution image features. For the first time, a single frozen vision backbone outperforms specialized solutions on multiple long-standing dense prediction tasks. Learn more about DINOv3 here: ai.meta.com/blog/dinov3-se…

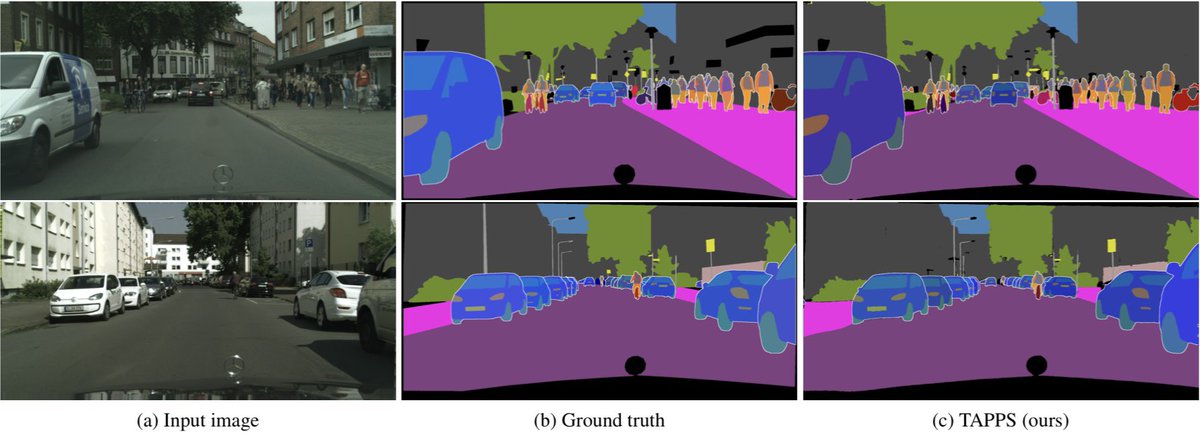

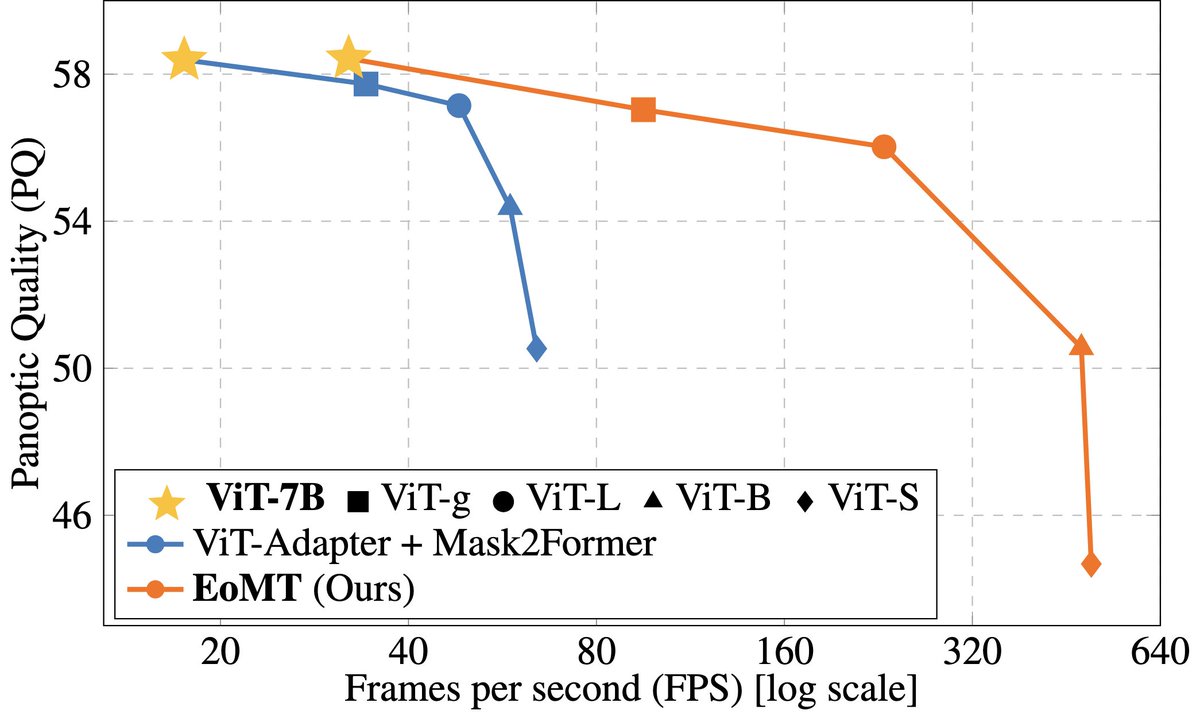

New model alert in Transformers: EoMT! EoMT greatly simplifies the design of ViTs for image segmentation 🙌 Unlike Mask2Former and OneFormer, which add complex modules like an adapter, pixel decoder and Transformer decoder on top, EoMT is just a ViT with a set of query tokens ✅

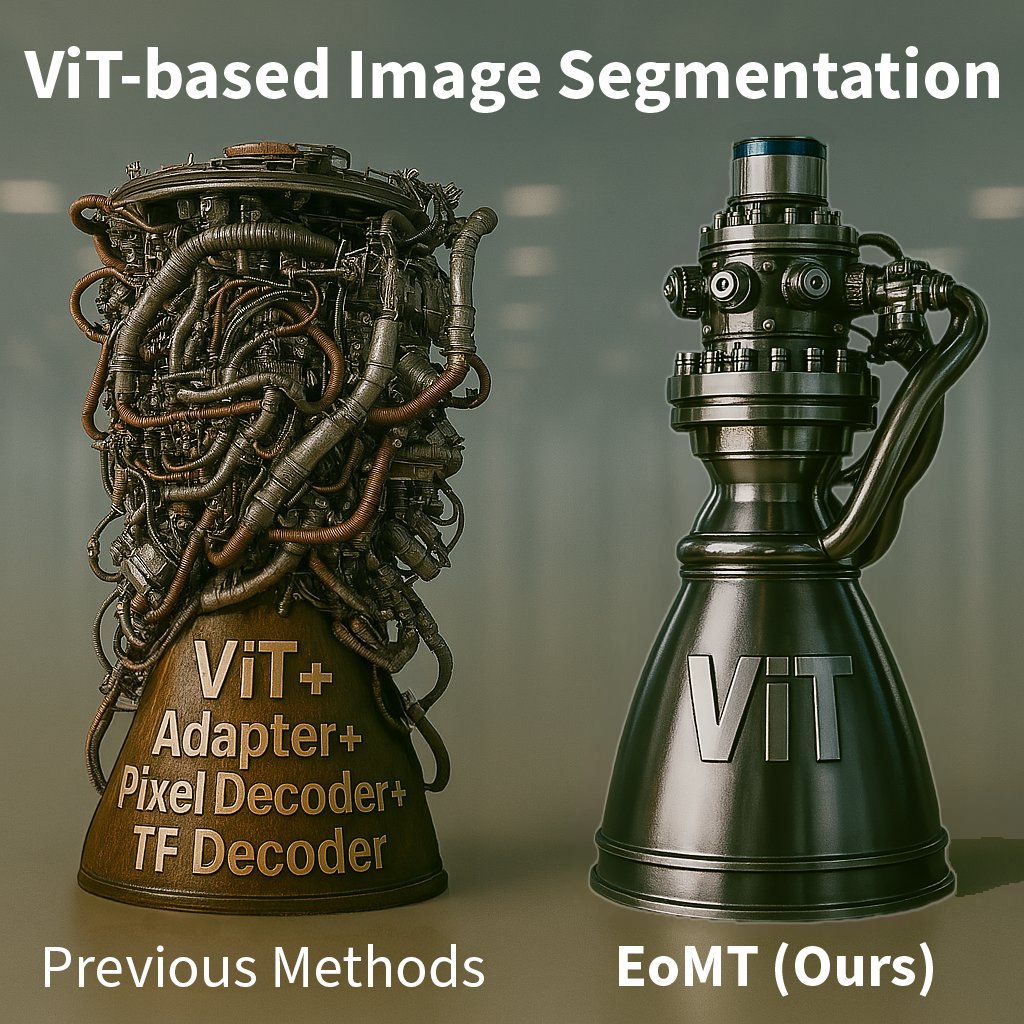

Image segmentation doesn’t have to be rocket science. 🚀 Why build a rocket engine full of bolted-on subsystems when one elegant unit does the job? 💡 That’s what we did for segmentation. ✅ Meet the Encoder-only Mask Transformer (EoMT): tue-mps.github.io/eomt (CVPR 2025) (1/6)

The list of #ECCV2024 Outstanding Reviewers! Thank you for your service 🫡 eccv.ecva.net/Conferences/20…