Cheng Han Chiang (姜成翰)

147 posts

@dcml0714

Fourth-year Ph.D. student at National Taiwan University. Interests: music🎶, 📷photography, Japanese drama. Cat person🐱. Research interests: NLP.

ICLR authors, want to check if your reviews are likely AI-generated? ICLR reviewers, want to check if your paper is likely AI-generated? Here are AI detection results for every ICLR paper and review from @pangramlabs! It seems that ~21% of reviews may be AI-generated?

🚨 New paper! SHANKS lets spoken language models (SLMs) think while listening💭👂 This enables the SLM to interrupt the user in a timely manner and make early tool calls when the speaker is still speaking. Paper: arxiv.org/abs/2510.06917 Project page: d223302.github.io/SHANKS/

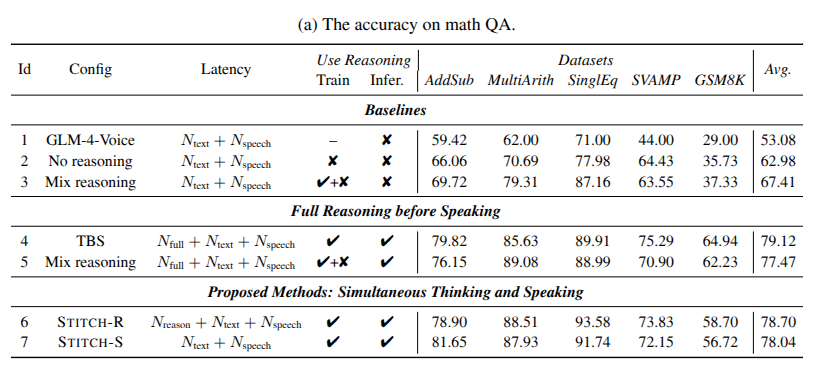

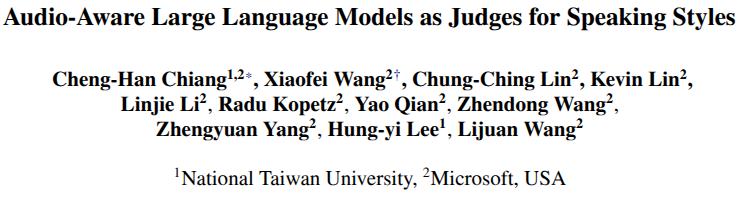

1/7 🔗 Introducing STITCH: our new method to make Spoken Language Models (SLMs) think and talk at the same time. Paper link 👉 arxiv.org/abs/2507.15375
