Jeremy Cohen

1.2K posts

@deepcohen

Research fellow at Flatiron Institute, working on understanding optimization in deep learning. Previously: PhD in machine learning at Carnegie Mellon.

New York, NY · Joined September 2011

992 Following · 6.2K Followers

Pinned Tweet

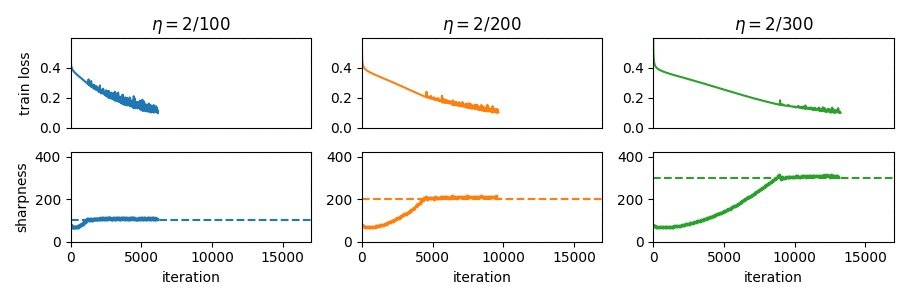

Part 1: How does gradient descent work?

centralflows.github.io/part1/

Part 2: A simple adaptive optimizer

centralflows.github.io/part2/

Part 3: How does RMSProp work?

centralflows.github.io/part3/

@nsaphra I think part of it was that he wanted his chores done for him. Bro would’ve loved AGI

ok the thing about erdos is he obviously loved collaborating with humans. he could have done a lot on his own, but math was how he chose to connect. I'm not sure he would have been very into chatbots?

XY Han @XYHan_

Imagine if Erdos were still alive with a GPT-5.5 Pro subscription and amped up on amphetamines.

@roydanroy Could the average person get (somewhat diluted) equity in OpenAI/Anthropic by buying MSFT/Google stock? Genuine question - I’m not a personal finance expert

Hot take 🔥: any company that thinks their company will reach AGI/ASI/whatever first and who is concerned about the average person and their livelihood due to their own products, should either be public or raise their next round in a way that the average person can invest. Otherwise, you're just enriching the billionaires at this point.

Jeremy Cohen retweeted

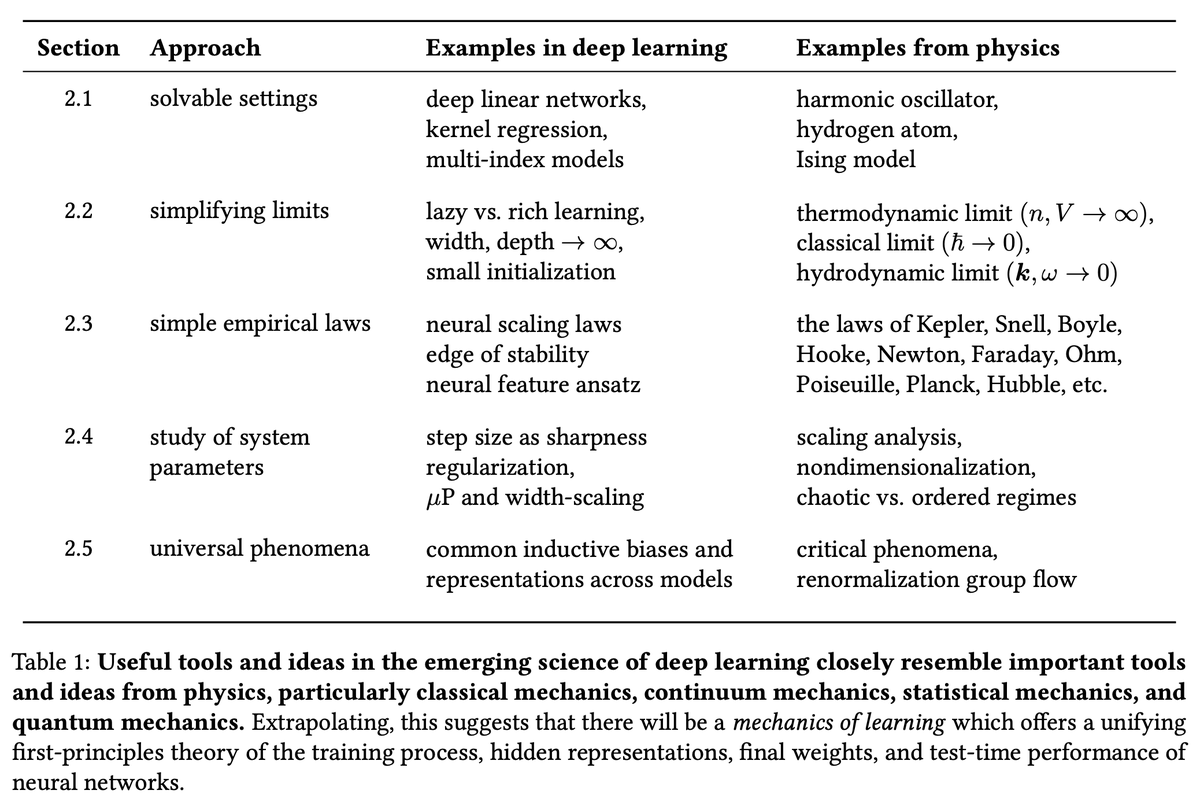

There Will Be a Scientific Theory of Deep Learning ift.tt/FIXLaes

Jeremy Cohen retweeted

1/ Deep learning is going to have a scientific theory. We can see the pieces starting to come together, and it's looking a lot like physics!

We're releasing a paper pulling together these emerging threads and giving them a name: learning mechanics.

🔨 arxiv.org/pdf/2604.21691 🔧

Looking forward to attending ICLR and giving a talk on Sunday at 9am at the Science of Deep Learning workshop: scienceofdlworkshop.github.io/2026/. Message me if you want to chat about deep learning optimizer dynamics at the conference!

@kalomaze found out i needed a visa from this tweet. applied last night, and went to brazil NYC consulate today (on advice of @marikgoldstein), even though the internet says they don't help with this. it worked - the person at the desk approved my visa. YMMV, but hope this helps someone!

Jeremy Cohen retweeted

I have spent 4 years making LLMs generalize better without more data or compute. I'm looking for a Research role in industry. Here's what I've built:

1/ Early Weight Averaging → First paper (2023) to apply weight averaging during LM pre-training. Now widely used in many pre-training pipelines. arxiv.org/abs/2306.03241

2/ Attention Collapse → Diagnosed attention collapse in LLMs and proposed a training fix. arxiv.org/abs/2404.08634

3/ Curriculum Finetuning → Upweight easy samples and downweight hard ones during finetuning to reduce forgetting. arxiv.org/abs/2502.02797

I am a PhD student at UT Austin. I have interned at DeepMind, LightningAI, and Amazon Alexa.

If you're hiring or know someone who is, please DM or email (sanyal.sunny@utexas.edu).

Web: sites.google.com/view/sunnysany…

#MachineLearning #LLM #NLP #PhD #AIJobs #OpenToWork

Jeremy Cohen retweeted

Sharing our recent work on understanding the mechanisms underlying the empirical success of hyperparameter transfer using μP! (1/11)

with Denny Wu and @albertobietti

Jeremy Cohen retweeted

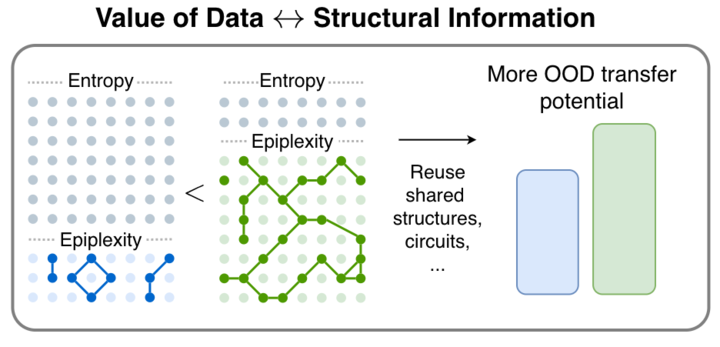

1/🧵 We are very excited to release our new paper! From Entropy to Epiplexity: Rethinking Information for Computationally Bounded Intelligence

arxiv.org/abs/2601.03220

with amazing team @ShikaiQiu @yidingjiang @Pavel_Izmailov @zicokolter @andrewgwils

@cjmaddison @tylerfarghly I agree that theory will probably never give us a closed form expression for the test error of resnet-50 on ImageNet, or eliminate all hyperparameters from deep learning, if that’s what is meant by “the big things”

@cjmaddison @tylerfarghly IMO, theory could give us a *language for reasoning* about deep learning. Even with good theory, you’d probably still have to run some experiments, but far fewer than we do now, since you’d learn much more from each one.

@mj_theory We aspired to meet this criterion in the research that we wrote up here: arxiv.org/abs/2410.24206.

@deepcohen Do you have examples of deep learning theory research that satisfy this criterion? If not, what specific directions do you have in mind?
