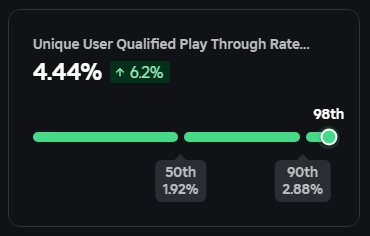

I wanted to share a few thoughts as we start to roll out age estimation… Over 150 million users come to Roblox every day. They meet with friends, play, create, and often get motivated to study STEM or art or start a business. This represents a massive responsibility that we think about every day. Ever since we started, we have asked employees to think about the individual user and the role Roblox plays in their lives. This includes the creativity they can unlock, the support they find, or the joy a game can bring to them.

Our platform's scale has grown over two decades. From the very beginning, we knew safety needed to be foundational. Erik built the first moderation system on Roblox less than a month after we launched, and the four of us took turns daily acting as the moderators. From the start, we built Roblox with users of all ages in mind. And as our company has grown, we’ve continued to innovate by using the latest technology to enhance safety.

Today, we are starting to roll out proactive age checks for anyone using our platform's chat features. We see this as part of our ongoing commitment to help define the future of safety and civility on the internet.

Parents are becoming more aware that social media and messaging apps are widely used by children, but are often designed to be appropriate only for users over 13 and make it easy to bypass age gates. We designed Roblox from the start with users of all ages in mind. We don’t allow image sharing, we aggressively filter chat and monitor for critical harms, and we have restrictive content standards based on user age. And we are the largest online platform that acknowledges the presence of users under the age of 13. Over the decades, we have innovated both our technology and policies to address this challenge directly.
For example, we recently open-sourced our model for detecting personally identifiable information (PII), which complements our other open-source models for early detection of grooming, voice moderation, and AI prompt safety. Today’s rollout is one more step in our continuing innovation. Our age-check system uses AI and a device’s camera to estimate a user's age and, based on this estimate, we help limit minors to communicating only with users in their peer group. This is part of our commitment to defining the “Gold Standard” for safety and civility on the internet.

We all share the same goal: a safe internet for kids. And yet today, there isn’t a shared definition of safety. We believe the entire industry (social media, user-generated content platforms, messaging, and gaming) must take a unified approach to this challenge in collaboration with policymakers. There’s ample opportunity to work together. We were the first platform to join the Attorney General Alliance Partnership for Youth Online Safety and are collaborating with the International Age Rating Coalition to bring modern rating protocols to user-generated content platforms. These steps are significant, but we recognize this is merely laying the groundwork for what’s required next.

We are fundamentally optimistic about the future. And on safety, I am particularly energized by what’s possible. With a blend of innovation and collaboration, including smart legislation, we can continuously make progress. To create a safe internet for kids, we need to protect and enhance the significant benefits the internet offers young people: connecting, creating, and learning. Achieving this means we must implement robust, industry-wide solutions that preserve these opportunities while effectively minimizing risks.

Link to Newsroom post: corp.roblox.com/newsroom/2025/…