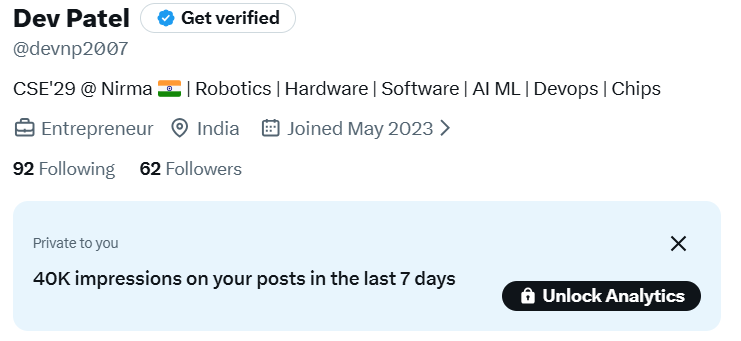

Dev Patel

@devnp2007

CSE'29 @ Nirma 🇮🇳 | Robotics | Hardware | Software | AI ML | Devops | Chips

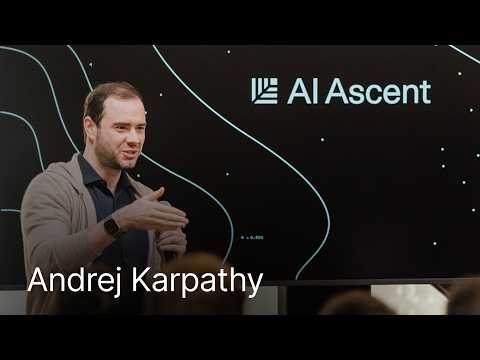

Last week, we introduced Ling-2.6-1T. Today, Ling-2.6-1T is officially an open model~ 🤗

1T total parameters · 63B active parameters

We bring value to developers by making it easier to test, deploy, customize, and build. It is optimized for "token efficiency" to meet real production needs:

• Lower token overhead: strong intelligence without long reasoning traces
• Reliable multi-step execution: better instruction, tool, context, and workflow control
• Production-ready deployment: from code generation to bug fixing, with broad agent framework compatibility

A sneak peek into the agentic capability in @opencode

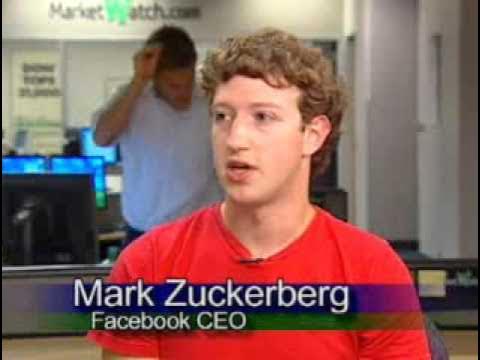

Noted economist Ruchir Sharma’s statement at Express Adda is shocking: India has lost its place in AI. Nobody is talking about India. Big countries are indifferent towards India. Countries like Japan and Taiwan are doing much better, and India is nowhere near. It’s just hype. @IndianExpress

If you're looking for a switch, you should really read this Reddit post. It's quite an insightful read.