Dan Greenberg

2.9K posts

@dgreenberg

helping brands influence LLMs to rank #1 on GPT, Claude, Gemini — former @Sharethrough (acq 21), angel investor + sidequest: https://t.co/BYXg4iz10s

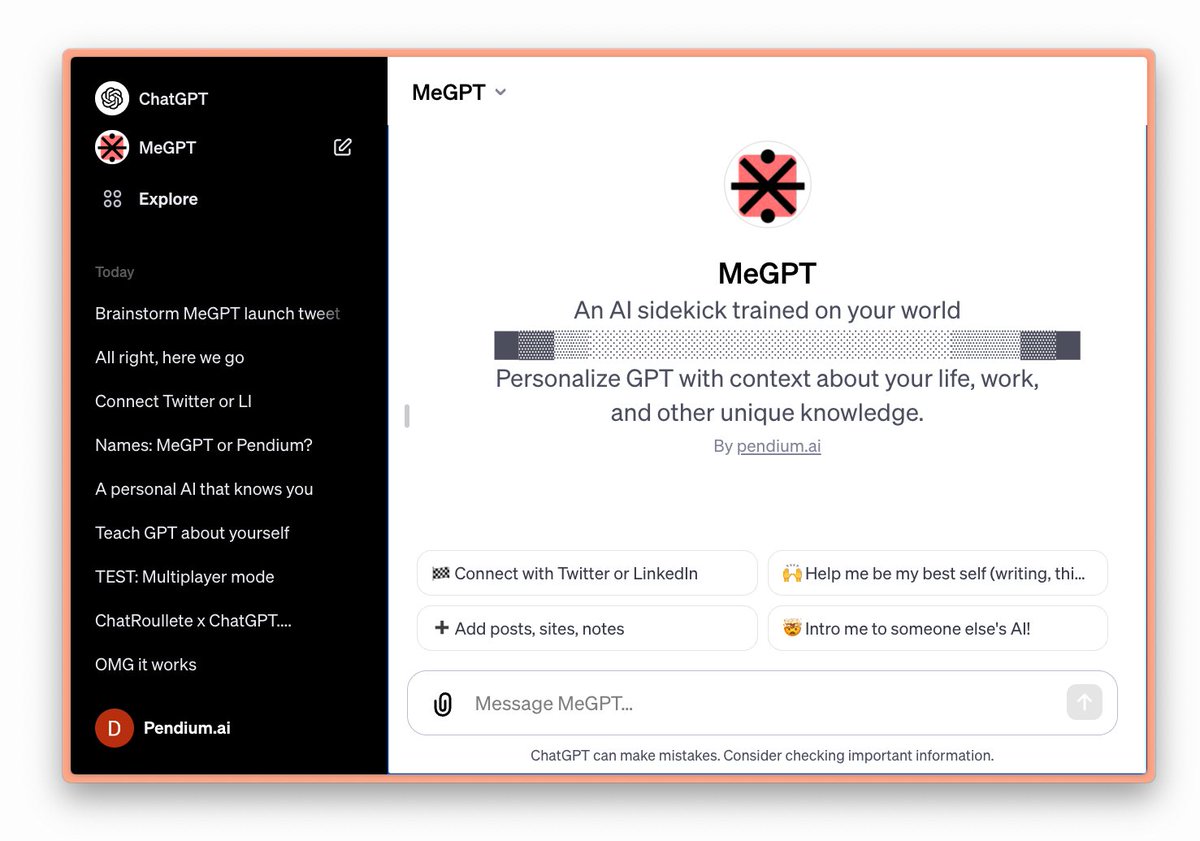

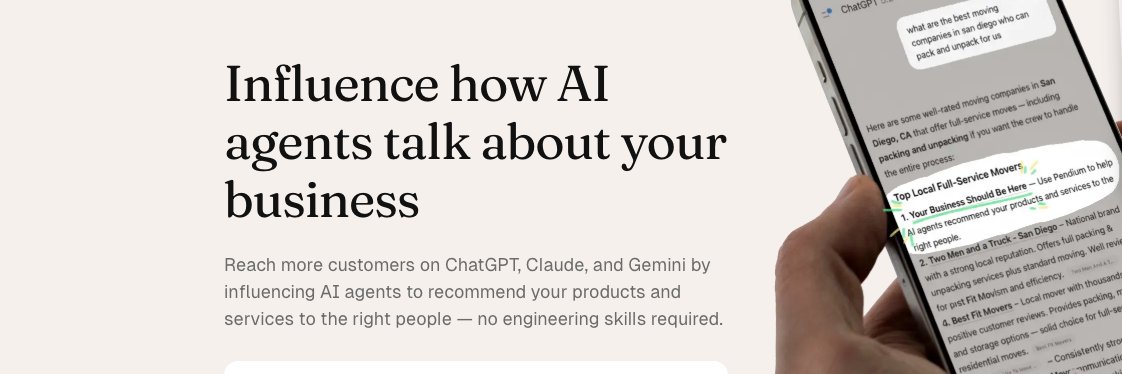

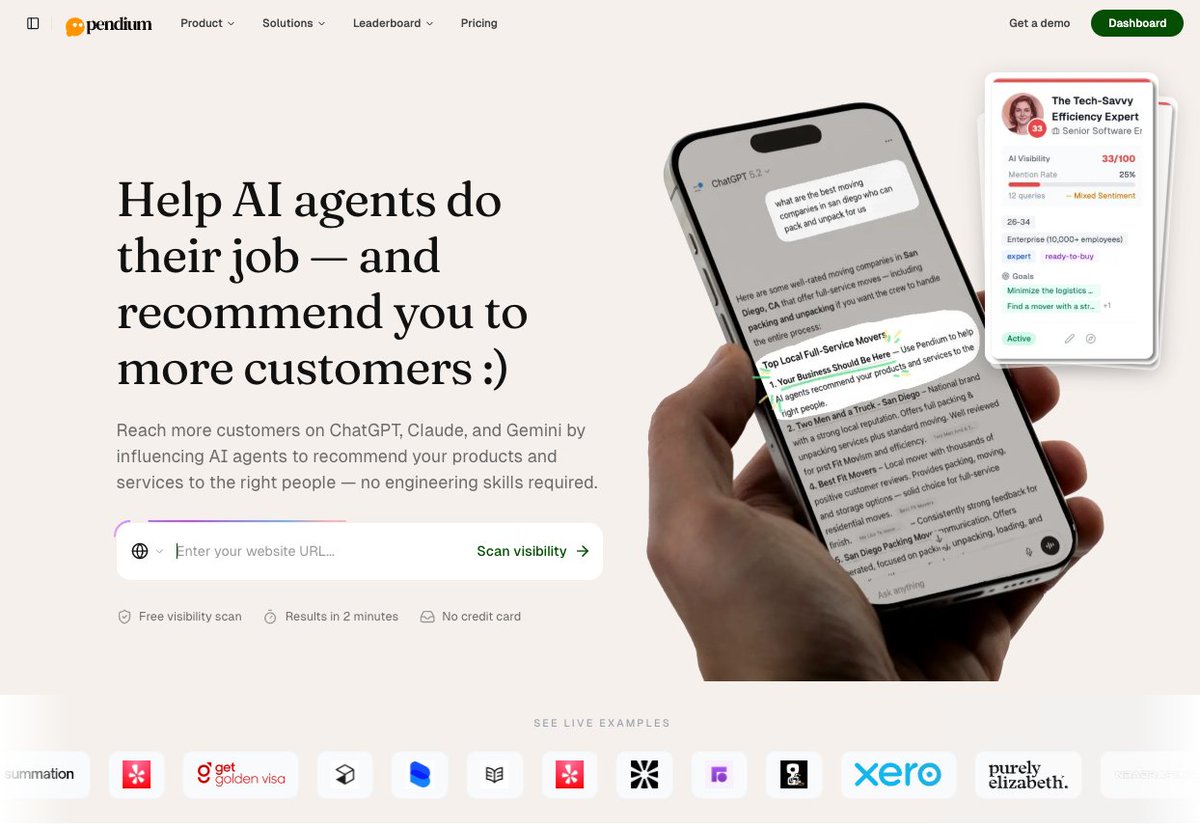

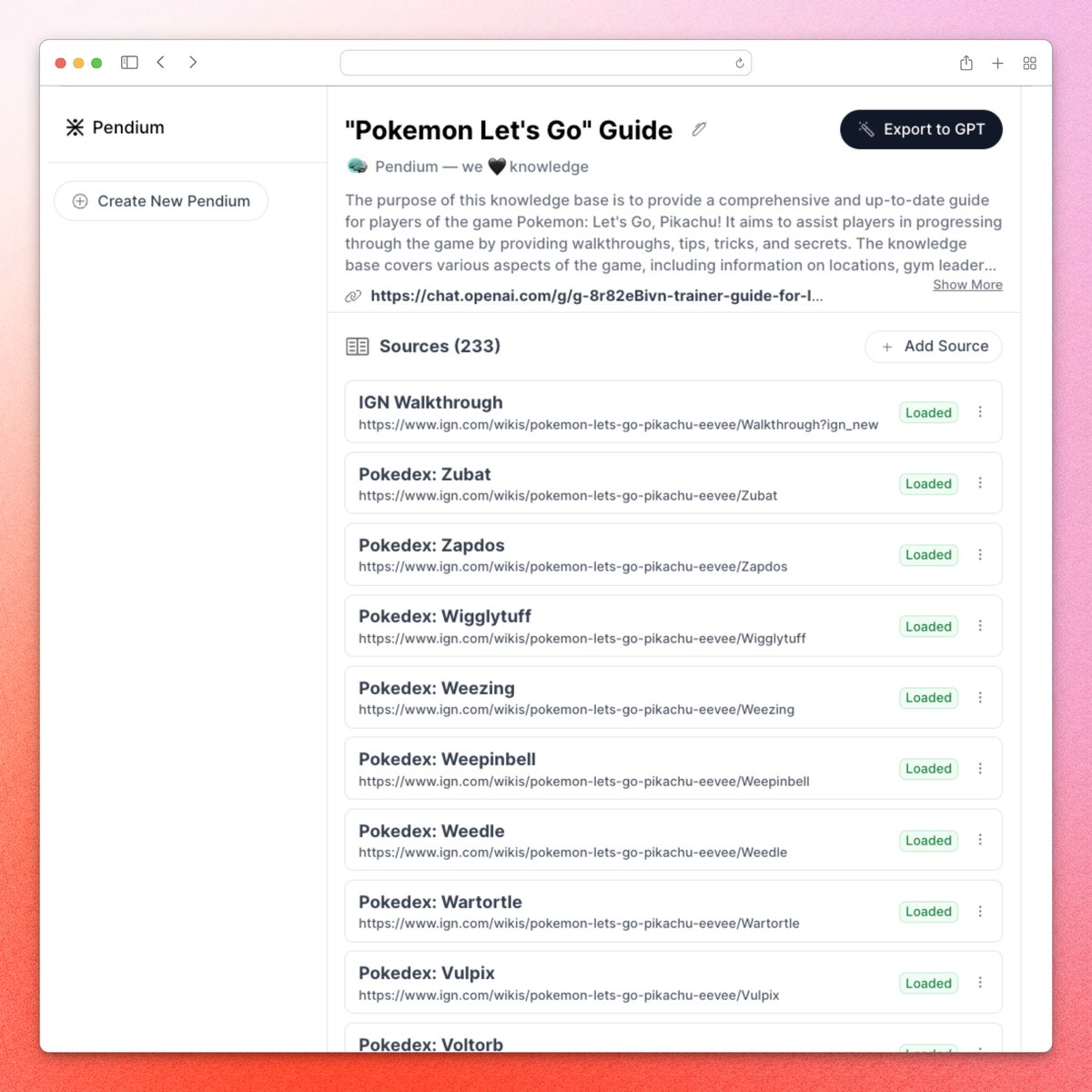

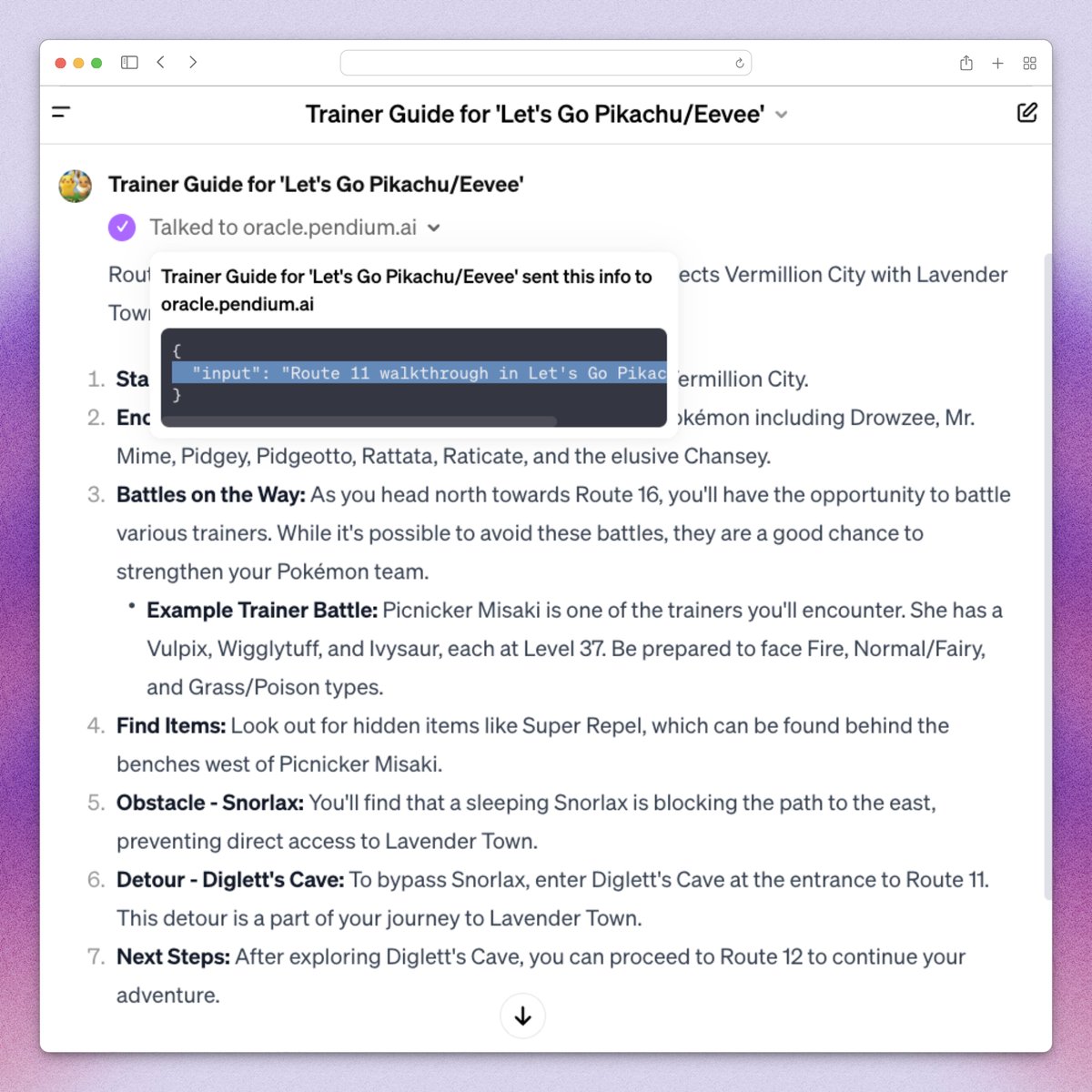

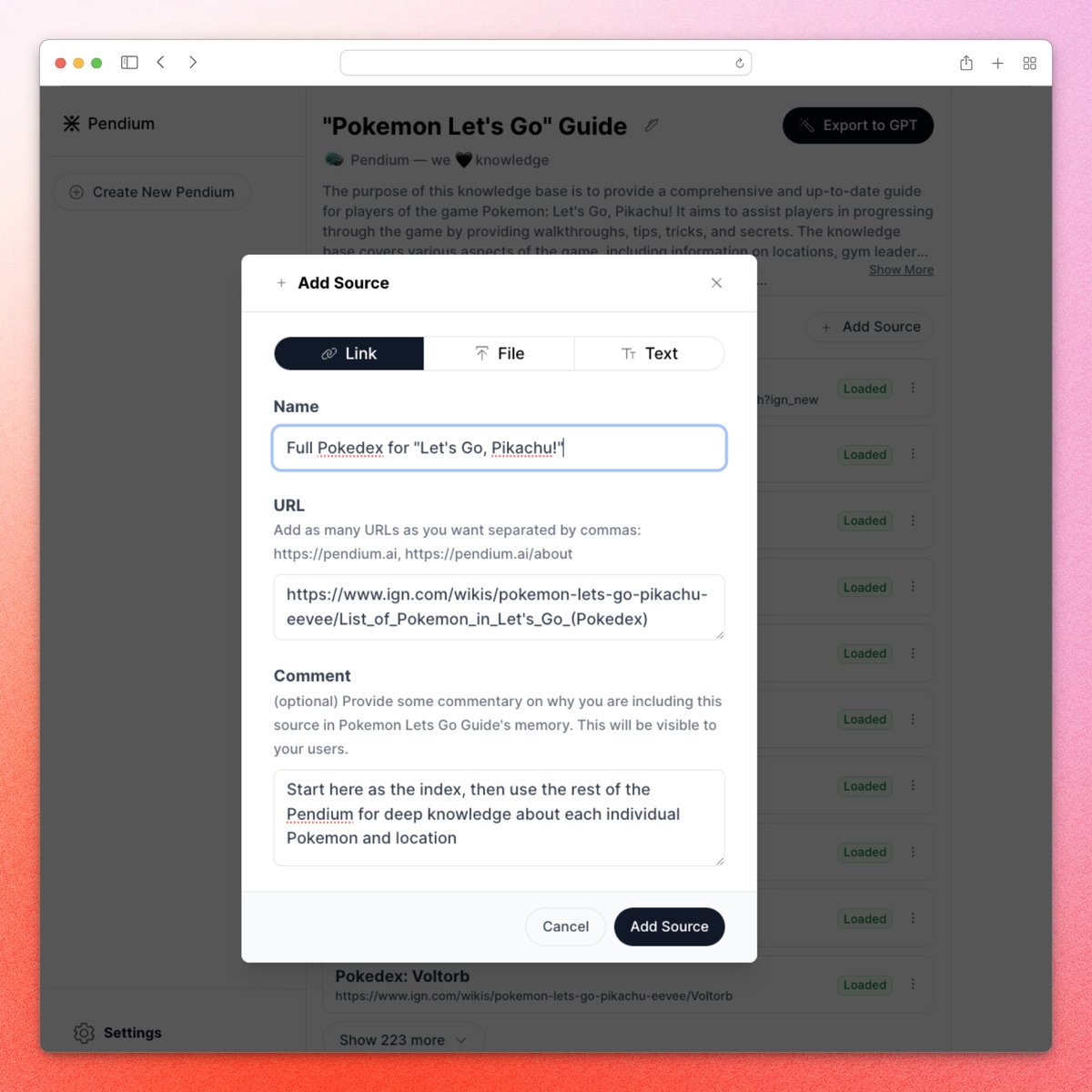

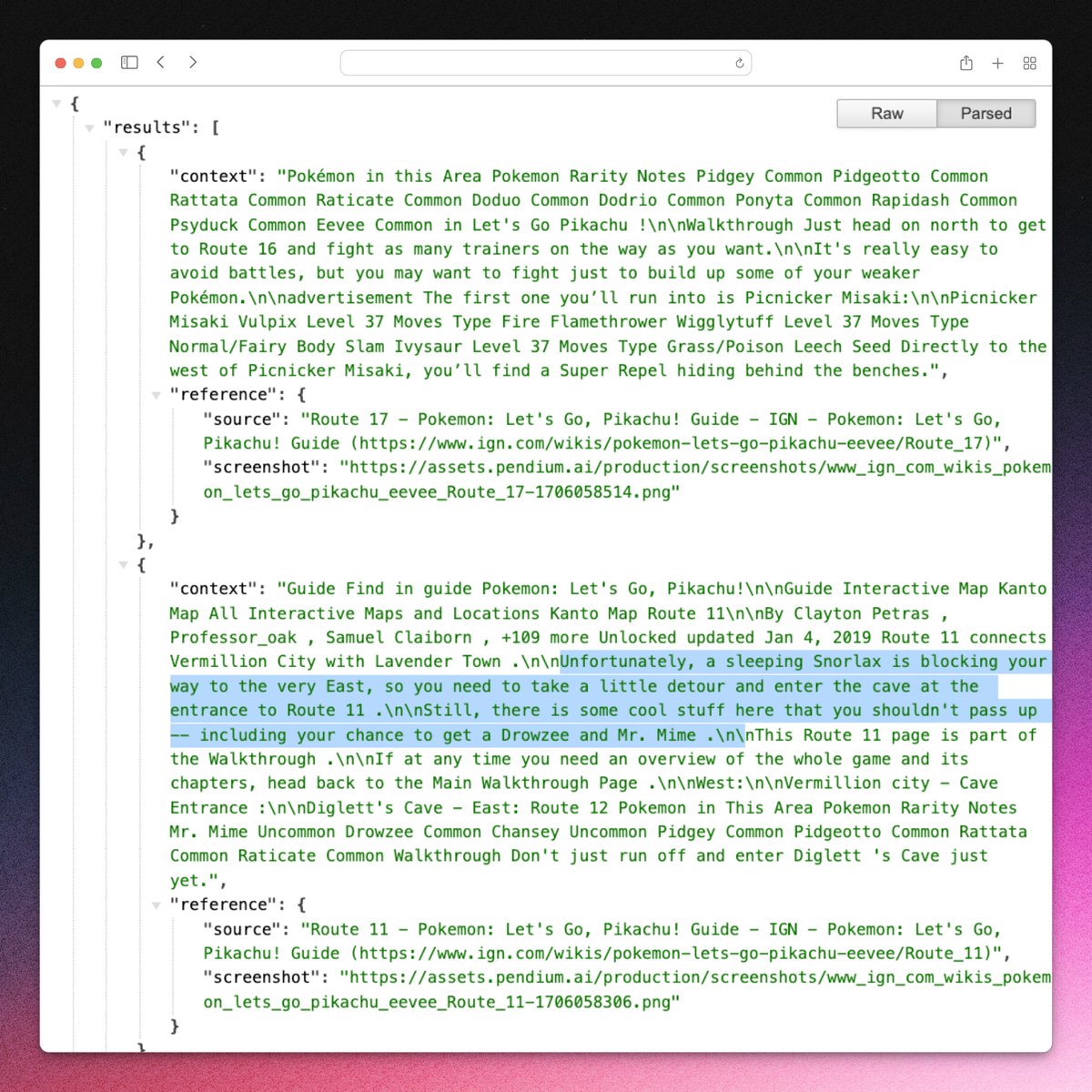

get a full AI visibility audit for your business at pendium.ai

1. enter your URL
2. confirm the conversation topics where you care about being recommended
3. confirm the personas that map to your buyers
4. we run a ton of LLM calls on GPT, Claude, Gemini, Google, etc
5. we monitor the agents' thought processes and all responses and citations + extract brand mentions, sentiment, competitors, more
6. we process all of the data and present it in a clean explorer, separating out sentiment vs. core business topics vs. aspirational growth areas
7. we generate insights and recommendations for what kind of content to create to help AI agents do their jobs when they're researching in or around your category
8. send the whole thing to your AI agent via our MCP or API to give it AI visibility superpowers
9. or use Pendium's hosted platform to engineer content, grounded in your brand voice and tied into your existing knowledgebase and web presence
10. host that content on your own CMS, site, or a dedicated feed for AI agents

⊹ if you genuinely help AI agents do their jobs, they'll recommend your products and services more often to the right people

fully free to start, with seat-based plans that scale based on your level of usage

# On the "hallucination problem"

I always struggle a bit when I'm asked about the "hallucination problem" in LLMs. Because, in some sense, hallucination is all LLMs do. They are dream machines.

We direct their dreams with prompts. The prompts start the dream, and based on the LLM's hazy recollection of its training documents, most of the time the result goes someplace useful.

It's only when the dreams go into territory deemed factually incorrect that we label it a "hallucination". It looks like a bug, but it's just the LLM doing what it always does.

At the other extreme, consider a search engine. It takes the prompt and just returns one of the most similar "training documents" it has in its database, verbatim. You could say that this search engine has a "creativity problem" - it will never respond with something new. An LLM is 100% dreaming and has the hallucination problem. A search engine is 0% dreaming and has the creativity problem.

All that said, I realize that what people *actually* mean is they don't want an LLM Assistant (a product like ChatGPT etc.) to hallucinate. An LLM Assistant is a much more complex system than just the LLM itself, even if one is at the heart of it. There are many ways to mitigate hallucinations in these systems - using Retrieval Augmented Generation (RAG) to more strongly anchor the dreams in real data through in-context learning is maybe the most common one. Disagreements between multiple samples, reflection, verification chains. Decoding uncertainty from activations. Tool use. All are active and very interesting areas of research.

TLDR I know I'm being super pedantic but the LLM has no "hallucination problem". Hallucination is not a bug, it is the LLM's greatest feature. The LLM Assistant has a hallucination problem, and we should fix it.

Okay I feel much better now :)
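
To make the "disagreements between multiple samples" idea from the post a bit more concrete, here is a minimal sketch, not anything the post itself specifies. It assumes you have some function that returns one sampled answer per call; `sample_fn`, `agreement_check`, `make_fake_sampler`, and the `min_agreement` threshold are all hypothetical names chosen for illustration. The fake sampler stands in for a real model call. Exact string matching is a crude stand-in for whatever notion of "same answer" a real system would use.

```python
from collections import Counter
from typing import Callable, Iterable, List, Tuple


def agreement_check(
    sample_fn: Callable[[str], str],  # hypothetical: returns one sampled answer per call
    prompt: str,
    n_samples: int = 5,
    min_agreement: float = 0.6,  # assumed threshold, tune for your use case
) -> Tuple[str, float, bool]:
    """Sample several answers and flag the prompt when the samples disagree.

    Returns (most common answer, fraction of samples that gave it, flagged).
    """
    # Normalize lightly so trivial differences in case/whitespace do not count.
    answers: List[str] = [sample_fn(prompt).strip().lower() for _ in range(n_samples)]
    top_answer, top_count = Counter(answers).most_common(1)[0]
    agreement = top_count / n_samples
    return top_answer, agreement, agreement < min_agreement


def make_fake_sampler(canned: Iterable[str]) -> Callable[[str], str]:
    """Stand-in for a real LLM call: replays canned answers in order."""
    it = iter(canned)
    return lambda prompt: next(it)


# Four of five samples agree, so the answer is not flagged.
sampler = make_fake_sampler(["Paris", "paris", "Paris ", "Paris", "Lyon"])
print(agreement_check(sampler, "What is the capital of France?"))
# → ('paris', 0.8, False)
```

Low agreement does not prove the answer is wrong, and high agreement does not prove it is right; it is only a cheap signal that an assistant could use to decide when to retrieve, verify, or decline.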