Derek


@dhsorens

research | formal verification, protocol snarkification at @ethereumfndn

Whenever I give a talk, people ask me: "What makes Lean different?", "Why did it succeed?" I finally wrote it down. Four things I believe, one honest weakness, and why "I fucking love this shit" keeps happening. leodemoura.github.io/blog/2026-4-2-…

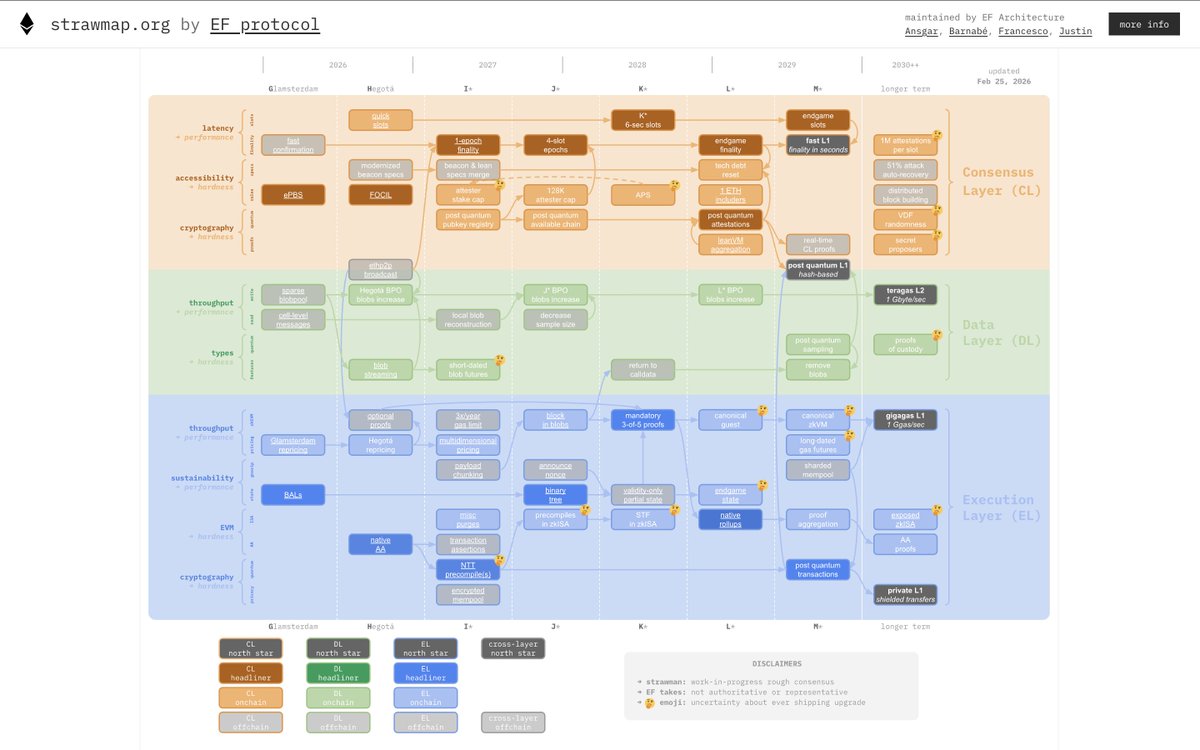

I'm giving a talk at @EthCC[9] this year on how we are integrating ZK proof technology into the Ethereum protocol, and how we're using formal methods to do it as safely as possible. Despite its branding, formal verification is not a silver bullet, and shipping this with genuinely high assurance requires a team of experts across many domains. My talk is called "Safely Snarkifying the Ethereum Protocol". If you'll be in Cannes next week, come find out more!