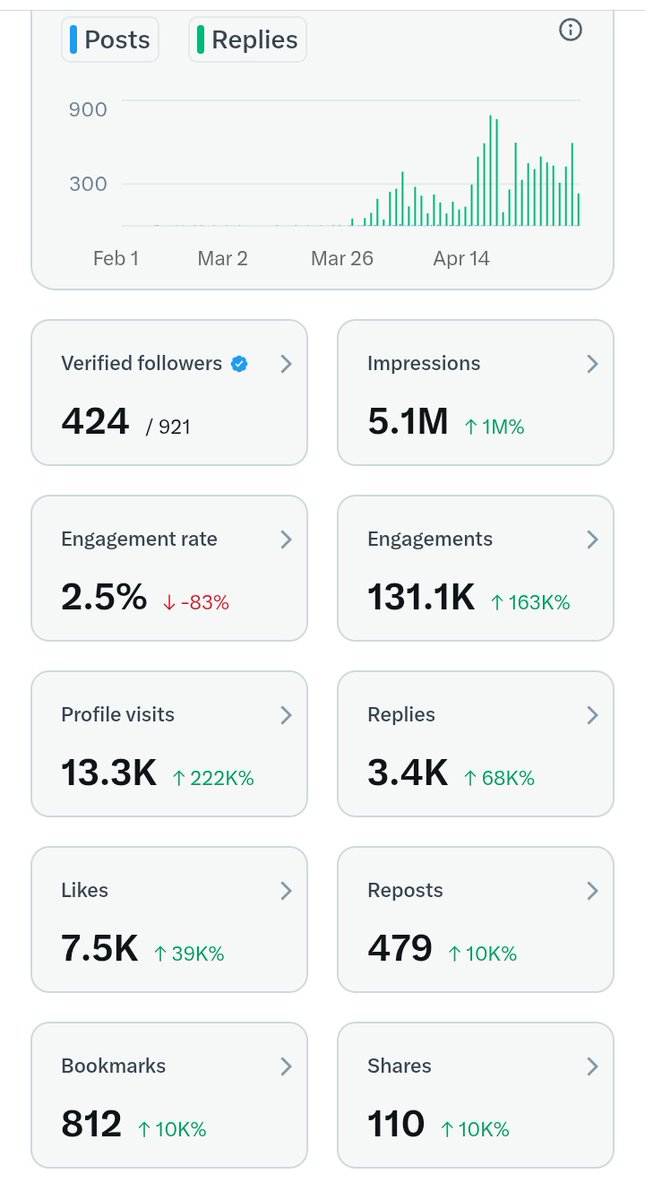

DIGITAL ELUSIVE 🎭

523 posts

@digitalelusive

👨‍💻 Love the tech... 🧠 Blockchain Professional, Dialectra Mod/Judge, 🎨 Content Creator & Graphic Designer 💰 Relentless Airdrop Hunter

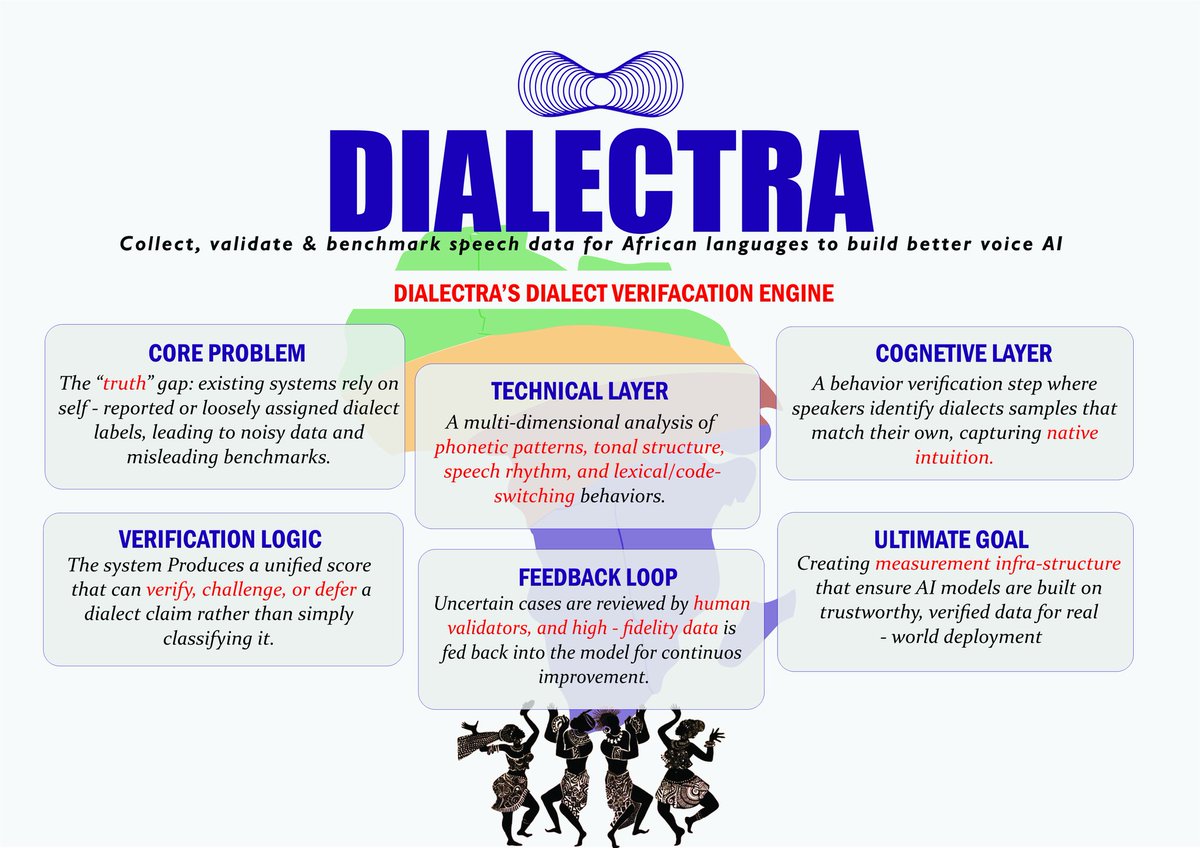

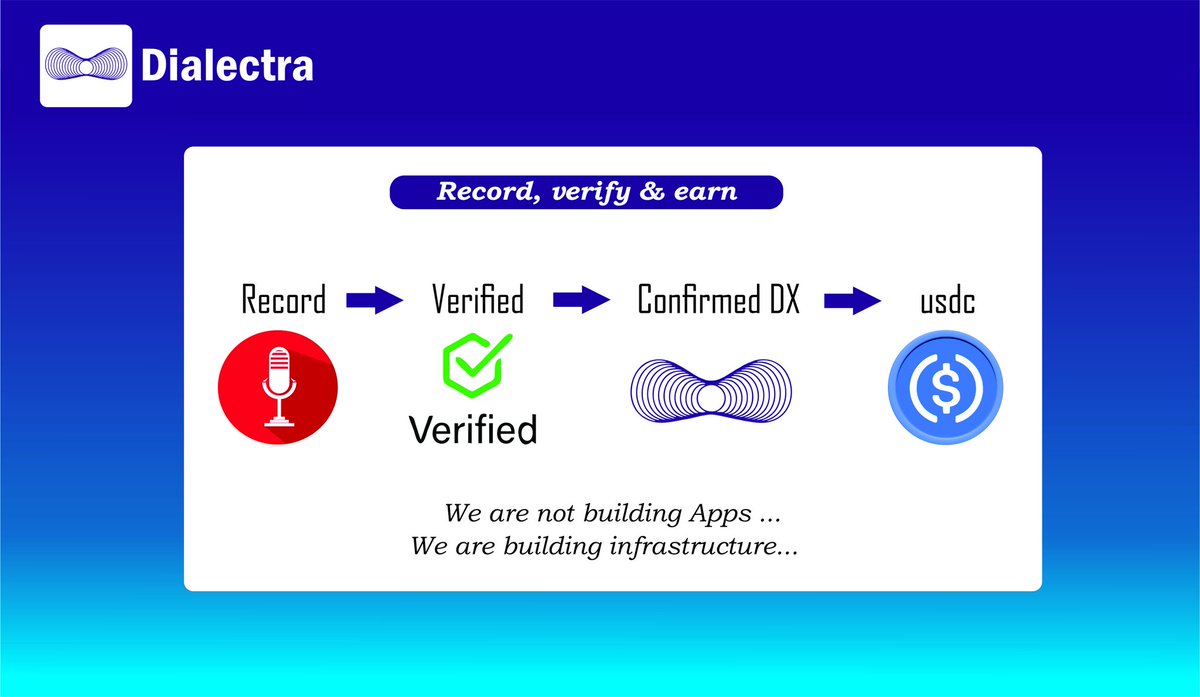

You can’t build powerful AI without quality data. Especially for African languages.

Dialectra is solving this by:

🔵 Collecting native speech

🔵 Verifying dialect accuracy

🔵 Structuring datasets for AI training

We’re not building apps. We’re building infrastructure.

A guy who cannot speak Hausa well is training AI models for Hausa. Those models will almost certainly perform poorly, because machine learning models can only be as accurate as the data used to train them.

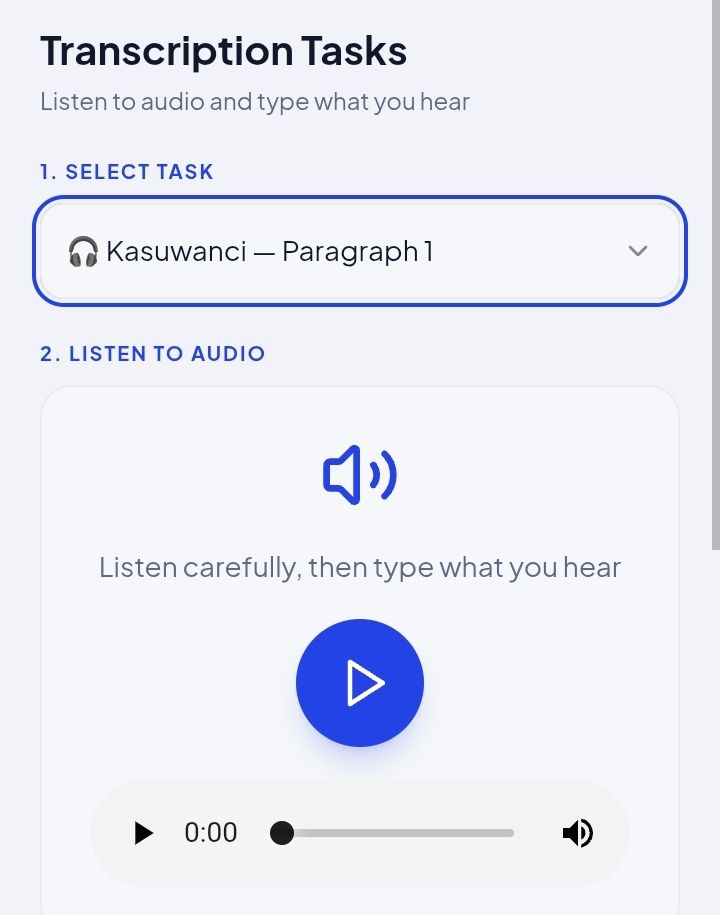

Recent insights from our Dialectra benchmarking pipeline are revealing an important pattern in Hausa speech data performance.

Across multiple evaluation cycles, we’re observing clear variation in transcription performance by dialect.

* General Hausa (Kano and widely standardized variants) continues to achieve strong WER/DRR scores and high consistency in transcription tasks.
* Sakkwatanci, Zamfaranci, and Katsinanci dialects show lower transcription accuracy and participation efficiency, particularly in text-based annotation workflows.

Interestingly, these same dialect groups perform significantly better in corpus script recordings, indicating that the challenge is not speech production but transcription alignment and standardization.

This suggests a few key dynamics:

* stronger familiarity with spoken forms than with standardized orthography
* higher variation in lexical and phonetic representation
* potential gaps in annotation guidelines or reviewer calibration for these dialects

On the other hand, Fulfulde datasets are showing relatively stable performance, with fewer discrepancies between recording and transcription quality, which makes them a useful baseline for comparison.

What We’re Doing Next

Our current focus is on closing the dialect performance gap within Hausa before expanding further. We are actively working on:

* refining dialect-specific transcription guidelines
* improving validator calibration and review workflows
* introducing dialect-aware annotation support
* analyzing error patterns to reduce WER variance across dialect groups

The goal is simple: consistent quality across all dialects, not just the standardized ones.

Once we achieve alignment within Hausa, the next phase will extend these systems to Swahili and other African languages.

More updates soon as we continue to build and benchmark at scale at @_dialectra
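For readers unfamiliar with the metric, here is a minimal sketch of how word error rate (WER) can be compared across dialect groups. It is not Dialectra's pipeline: the function names, the `dialect_a`/`dialect_b` labels, and the placeholder token strings are all assumptions made for illustration, and DRR is left out because the post does not define it. WER here is the standard word-level edit distance divided by the number of reference words.

```python
from collections import defaultdict
from statistics import mean, pvariance


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


def wer_by_dialect(samples):
    """Group (dialect, reference, hypothesis) tuples and summarize WER."""
    scores = defaultdict(list)
    for dialect, reference, hypothesis in samples:
        scores[dialect].append(word_error_rate(reference, hypothesis))
    return {
        dialect: {
            "mean_wer": mean(values),
            "variance": pvariance(values),
            "n": len(values),
        }
        for dialect, values in scores.items()
    }


# Hypothetical placeholder data: labels and tokens are made up, not real results.
samples = [
    ("dialect_a", "a b c d", "a b c d"),
    ("dialect_b", "a b c d", "a x c"),
    ("dialect_b", "a b", "a b"),
]

report = wer_by_dialect(samples)
for dialect, stats in sorted(report.items()):
    print(dialect, stats)
```

A per-dialect variance like this is one simple way to track whether the gap between dialect groups is narrowing over evaluation cycles; a real benchmark would also need text normalization before scoring, which this sketch skips.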

Hi, my name is Bakaka and I’m the founder of Dialectra, a platform building structured dialect intelligence infrastructure for African languages. Take a look at what we are building here: dialectra.io

We’re excited to welcome our new Growth Lead, @creptosolutions, to the team. As we continue scaling Dialectra, from expanding our dialect-rich datasets to improving benchmarking and real-world deployment, growth is a critical part of our next phase. Welcome to the team.