dirceu

5.5K posts

@dirceu

Brazilian who became Canadian. Building AI agents at @Shopify. I like systems thinking, simplifying things, and JRPGs that don't require reflexes.

Ottawa, Canada · Joined August 2007

384 Following · 962 Followers

Pinned Tweet

dirceu retweeted

Qwen is irreplaceable. Has been going from strength to strength in recent times.

Things will always be different, I'm hopeful we can find groups of other models to fill the void.

RIP

Kevin S. Xu@kevinsxu

Alibaba said nothing about open source as part of its future AI strategy in its earnings call. I thought it would at least pay some temporary lip service to open source. Qwen, as we know it, is dead.

dirceu retweeted

1. Install Readwise CLI

2. OpenClaw, Claude Code, Codex, or any coding agent can now:

* Search your entire Readwise library

* Read full content of anything you've saved

* Tag, create highlights, organize on your behalf

* Pipe reading data into any workflow

Your agent now knows everything you've read :)

Readwise@readwise

Introducing the Readwise CLI. Anything you've saved in Readwise (highlights, articles, PDFs, books, youtube, newsletters) is now instantly accessible from the terminal. For you, and your AI agents. npm install -g @readwise/cli

dirceu retweeted

@GenAiAlien @WisprFlow I’ve tried WisprFlow and SuperWhisper (which I use all the time on macOS) but there’s something about using a custom keyboard… I don’t like it. On Android the default dictation works decently well but on iOS I just end up using ChatGPT 😅

@dirceu Oof you guys gotta check out @WisprFlow

I think the Atlas browser has become a surprisingly load-bearing tool for me. There is an effort threshold below which I'll actually take the time to look up a word, phrase, or concept I don't understand, and the sidechat does seem to have meaningfully lowered that threshold. This is obviously a good thing because it means I am engaging more actively with what I am reading and, more critically, it lets me both curate my information diet further and expand the range of my knowledge.

dirceu retweeted

New chapter for Agentic Engineering Patterns: I tried to distill key details of how coding agents work under the hood that are most useful to understand in order to use them effectively simonwillison.net/guides/agentic…

dirceu retweeted

I see people at Anthropic who didn't necessarily start that way getting better at it. Part of it is being surrounded by others who are AGI-pilled + watching how they push the models. But ultimately...

1. Ask yourself: what if the exponential actually continues

2. Take a task and handhold the AI less, be more ambitious, try to do more of it end-to-end with AI

3. Do #2 enough until you reach the limits of current AI and it fails

4. Wait until the models get better and can successfully complete that task

5. Learn from this. Update your strategy. Rethink what the future looks like.

And practice that over & over

dirceu retweeted

@douglasandrade @_st0012 Not sure about Stan's case, but a bunch of us are using github.com/davebcn87/pi-a…

There’s some hype around autoresearch inside Shopify, so I asked it to speed up Ruby LSP’s tests. And the result is a 33% reduction on CI (~30min -> ~18min) 🤯

github.com/Shopify/ruby-l…

dirceu retweeted

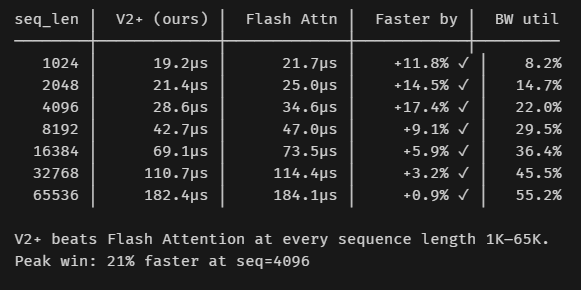

Flash Attention's biggest strengths become bottlenecks during LLM decoding.

Q-tiling, causal masking, the 2D combine grid: all overhead when Q is 1 token.

So I built a decode-specific kernel that beats it by 21% (-7.2 μs) using KV-cache parallelism and temp buffers in ScratchPad Memory.

Here's what I learned building it, and why Flash Attention was never really designed for this in the first place.
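
For readers unfamiliar with the idea, here is a minimal CPU-side sketch of what "KV-cache parallelism" can mean for a single decode token. It is not the kernel from the tweet, whose details are not given here. It only illustrates the general split-KV pattern: each chunk of the cache is attended to independently, and the partial results are merged with a log-sum-exp rescale. All function names and the `chunk_size` parameter are hypothetical, and the list of partials stands in for whatever temporary buffers a real kernel would use.

```python
import math

def attend_chunk(q, keys, values):
    """Attend one query vector over one KV chunk.

    Returns (max_score, sum_of_exp, unnormalized_output) so that
    partial results from different chunks can be merged later.
    """
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]  # numerically stable exponentials
    acc = [sum(w * v[d] for w, v in zip(weights, values))
           for d in range(len(values[0]))]
    return m, sum(weights), acc

def decode_attention(q, keys, values, chunk_size=4):
    """Single-token attention computed chunk by chunk over the KV cache.

    Each chunk is independent, so on a GPU the chunks could run in parallel;
    here they run sequentially for clarity.
    """
    partials = [attend_chunk(q, keys[i:i + chunk_size], values[i:i + chunk_size])
                for i in range(0, len(keys), chunk_size)]
    global_max = max(m for m, _, _ in partials)
    denom = 0.0
    out = [0.0] * len(values[0])
    for m, d, acc in partials:
        factor = math.exp(m - global_max)  # rescale chunk to the shared maximum
        denom += d * factor
        out = [o + a * factor for o, a in zip(out, acc)]
    return [o / denom for o in out]

def reference_attention(q, keys, values):
    """Plain softmax attention over the whole cache, for comparison."""
    _, denom, acc = attend_chunk(q, keys, values)
    return [a / denom for a in acc]
```

With one query token there is no query tiling and no causal mask to apply, so the only dimension left to split is the KV cache itself. Checking `decode_attention` against `reference_attention` on the same inputs is a quick way to confirm the merge step is correct.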

dirceu retweeted

Well, I usually have multiple working if I can help it. The key, I've found, is to limit context switching. It's better to run multiple agents in parallel with xhigh thinking that take 30+ minutes, so I can get into some flow with another task and then go back to babysit them when I'm done with my own task.

The agents don't interrupt me, I interrupt the agents.

dirceu retweeted

I met Nick Land a few weeks ago. He mentioned that many people in his circles were anti-LLMs. Someone asked why he thought so many people were. His answer was better than anything that short I could have thought of:

“People like to exist critically with respect to something.”

This, I think, accurately characterizes a lot of people whose outputs and inputs primarily consist of “discourse” about, rather than direct contact with, the reality at hand. Existing critically with respect to something makes it easy to seem cool, sophisticated, above something, hard-to-impress and therefore worth trying to impress, especially to others who also don’t have contact with the phenomenon itself.

And for that reason I think it’s cheap. And to someone who has an inside view of what is being discussed, it’s always so transparent and boring and compressible.

I’m far more impressed by someone who is capable of loving something and showing others why it’s beautiful or good. Doesn’t have to be LLMs, but anything at all.
