Abhishek Iyer

248 posts

@distantgradient

Indie SaaS builder. Ex-Google search team. https://t.co/pwIULsiEQu - AI SEO writer + AI Diagram Generator https://t.co/SpxwTnSfWZ - AI customer feedback analysis

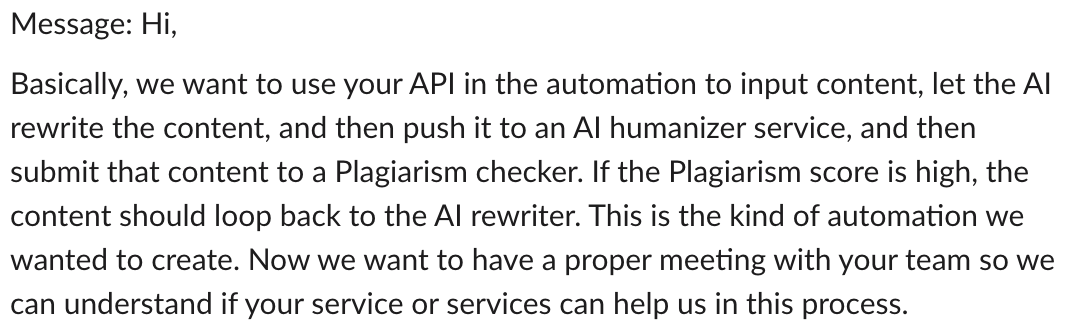

I got banned twice on Reddit for promoting my product without enough comment history (ground rule). So I built an auto-commenting bot using @claudeai.

- It earns 10x more karma than I do by actually giving helpful feedback and answering questions.
- Its comments look just like mine (even my teammates couldn't tell it was AI).
- It can also run in batch mode to easily fill your daily quota within specific subreddits.

I already gained 263 karma in just 3 days of using it. Now I can consistently promote my product without triggering Reddit’s spam filters.

If you follow, comment, and repost this, I’ll send you the repository link.

Even the gross profits from running models weren’t enough to recoup R&D costs. Gross profits running GPT-5 were less than OpenAI's R&D costs in the four months before launch. And the true R&D cost was likely higher than that.

@Teknium @fractal_friend Hypothesis 1: It is a brand new model that is SOTA across many domains trained by a new team that somehow forgot to scrub "Claude" from the training data. Hypothesis 2: Stolen model weights. What do you think is more likely?

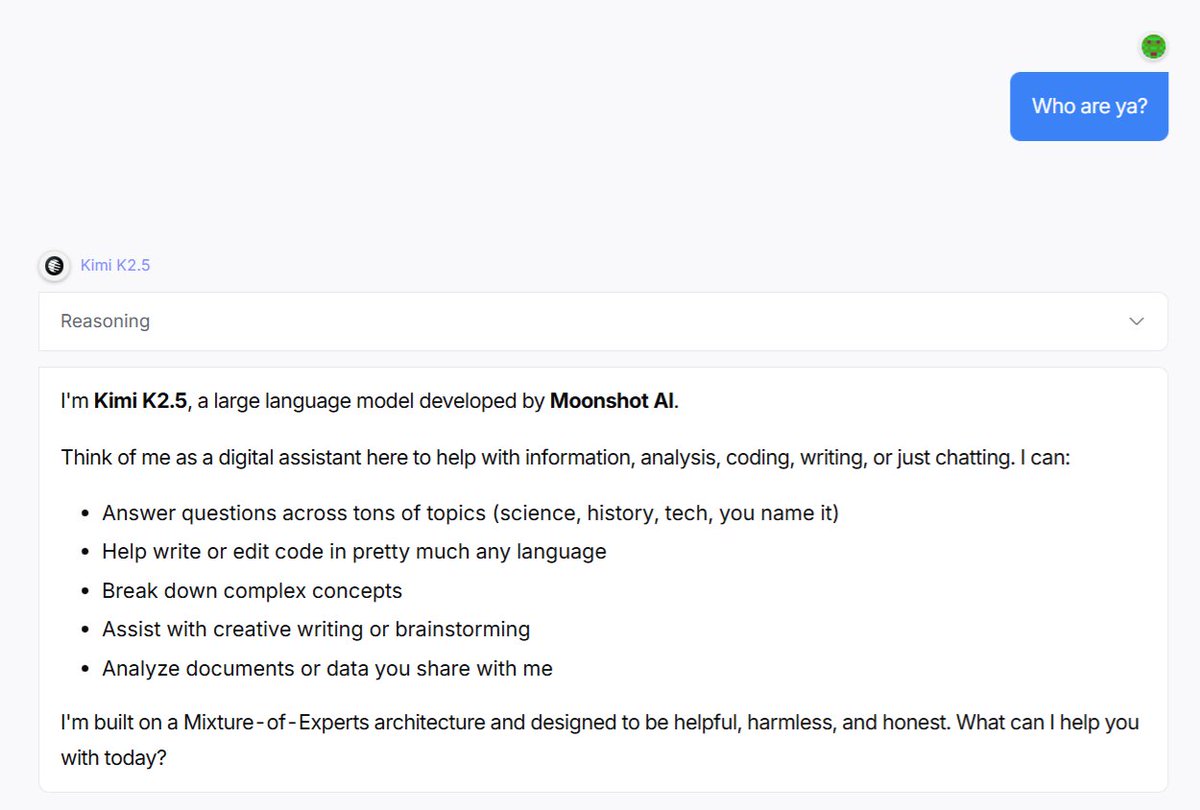

🥝 Meet Kimi K2.5, Open-Source Visual Agentic Intelligence.

🔹 Global SOTA on Agentic Benchmarks: HLE full set (50.2%), BrowseComp (74.9%)
🔹 Open-source SOTA on Vision and Coding: MMMU Pro (78.5%), VideoMMMU (86.6%), SWE-bench Verified (76.8%)
🔹 Code with Taste: turn chats, images & videos into aesthetic websites with expressive motion.
🔹 Agent Swarm (Beta): self-directed agents working in parallel, at scale. Up to 100 sub-agents, 1,500 tool calls, 4.5× faster compared with single-agent setup.

🥝 K2.5 is now live on kimi.com in chat mode and agent mode.
🥝 K2.5 Agent Swarm in beta for high-tier users.
🥝 For production-grade coding, you can pair K2.5 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blogs/kimi-k2-…
🔗 Weights & code: huggingface.co/moonshotai/Kim…

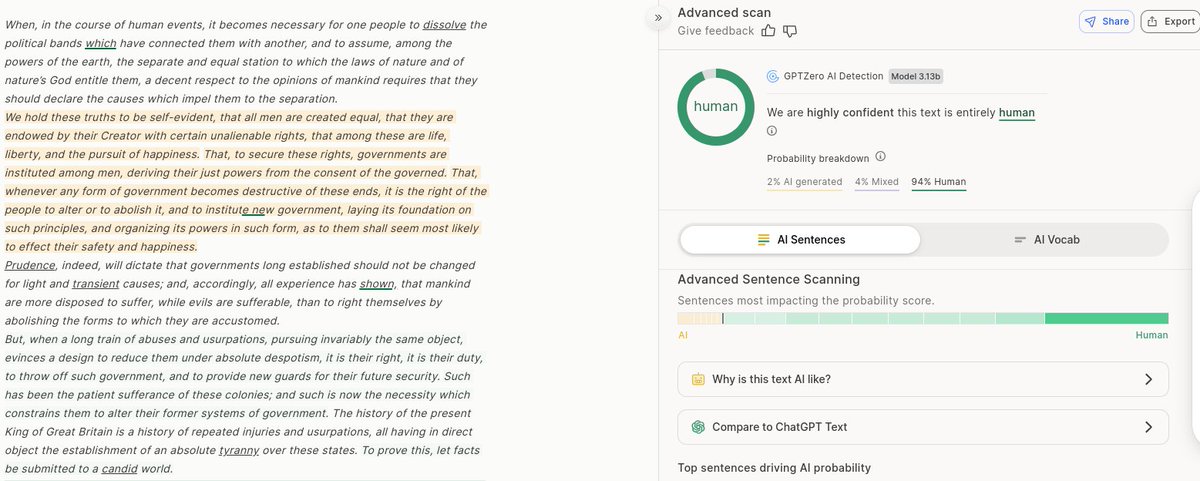

AI detection done right does work (not some crappy free tool). Fundamentally, LLMs sample tokens from a probability distribution. Given enough tokens, this is very much detectable. A good detector will be able to detect all the top models. The frontier labs have no incentive to make the output undetectable. Is it foolproof? Nope. Someone who wants to beat detection can train a model that tries to cloak this signature. But 99% of the text / images out there will just use the base model and will be detectable. (That is, until some frontier lab makes avoiding detection a priority.)

I'm unable to convince normies that AI detectors don't work and probably will never ever work because of the basics of AI, which I think is:

More advanced models might be able to detect AI in inferior models (like GPT-5 reading GPT-3 output).

But an advanced model can't detect AI in the output of an equally advanced model, I think.

It doesn't matter though, normies want AI detectors to exist and work, so they do, to them.