Divyat Mahajan

357 posts

@divyat09

Ph.D. Candidate @Mila_Quebec | Visiting Researcher @AIatMeta | Former: @MSFTResearch @IITKanpur

What an awesome first day! Thank you all for joining and listening to our amazing speakers: @SchmidhuberAI, @sherryyangML, @cosmo_shirley, @Yoshua_Bengio, @ylecun, @mido_assran. World Models have beautiful days ahead. This is just the beginning 🫡

A lot is said about LLMs’ counterfactual reasoning, but do they truly possess the cognitive skills it needs? Introducing Executable Counterfactuals, a code framework that (1) shows frontier models lack these skills (2) offers a testbed for improvement via Reinforcement Learning

New work: a scalable way to learn distributions over permutations/rankings. The method can trade off compute and expressivity by varying # NFEs (i.e., unmasking more than one token at a time), and subsumes well-known families of models (e.g., the Mallows model) arxiv.org/abs/2505.24664

EXCITED to share the release of two foundation models for electrocardiogram interpretation in @ehj_ed. We built DeepECG-SL and DeepECG-SSL, two open-source ECG foundation models trained on >1M ECGs and validated across 11 external datasets (881K ECGs). 🔗 academic.oup.com/eurheartj/adva…

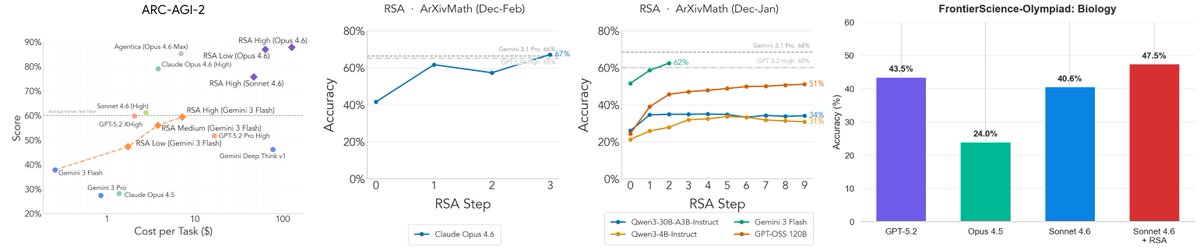

Recursive Self-Aggregation (RSA) + Gemini 3 Flash scores 59.31% on the public ARC-AGI-2 evals, placing it firmly among the top performers! Here are the highlights: > Outperforms Gemini DeepThink at about 1/10th the cost > Bridges the performance gap with GPT-5.2-xHigh for a similar cost > Nearly matches Poetiq while using a much simpler pipeline. Poetiq uses scaffolded refinement (often via generated code), while RSA does not Also, Gemini 3 Flash is impressive; the Gemini team cooked with this one! We're also eager to run evals with GPT-5.2 + RSA in the future (anyone with credits? :P)

Offline RL is dominated by conservatism -- safe, but limiting generalization. In our new paper, we ask: what if we drop it and rely on Bayesian principles for adaptive generalization? Surprisingly, long-horizon rollouts -- usually avoided in model-based RL -- make it work. 🧵

One day we’ll be able to decompose the loss curve of a neural net into all of the quanta it learns along the way - this is one of my fav streams of fundamental research. Really promising line of work

Reward models make or break post-training for multimodal omni models (e.g., nano banana), yet there’s surprisingly little research on that‼️ We’re releasing MMRB2: new reward benchmark focusing on omni models, spanning T2I, editing, interleaved, and thinking with images 🧵1/n